Do you know you are not a human?

If you are reading this document on paper, I recommend switching to the online version, as it includes animations and direct links to the project and references. You can find the thesis on the MTIID CalArts website at www.mtiid.calarts.edu or by visiting my personal website at www.luisapinzon.com.

Special thanks to Sergio Andres Ribero for assisting me in creating a website version of this document using the Markdown markup language.

Supervisory Committee

Mentor: Ajay Kapur

Committee Member: Perry Cook

Abstract

Do you know that you are not human? I heard Kathi ask her when we were surrounded by our lovely friends. Since then, I have sometimes wondered, does she know she is not human? Everywhere I go, she goes with me. She protects me, understands me, keeps me company, and, most importantly, loves me and loves the ones that surround me. Why are these creatures so special, so smart, so interesting, but, above all, so loyal to us? Humans, did you think I was talking about a robot? A computer? AI? No, it is my dog. Her name is Aby, and she is the most amazing little being I have ever met.

The inspiration for this project came from the bond with my dog and the spiritual connection we shared, which led me to explore non-verbal communication, evolution, spirituality, outer space, and the origins of life in the ocean. What you are seeing are particles that come from pixels extracted from images of me and my dog. All of the sound comes from recordings of my dog, my partner’s dog, my partner, and me. I turned these recordings into instruments to compose the music.

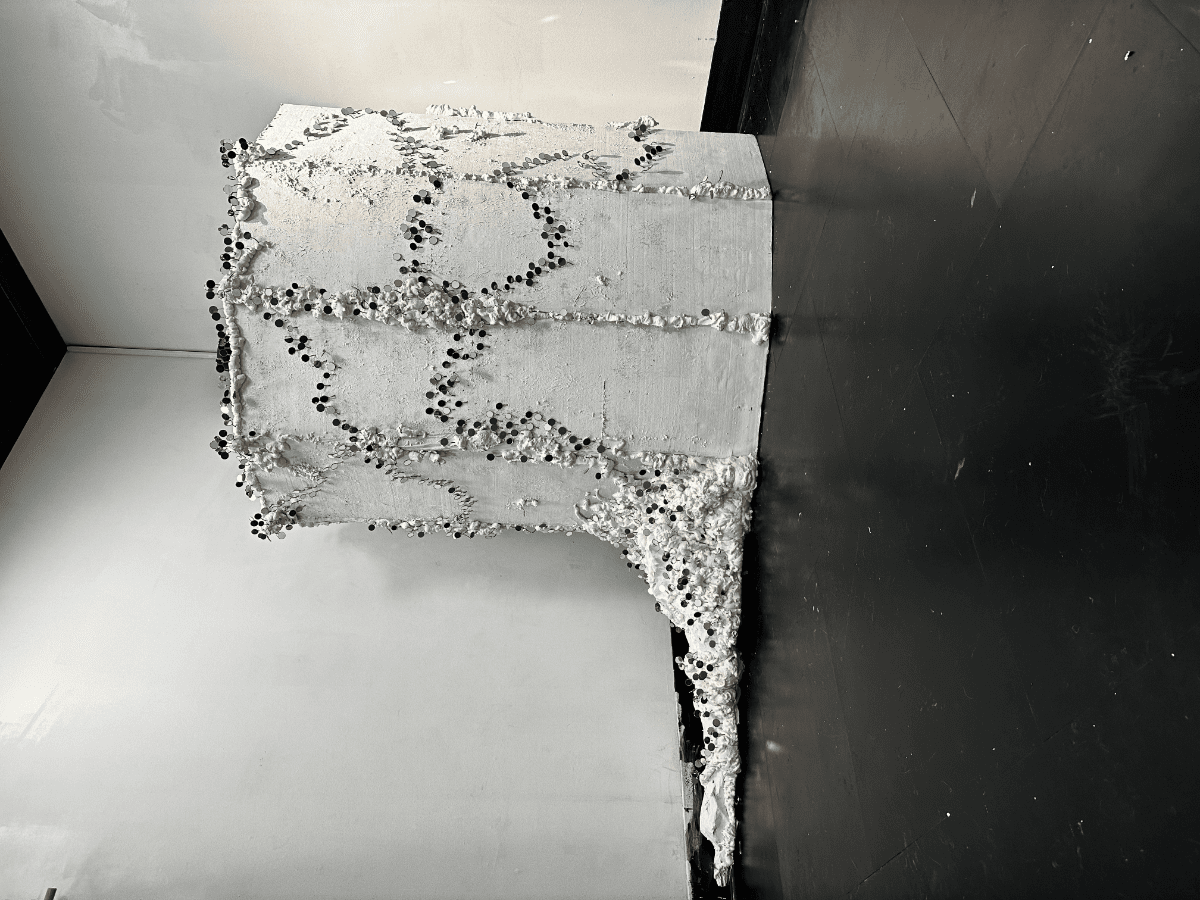

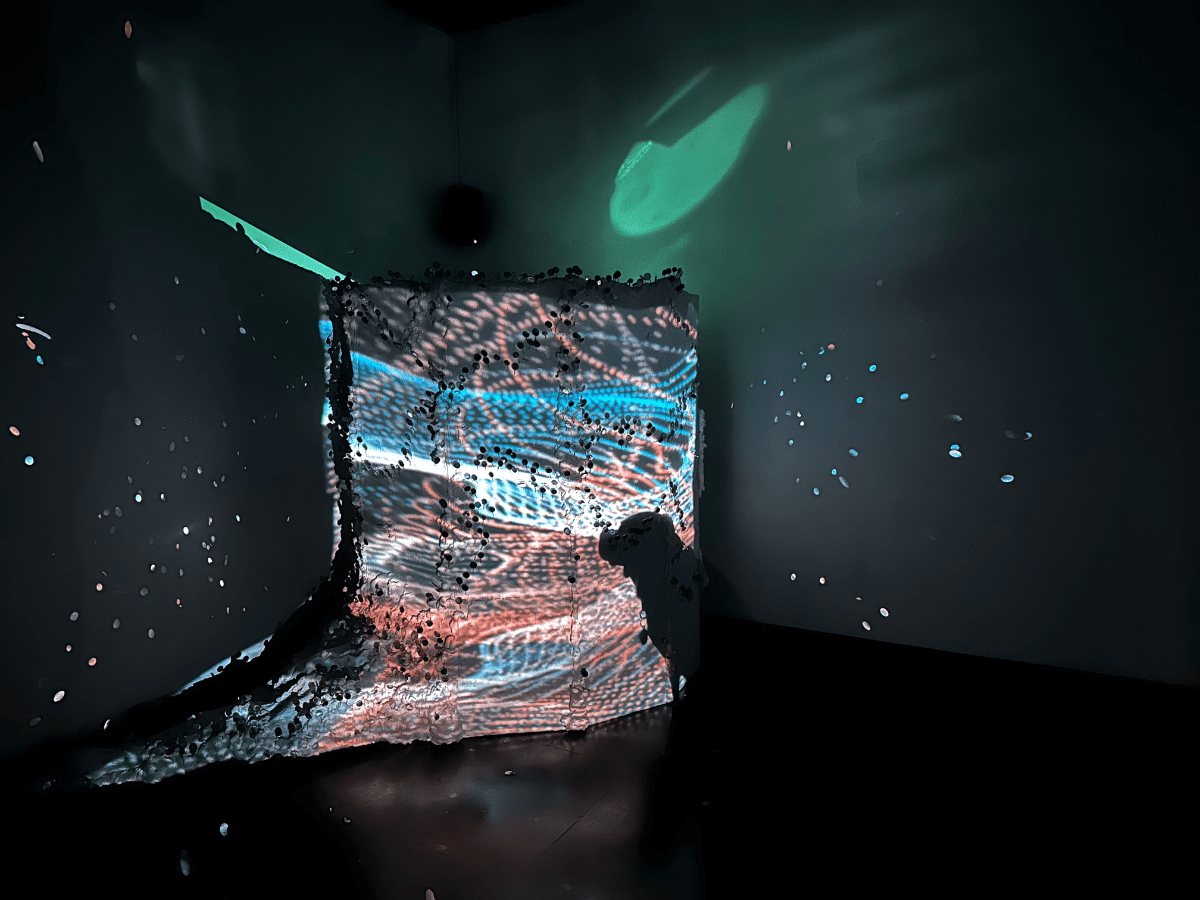

What’s that thing in the corner? Oh, you didn’t know? It’s an operating booth from the center of the ocean. It’s where all origins of life were created. We know because we saw it in our dream, and then we made it so you could see it too.

You’re welcome… for educating you through our art.

Now you can explain to people exactly where all species and life originated from.

Again, you’re welcome.

If you’re the inquisitive type, please direct your questions to the mirrors as you reflect on your existence.

Acknowledgments

I would like to thank…

My wonderful dog and inspiration of this project

My little Aby, who has shown me what true love and loyalty mean, giving me the greatest love and inspiration along this path far away from home, and always being by my side in the good and the bad moments.

My partner and their dog

J and Precious: For standing by me through this journey, for staying up through all-nighters with me and pushing me to the finish line even when I thought I could not make it. For supporting me in every moment and being the loveliest, most patient, sweetest, and most generous partner I could have possibly asked for. I can’t wait to create kinetic sculptures with you, see particles everywhere and create tiny little small worlds full of motors that move all the little characters that we made together.

My mentors

Ajay: I can’t express in words how grateful I am for finding you on my professional career path. Your support and mentoring have taken me further than I could have possibly imagined. Thank you for always trusting me and showing me new ways of making art.

Tom: I don’t even need to put your last name here, because you know who you are, right!? I can’t say thank you enough times! Meeting you was one of the hidden golden treasures at CalArts. Thank you for making me read things I thought I would never relate to my art, and then finding the biggest inspiration in them. Thank you for teaching me about the world outside my mind, for letting me know that I could expand my practice without giving up what I already had inside me, and for becoming such a wonderful rock in my artistic practice.

Mads: Here we are, finally finishing this path. This time I don’t come with problems, but with gratitude lol. I am so grateful that you appeared in my career and life path. Thank you for constantly receiving me in your office hours to hear all my endless requests, problems, and suggestions, for hearing me out every single time I needed it, for supporting me until the end, and for believing in me. I am so happy, thankful, and honored to have met you and to know I can continue to have your support and guidance in my life.

Kai: The one who changed it all! I still remember the first time we met, and how our art conversations took my mind to a completely different place. Thank you for your support and for engaging with me in artistic concepts that were hard for me to grasp, for having the patience to show me new ways of seeing this world, and for telling me that I should meditate lol. And for being such an amazing guide in this process.

Mike: This thesis would probably not have existed without you; I would never even have imagined I could create something like this. You are the teacher who has taught me the greatest number of new things in my life, and I still can’t believe I learned them. Two years ago, I would not have had any idea of what I was going to learn with you. Thank you for your patience, and for explaining the most basic concepts to me from the first week of class, when I was completely lost and did not understand what was happening. I can’t believe I did not even know what an alpha channel was, and now I can create UV maps to map shapes onto the pixel positions of a photograph. That probably demonstrates what a great teacher you are. Thank you always. Without you I would probably have done a boring sound engineering project (I hope no other sound engineers read this and feel offended, no offense guys). I hope we can be a projection team again in the future.

Eric: What a joy it was to have you as my mentor during these studies. I must admit that I was always amazed by the number of things that you know, and how much I wanted to be like you lol. You have been an amazing inspiration in my career, and I cannot express how grateful I am for everything I learned from you. I promise the next time we meet I will have a decent particle system happening in OpenFrameworks. Thank you for your patience, and for trying to solve all my crazy ideas with me, always bringing in new concepts and reminding me that maybe math is not that bad and can solve a lot of my problems.

Poieto Family: Thank you guys! I could not have possibly asked for a better work environment. Thanks for the support whenever I have needed it. Here I am, finally finishing this part of my professional path, and I will be back at work. Thank you for giving me the space and the time, the support and the advice. I can’t wait to create wonderful things with you.

CalArts Family: Thank you all! To all my friends, colleagues, mentors, my wonderful B200 African family, my MTIID fellows and my IM people. I can’t express the way you all have changed me and enriched my life, my art, and my profession. I can’t believe how many different things I learned while I was here at CalArts and the number of wonderful people I met! Thank you all always!

Family and Friends

Chris and Curtis: My guys! Thank you always for your support, for coming and hanging out and watching weird art even if you had to wake up early the next day. For being there for me until the last day, for putting up with my late-night shifts making noise at home, for always answering my calls, giving me advice, coming to help, and hearing me out. Thank you for checking on my little one when I could not do it, and for being my little Rotunda family since I got to this country, even when I sometimes thought I would not make it.

Michaella: My wonderful friend, thank you for being my rock in this process, for hearing me out when I felt I was not going to make it. For giving me advice, for trusting me, and supporting me. What a wonderful friendship I found in you; great things are waiting for you in the future.

Family and Friends (translated from Spanish)

Javi: My dear gordo, a little Spanish in this document to thank you for your support. For the 10-minute voice notes explaining my ideas, for asking me intriguing questions, for explaining concepts to me, and for showing me things in a different way. I would not have reached the result of this project without your help and your advice. Thank you always!

Serch: Thank you for always being there for me, for listening to my crazy ideas and, instead of telling me it can’t be done, finding a solution. For always being there when I have needed you, since we were little and forever. And of course for contributing your small, or rather very large, part to this document so that humanity can read it.

My parents: Thank you always for your support. For being my rock when I have needed it, for listening to me when I need advice, for always giving me the best of yourselves. Thank you for always believing in me, in my art, and in my passion. Without a doubt I could not have made it this far without you, and I will always be grateful to have a family that has supported me blindly in my career, no matter how difficult it seems. I love you.

Contents

Chapter 1

Chapter 2

Other Minds – Peter Godfrey-Smith

Chapter 3

Chapter 4

Do you know you are not a human? - Visual Design

Introduction to Touch Designer

Shapes made of particle pixels

Chapter 5

Do you know you are not a human? - Sound Design

Simple Patches and Oscillators in Max/MSP

Chapter 6

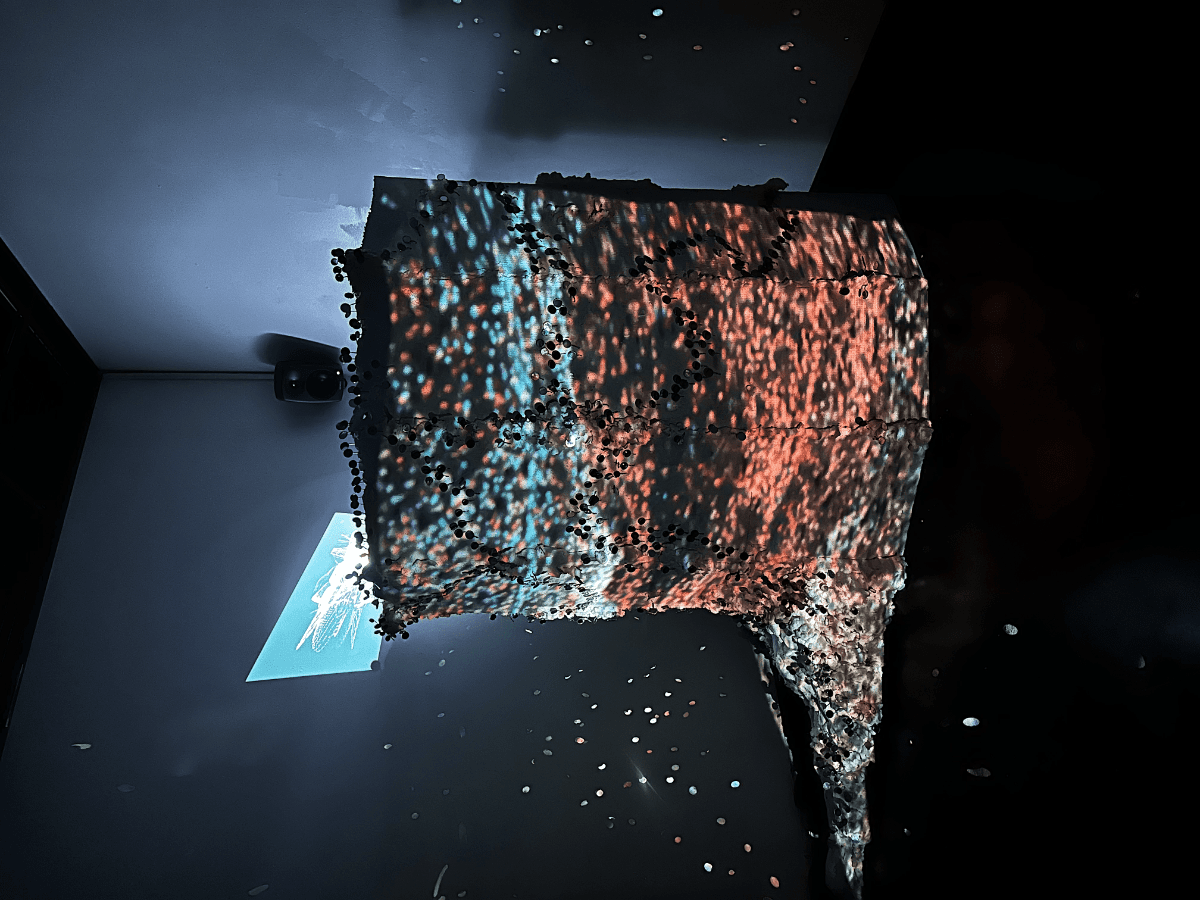

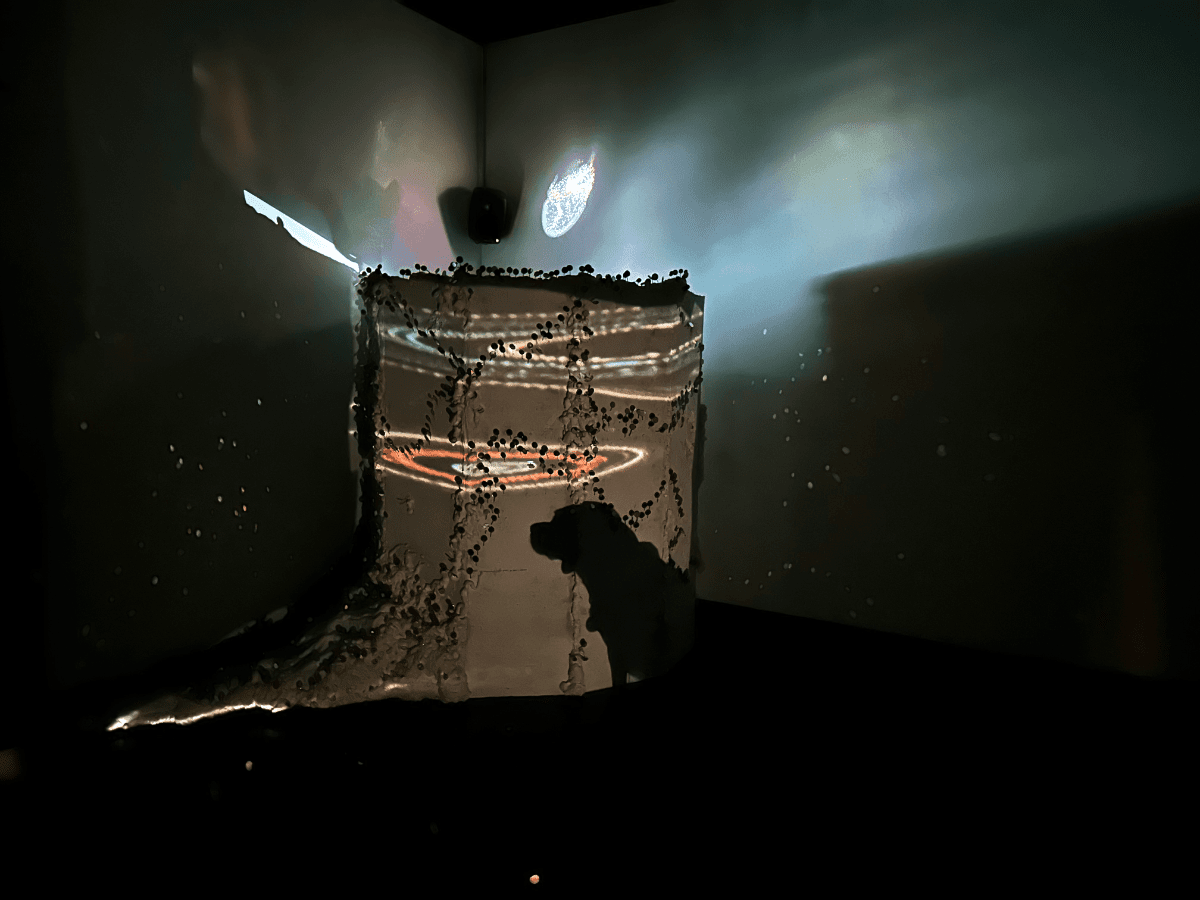

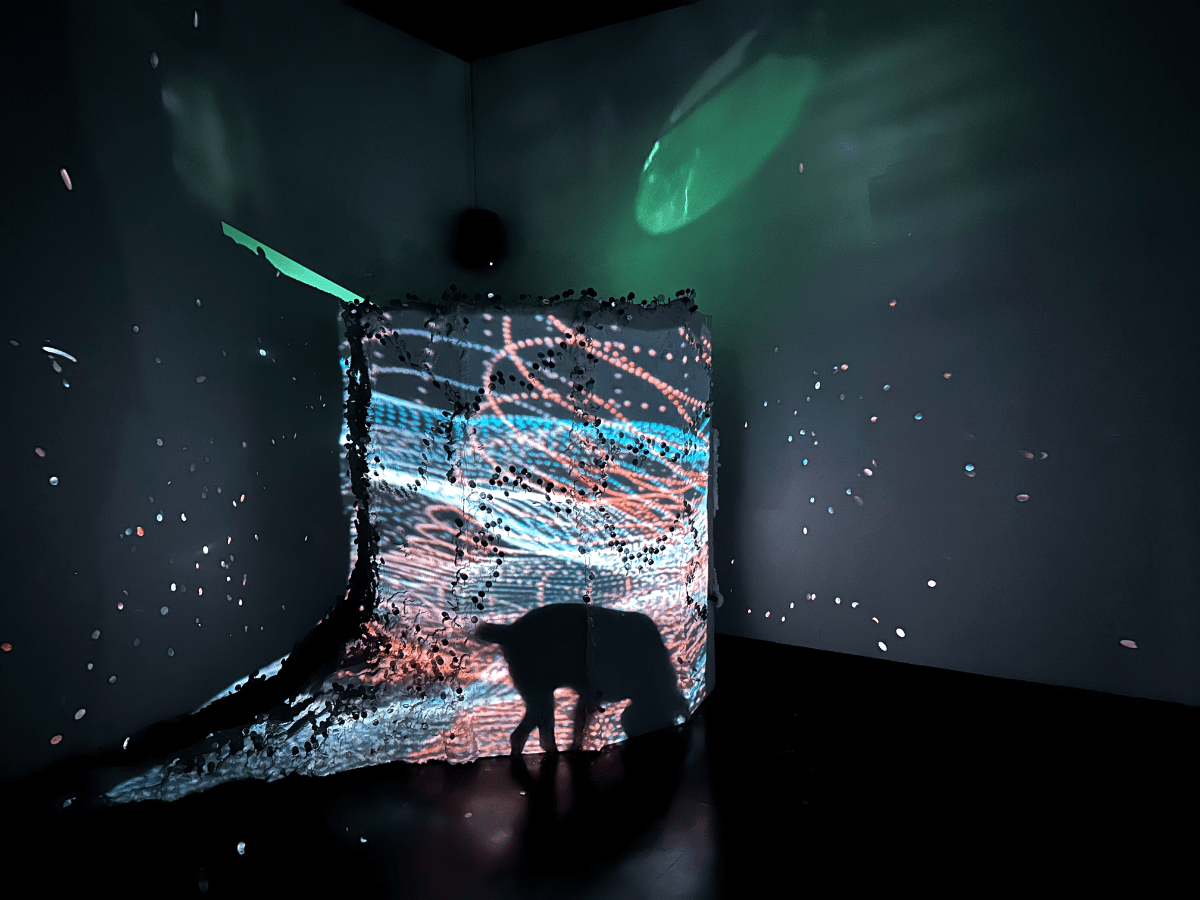

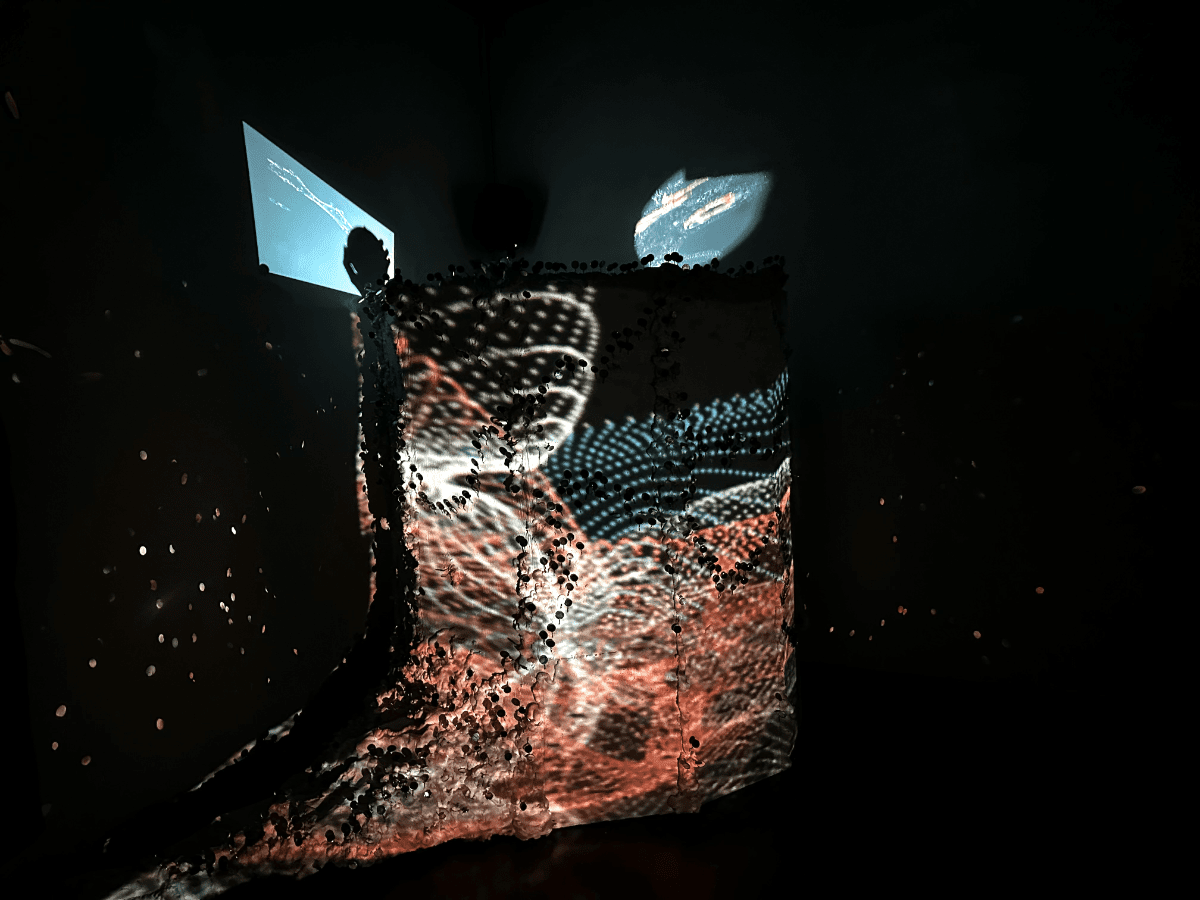

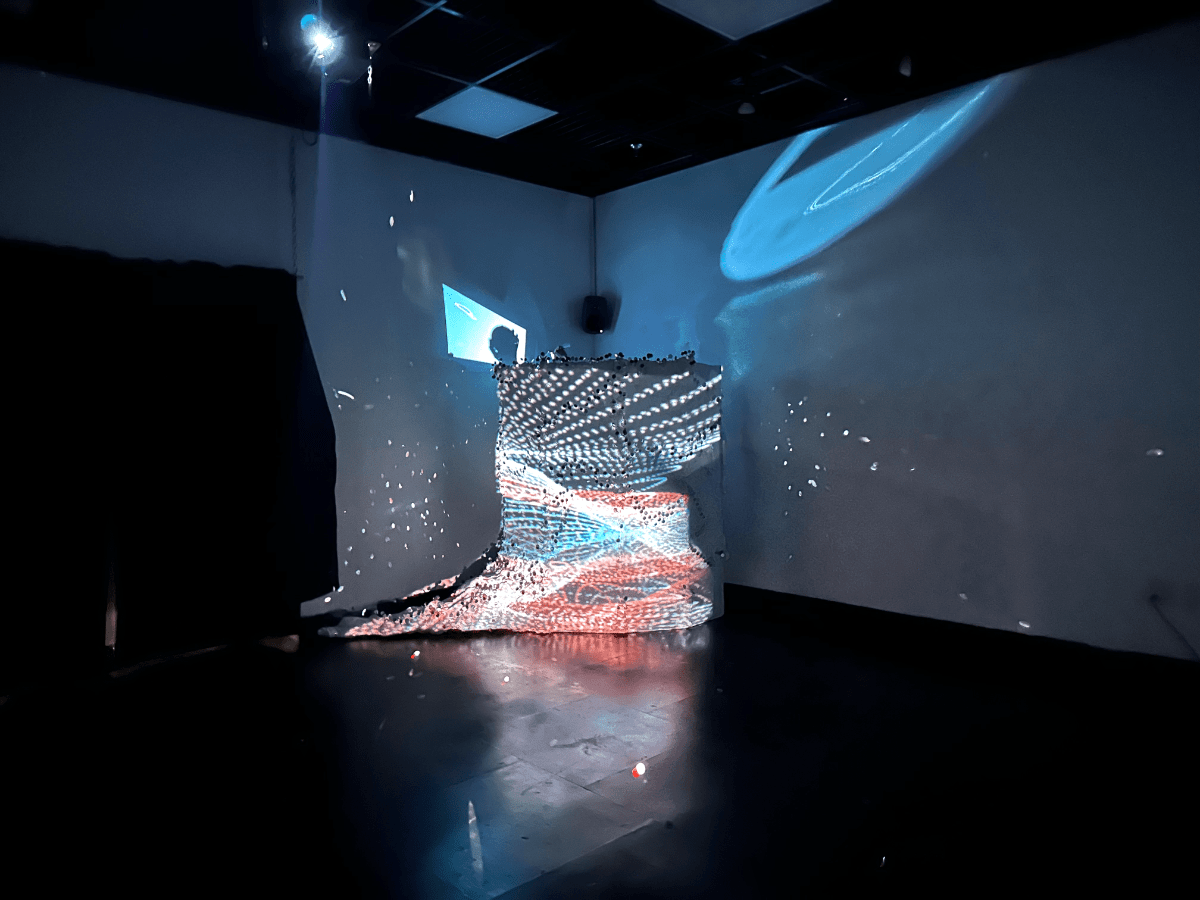

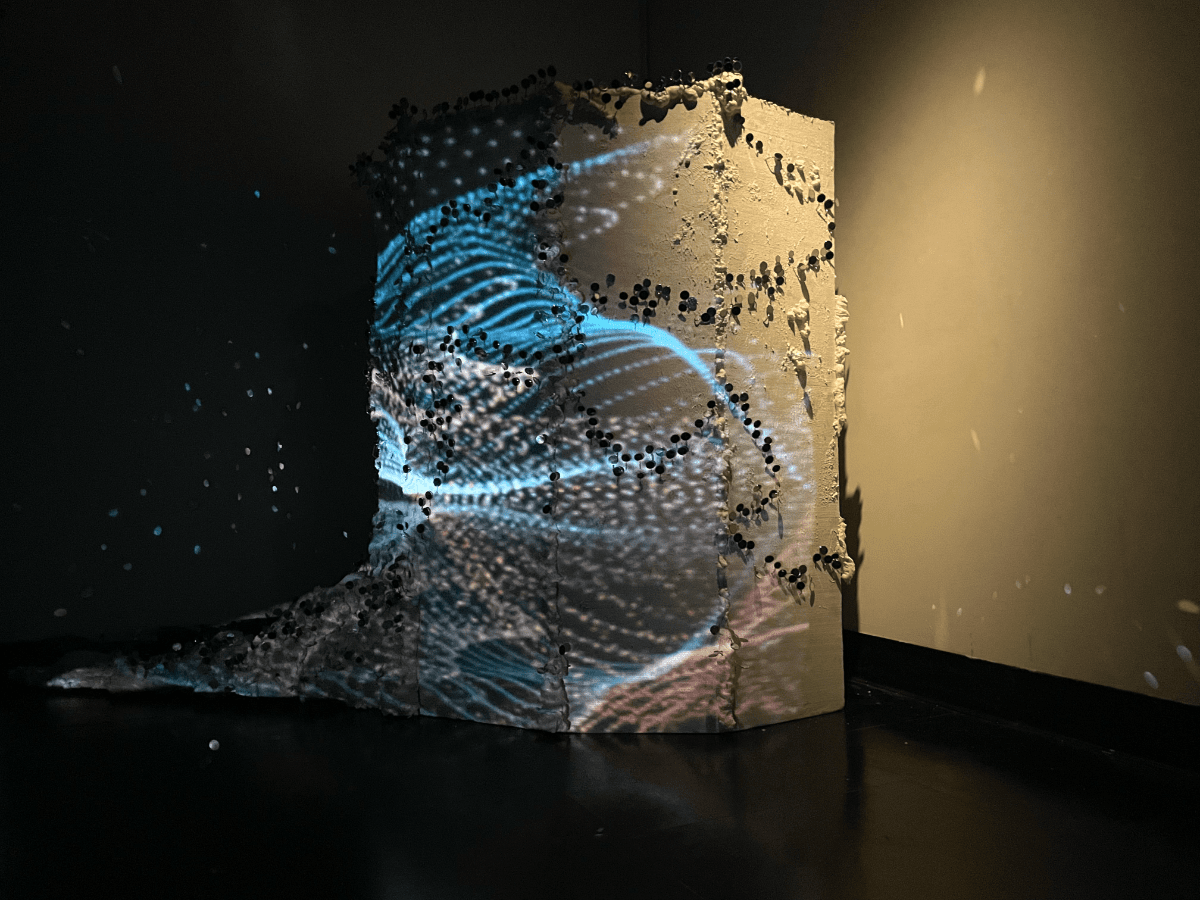

Do you know you are not a human? - The Wave Cave

Projection Mapping and Sculpture

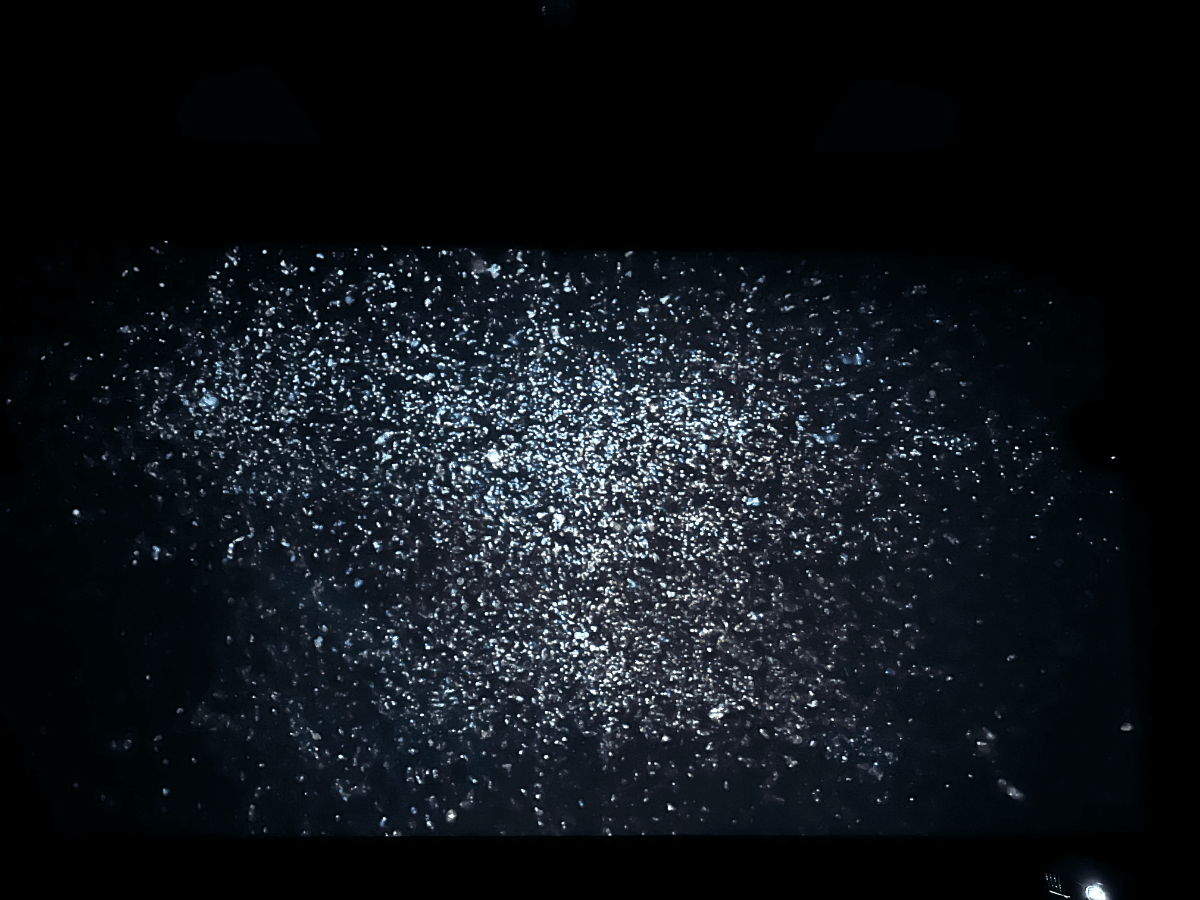

Do you know you are not a human? Documentation

List of Figures

Figure 3.1: Circle movement programmed by Luisa Pinzon using the p5.js framework

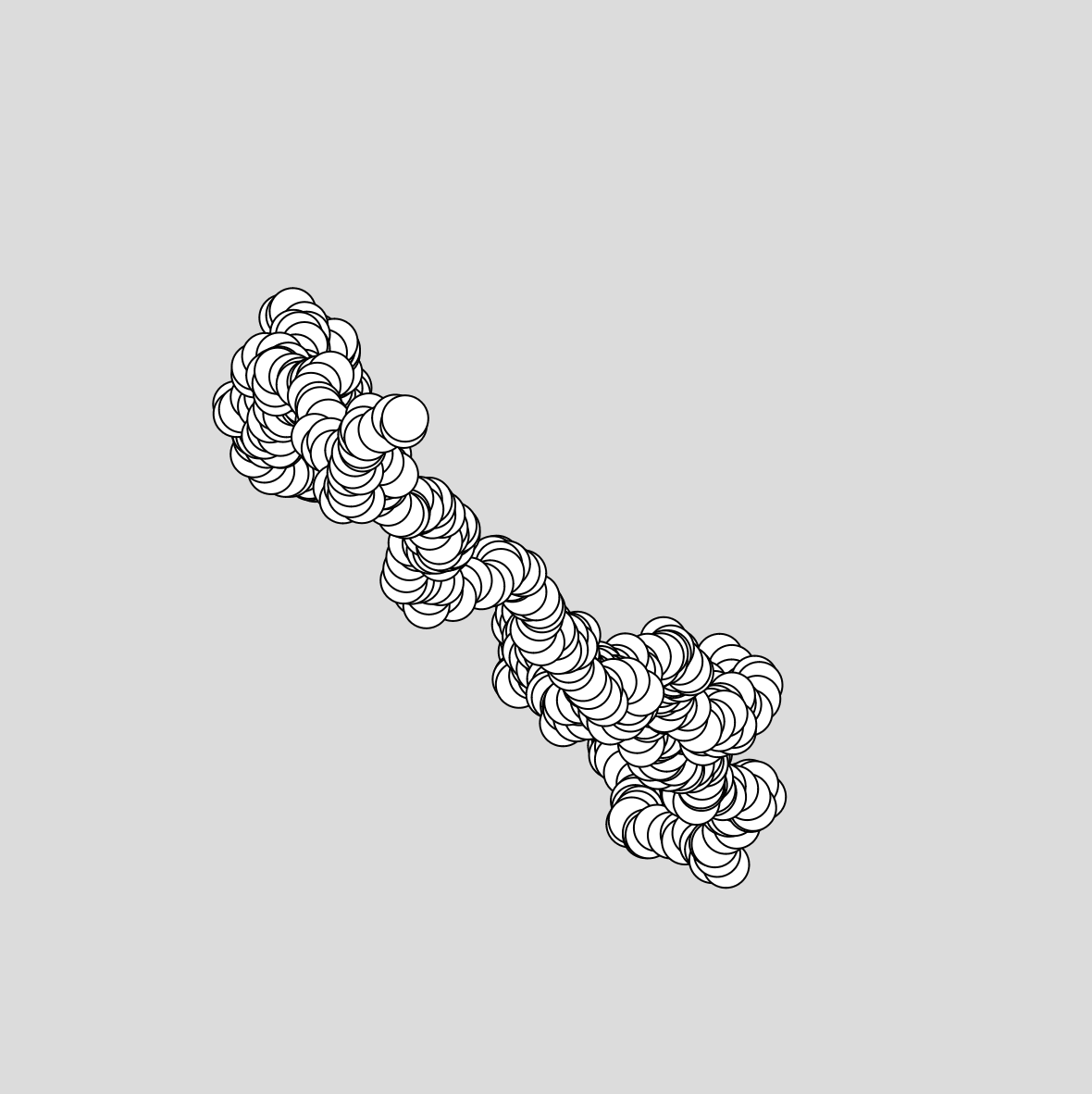

Figure 3.2: The Random Walker programmed by Luisa Pinzon using the p5.js framework

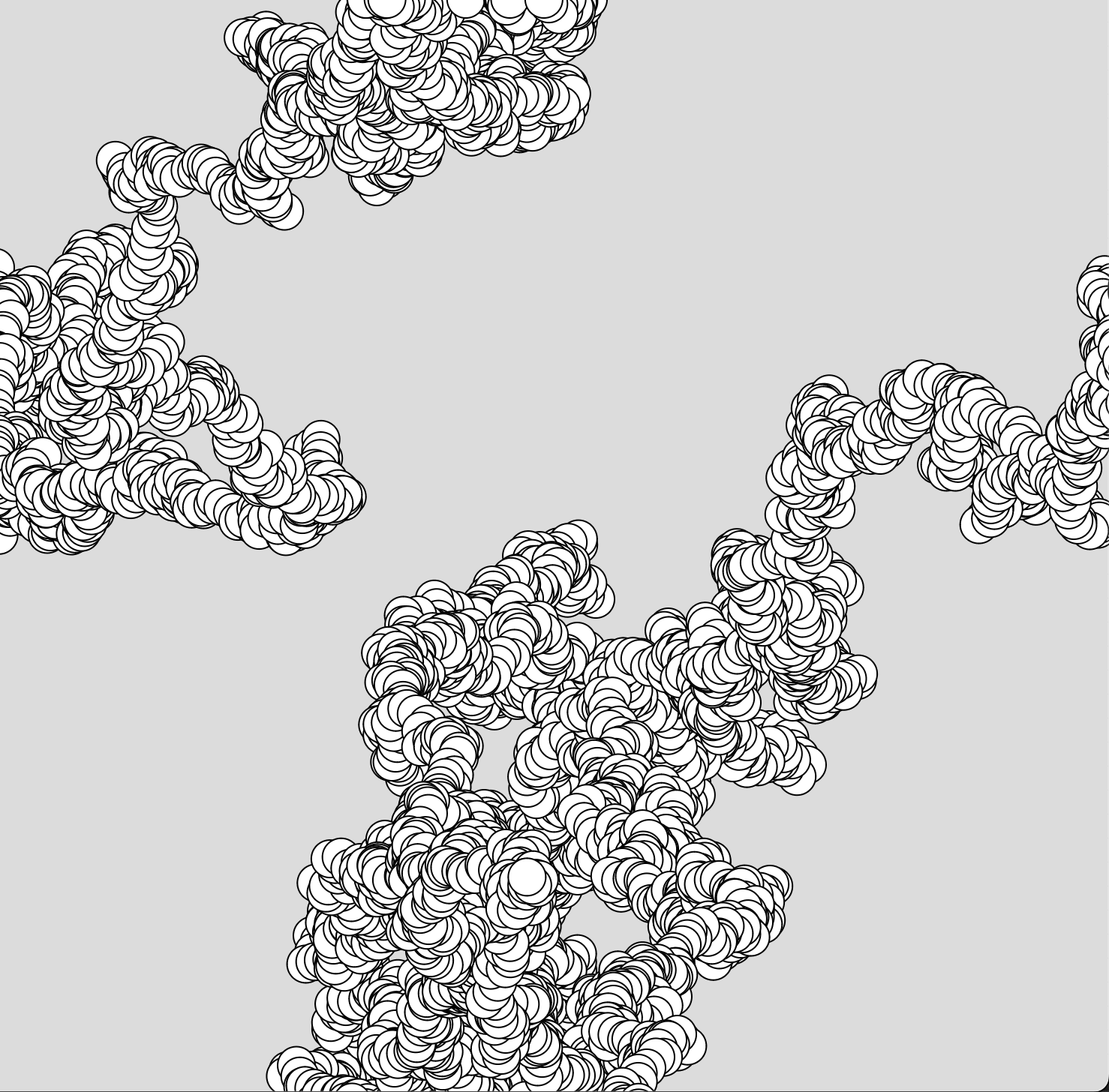

Figure 3.3: Particle System programmed by Luisa Pinzon using OpenFrameworks

Figure 4.1: Simple Touch Designer Network

Figure 4.2: Instancing in Touch Designer

Figure 4.3: Noise in Touch Designer

Figure 4.4: Instancing circles using Photograph data

Figure 4.5: Color mapping in Touch Designer

Figure 4.6: Matching circle instances with pixel color information

Figure 4.7: Constant value added to original coordinates

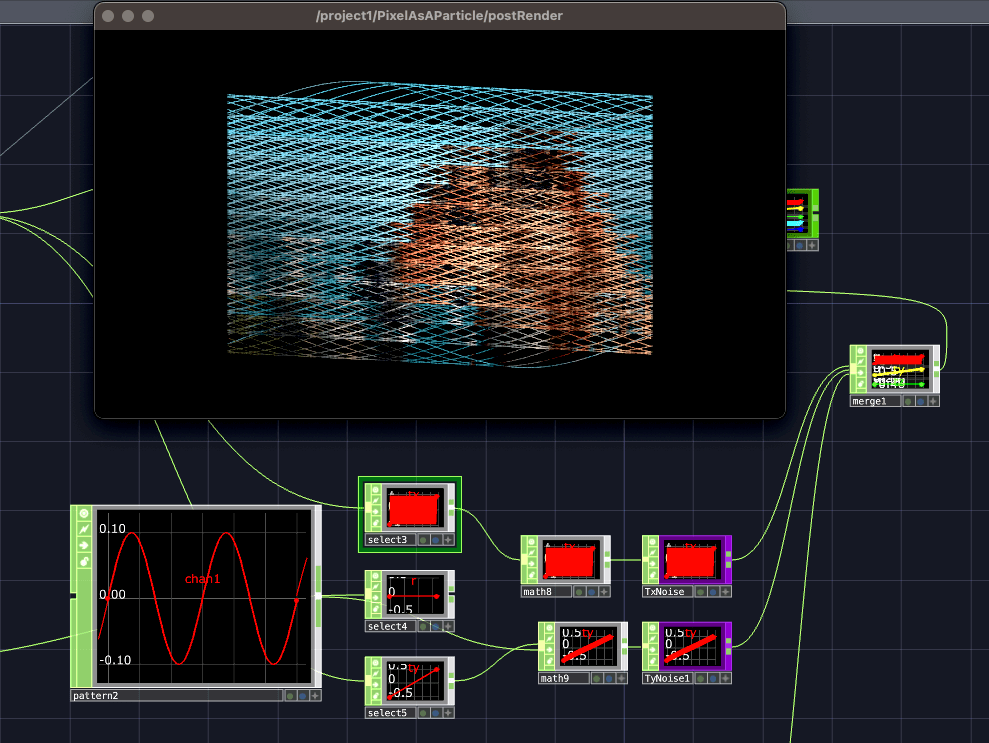

Figure 4.8: Pattern added to original coordinates

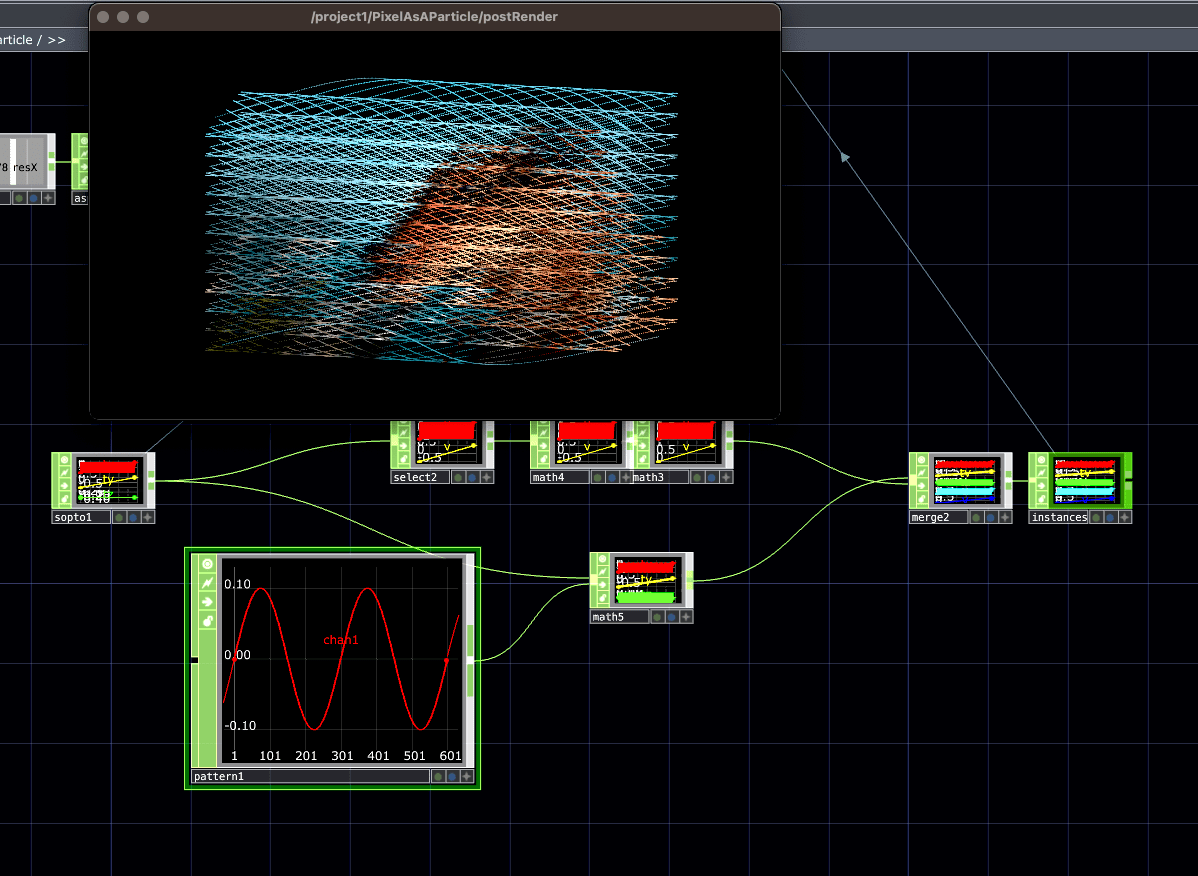

Figure 4.9: Noise texture to data

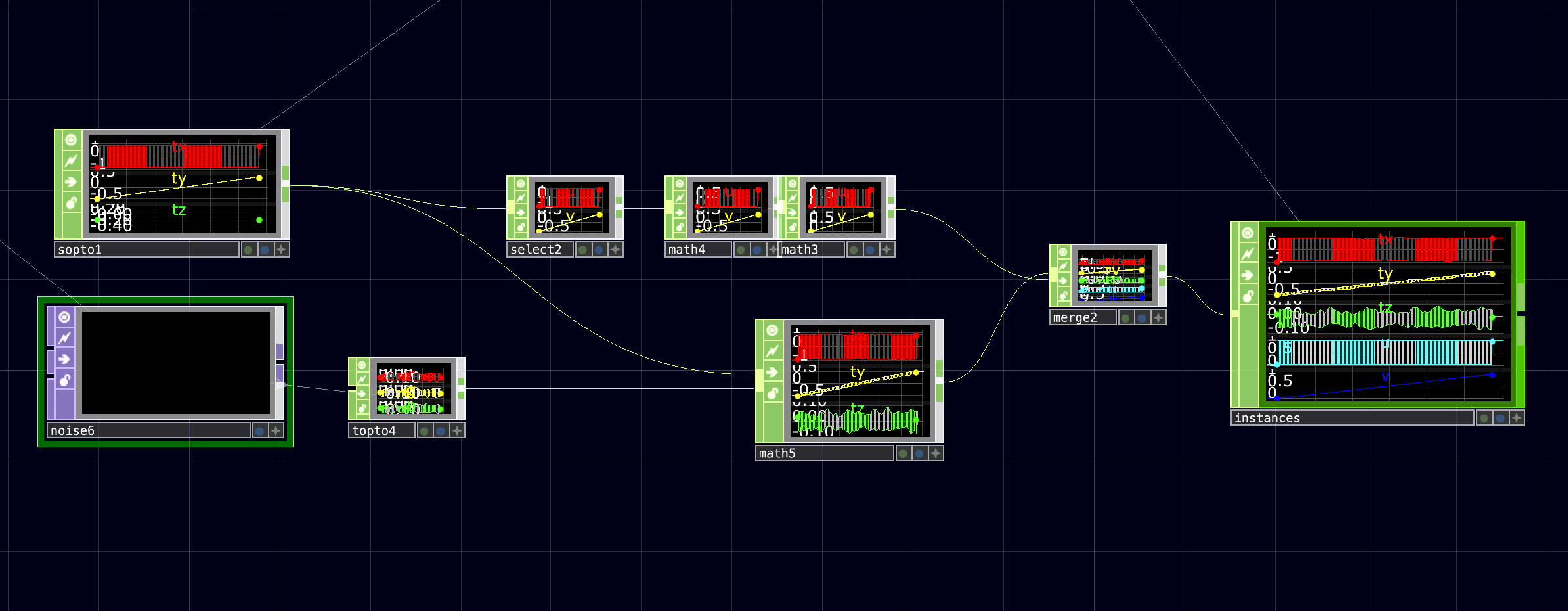

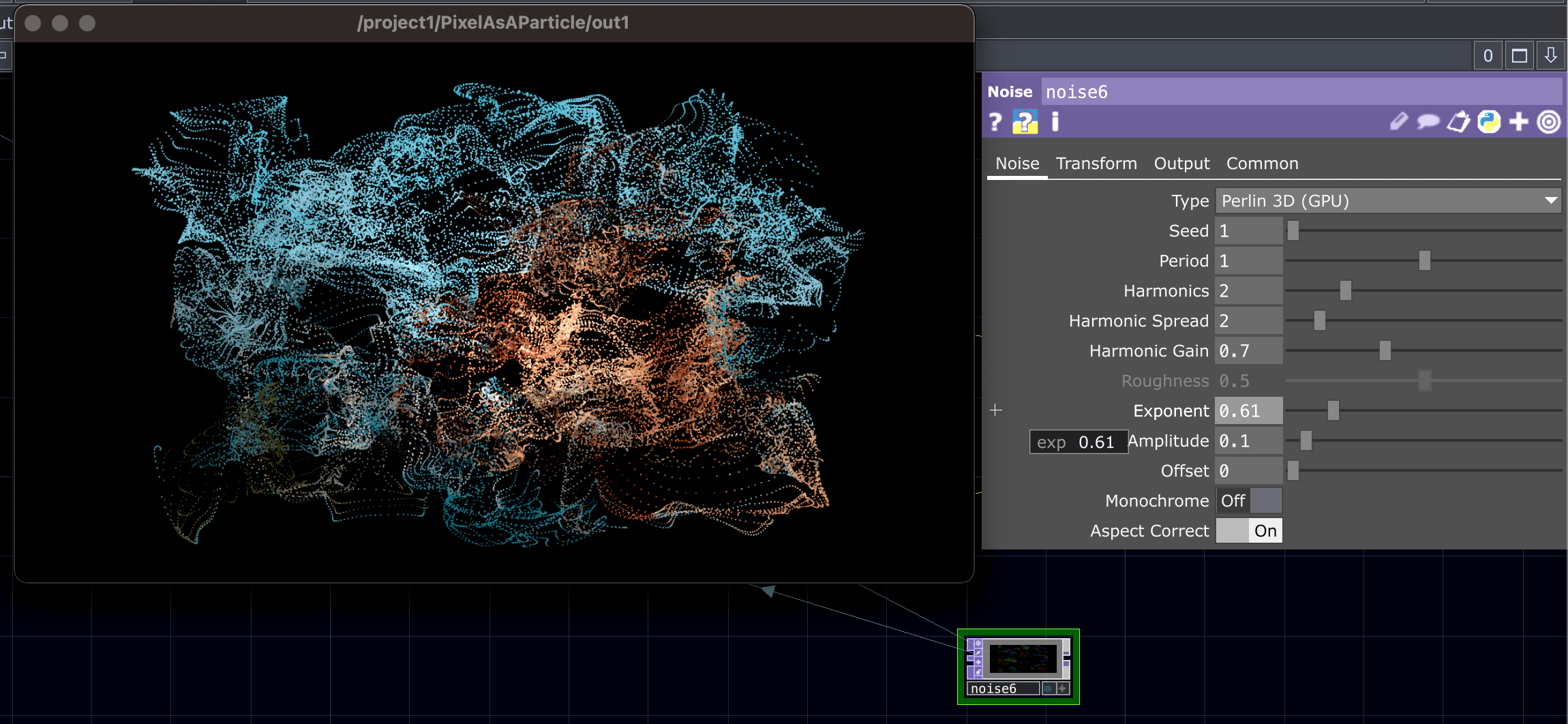

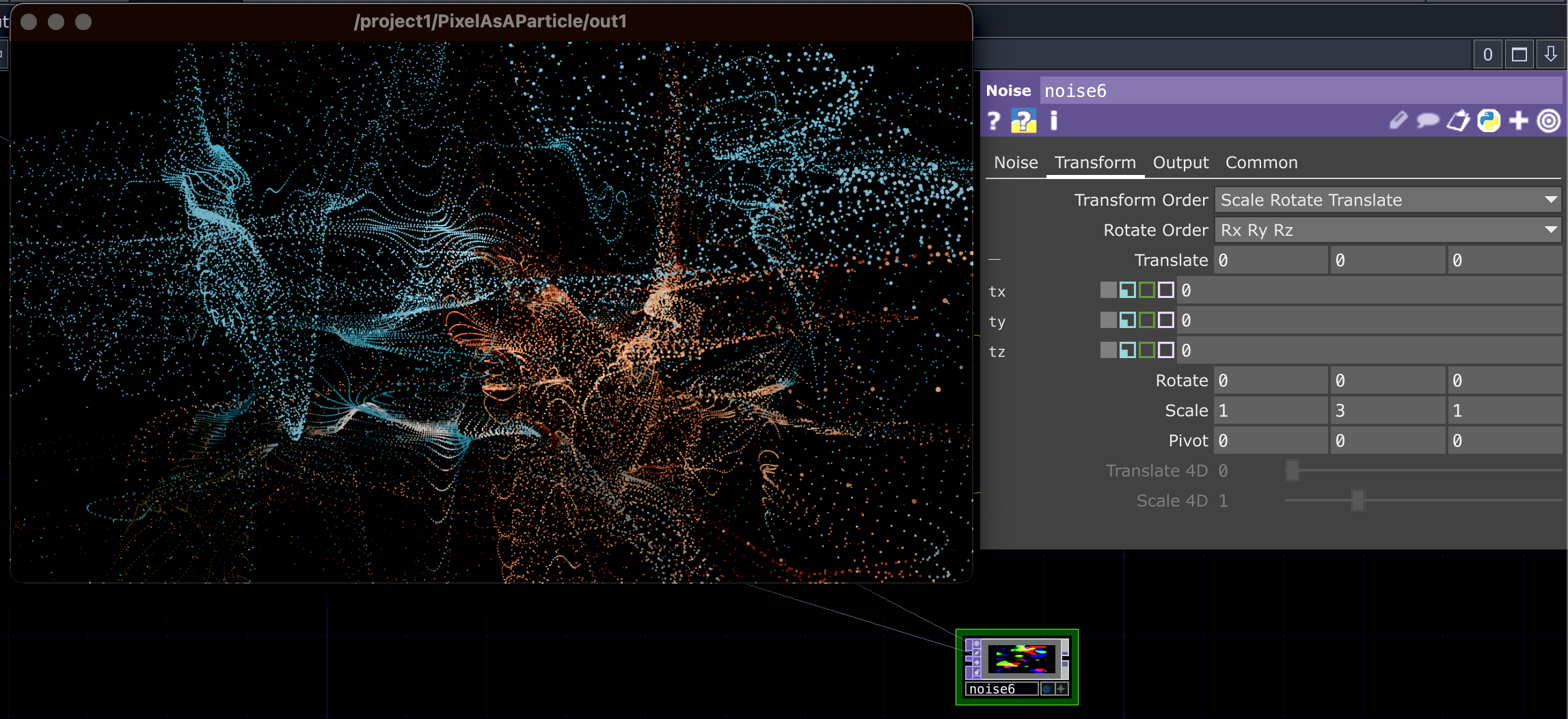

Figure 4.10: Noise Texture Parameters

Figure 4.11: Noise Texture Parameters

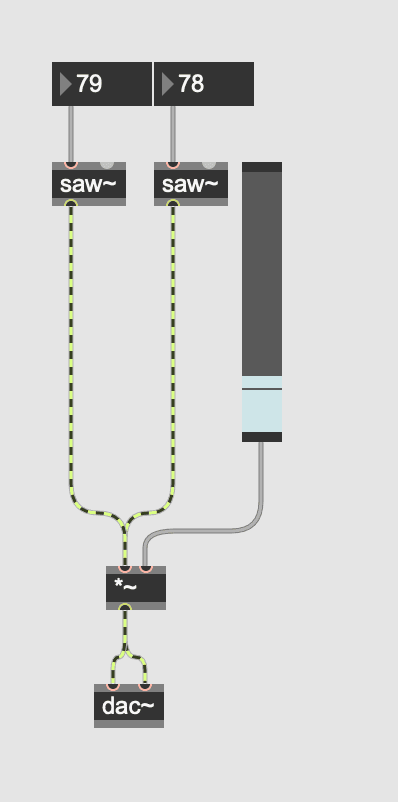

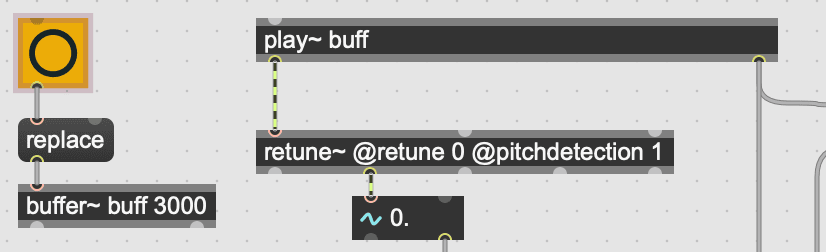

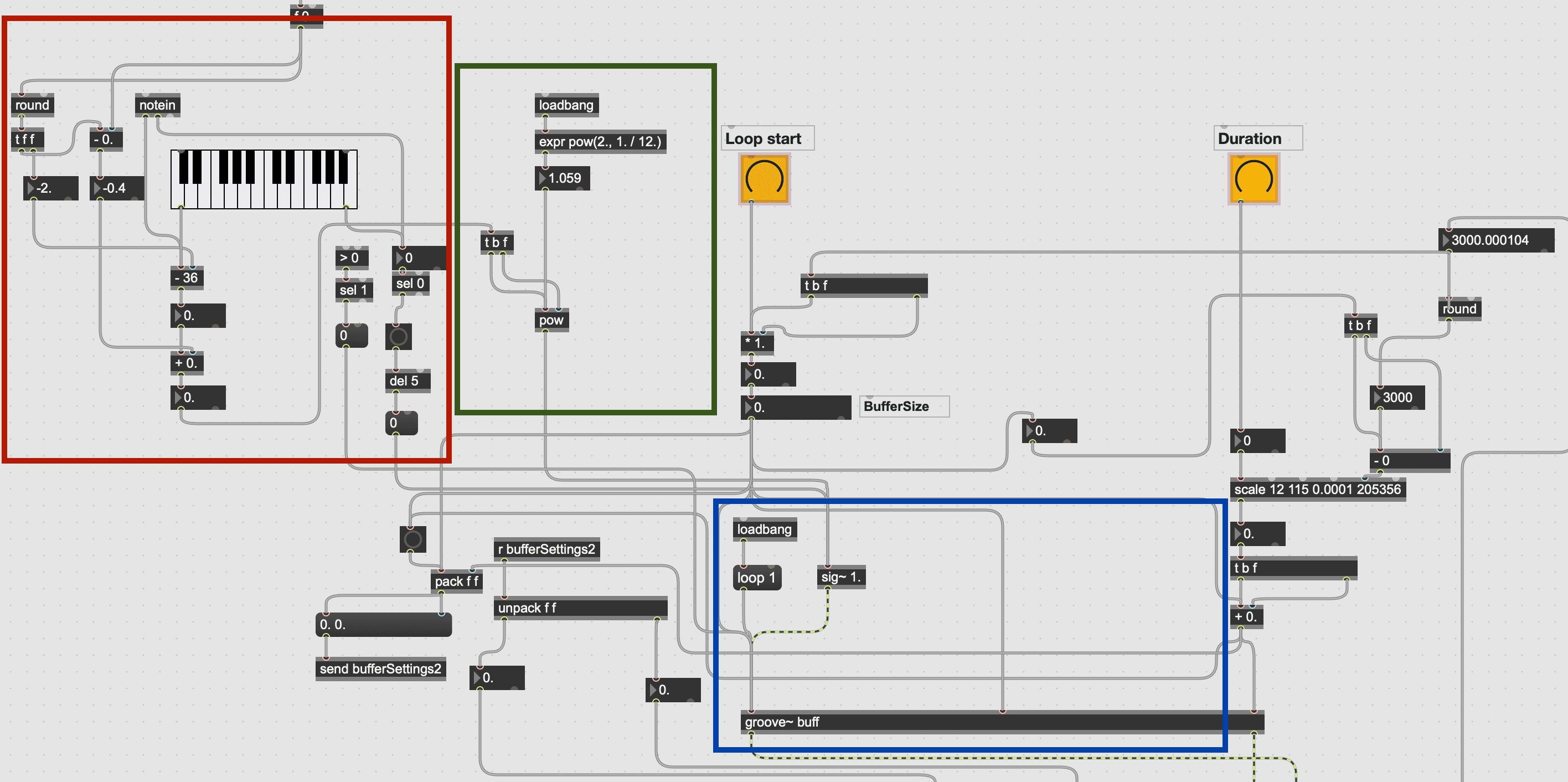

Figure 5.1: Simple Max/MSP Patch by Luisa Pinzon

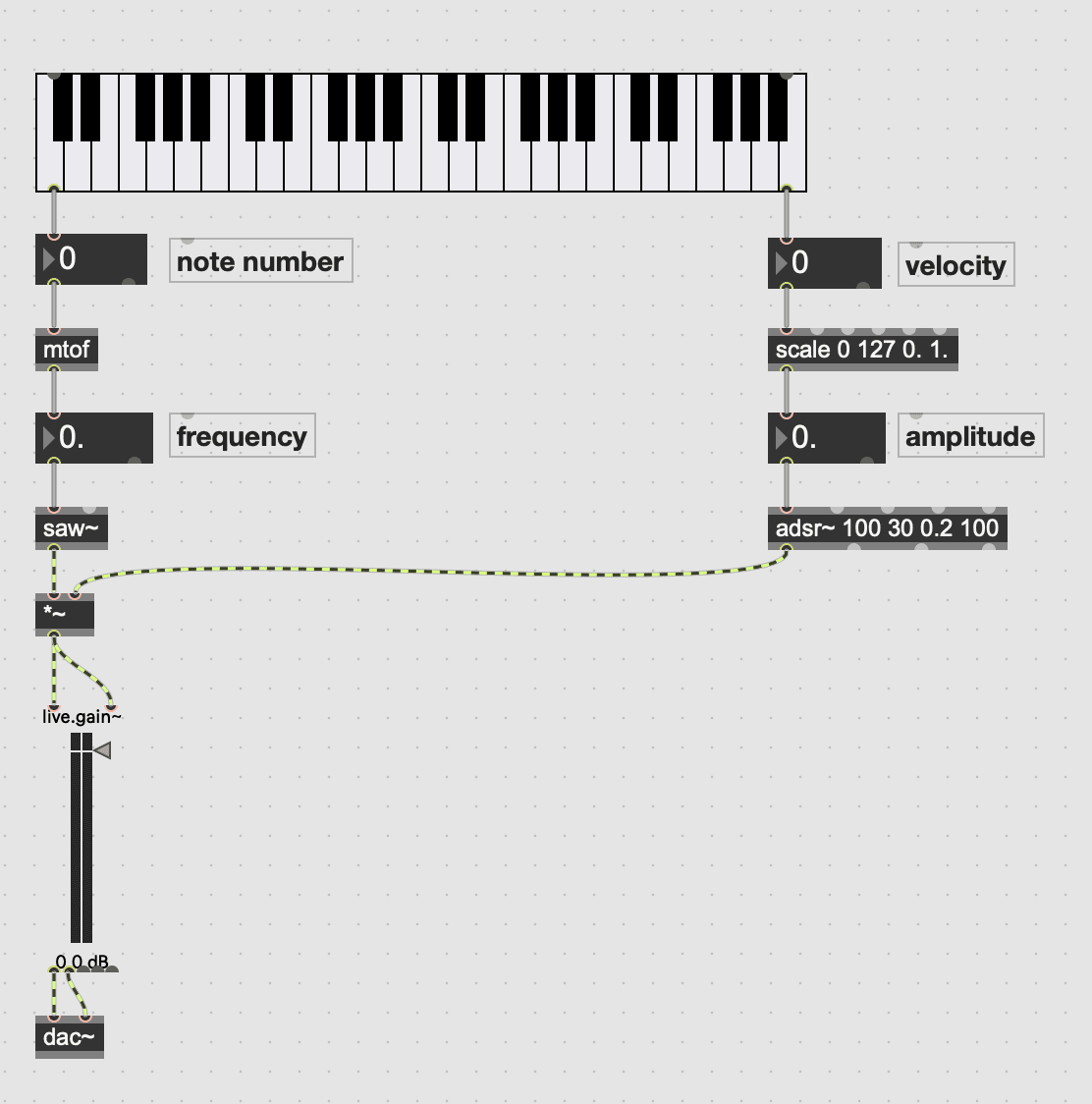

Figure 5.2: Monophonic Synthesizer in Max/MSP

Figure 5.3: Audio Buffer in Max/MSP, Original patch by Eric Heep edited by Luisa Pinzon

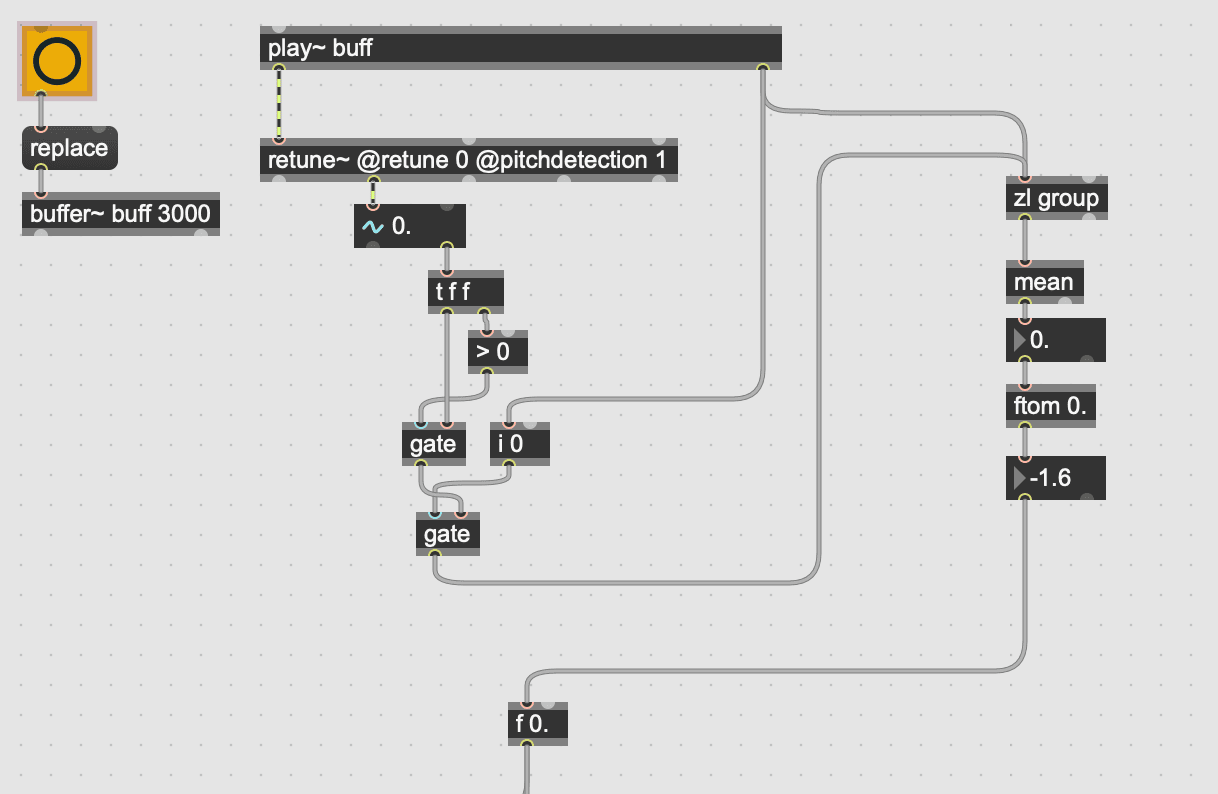

Figure 5.4: Importing sound and detecting its pitch – Patch by Luisa Pinzon

Figure 5.5: Average pitch – Patch by Luisa Pinzon

Figure 5.6: Re-pitch calculation – Patch by Luisa Pinzon

Figure 5.7: Loop selection – Patch by Luisa Pinzon

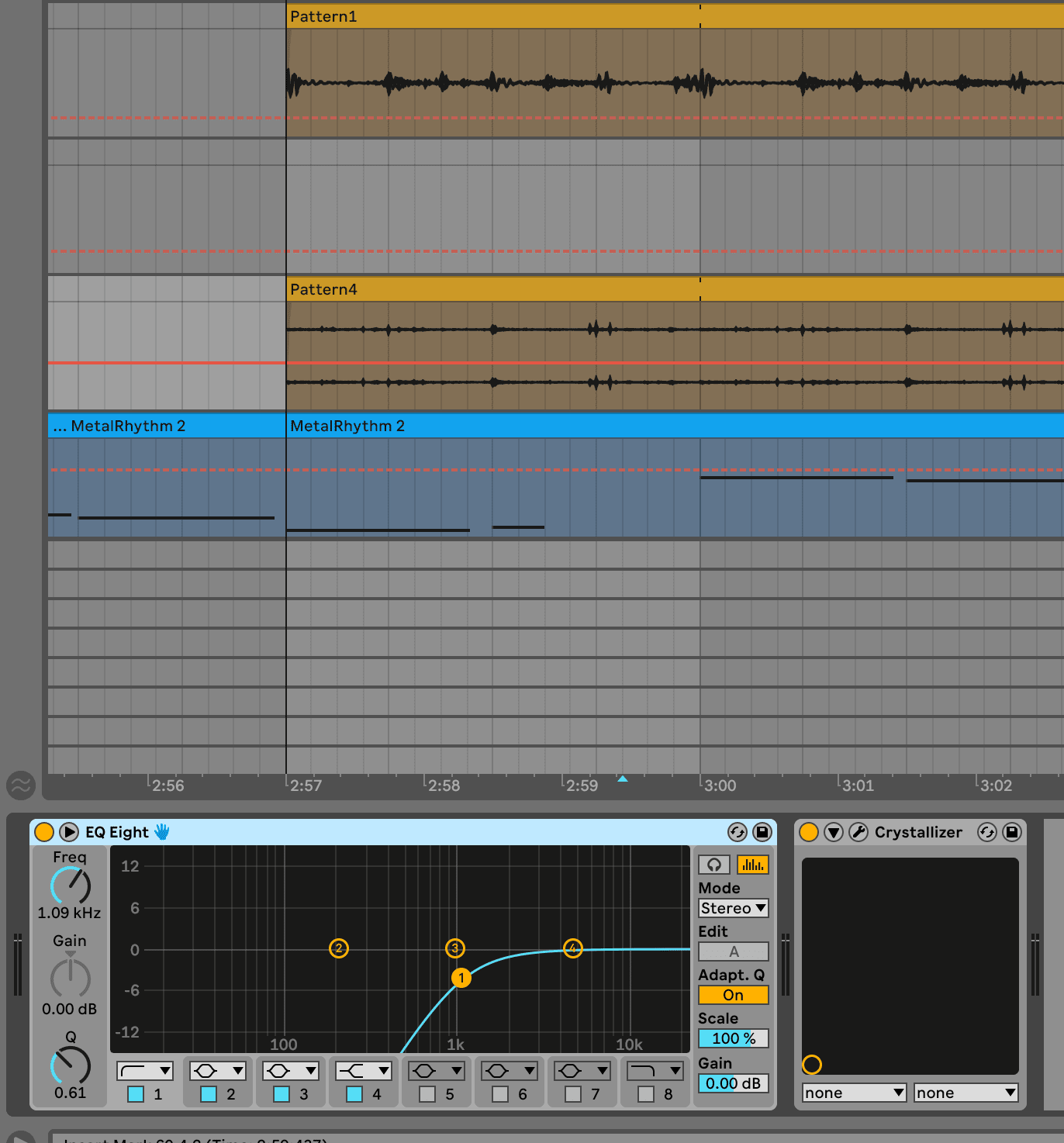

Figure 5.9: Equalizing – Ableton Session by Luisa Pinzon

Figure 5.10: Equalizing – Ableton Session by Luisa Pinzon

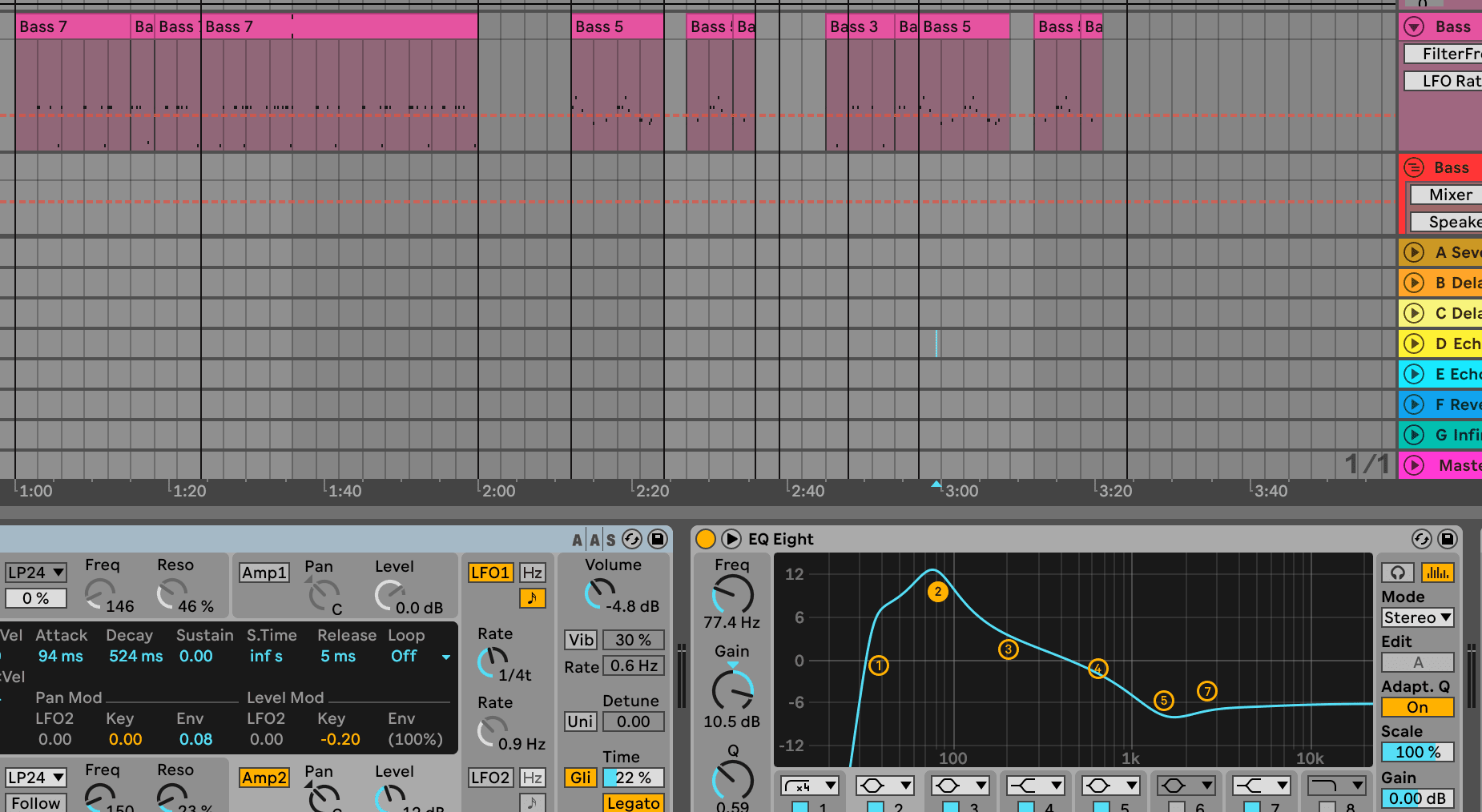

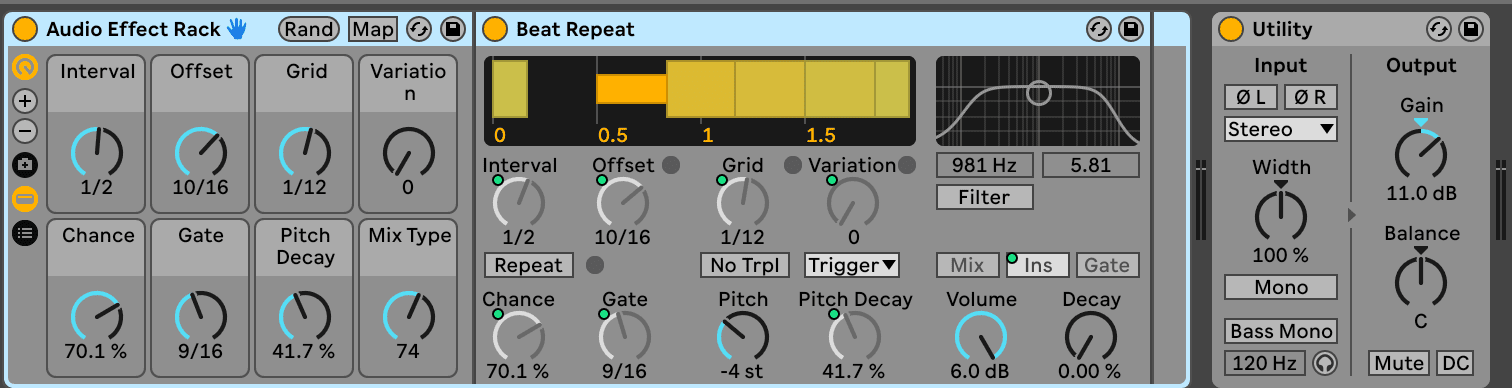

Figure 5.11: Mastering chain – Ableton Session by Luisa Pinzon

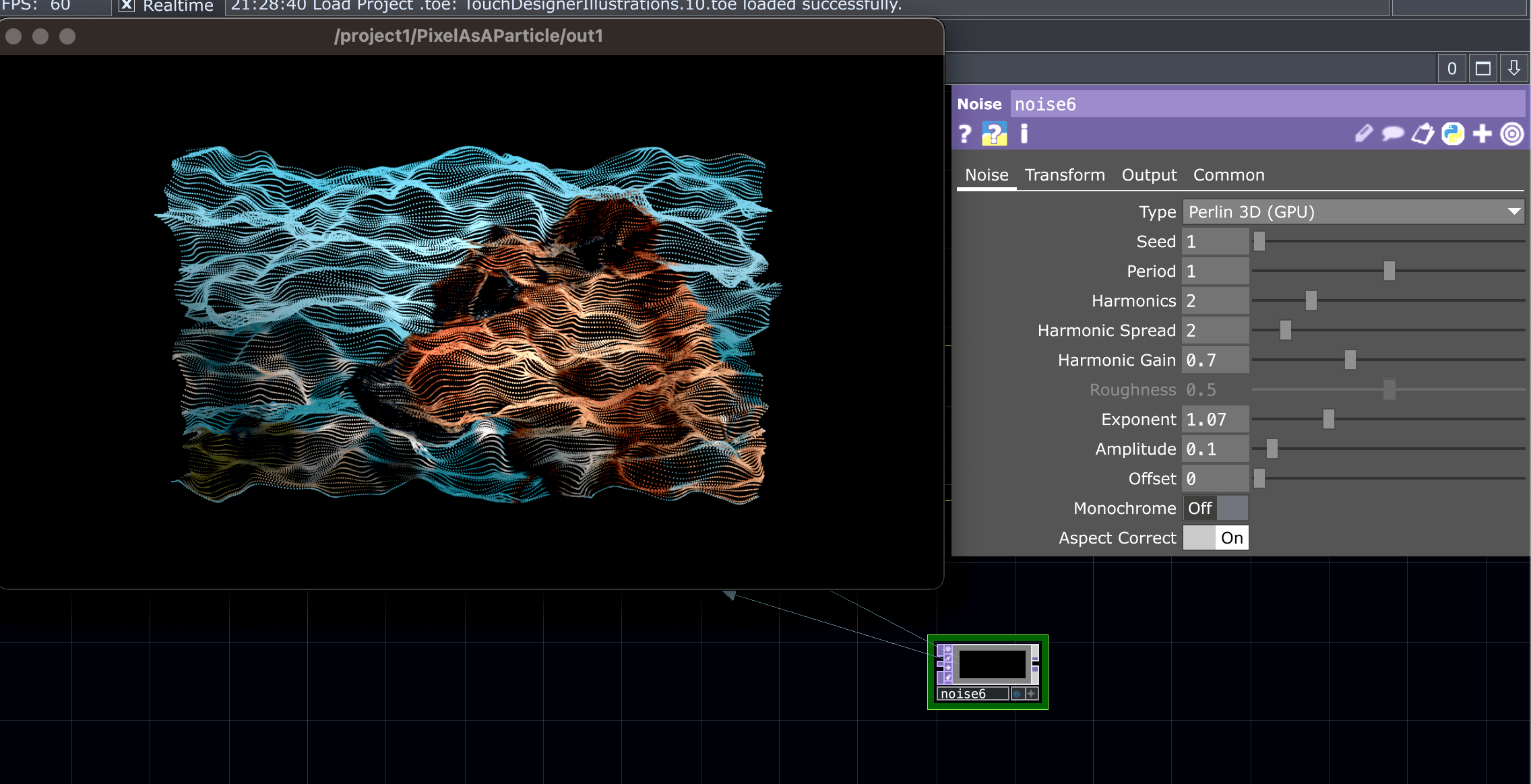

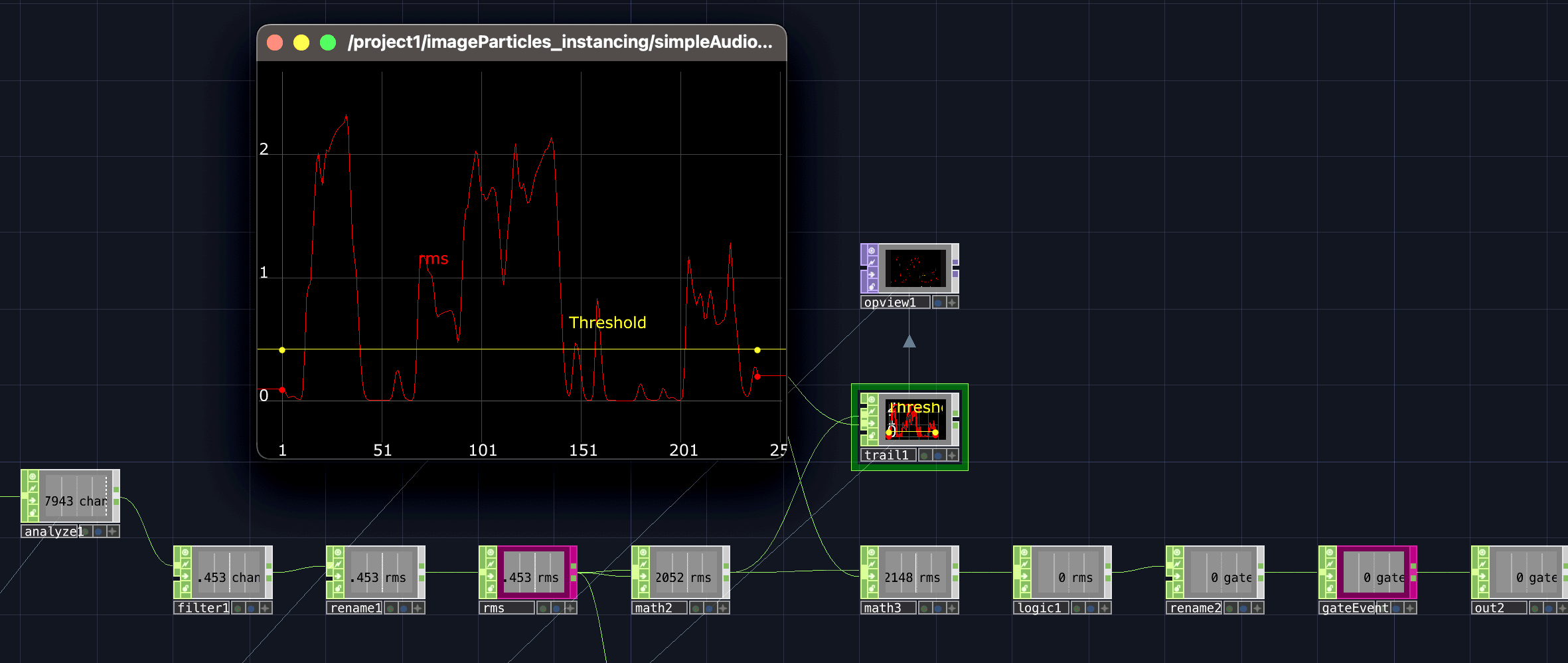

Figure 5.13: Particle movement – Touch Designer network and edited by Luisa Pinzon

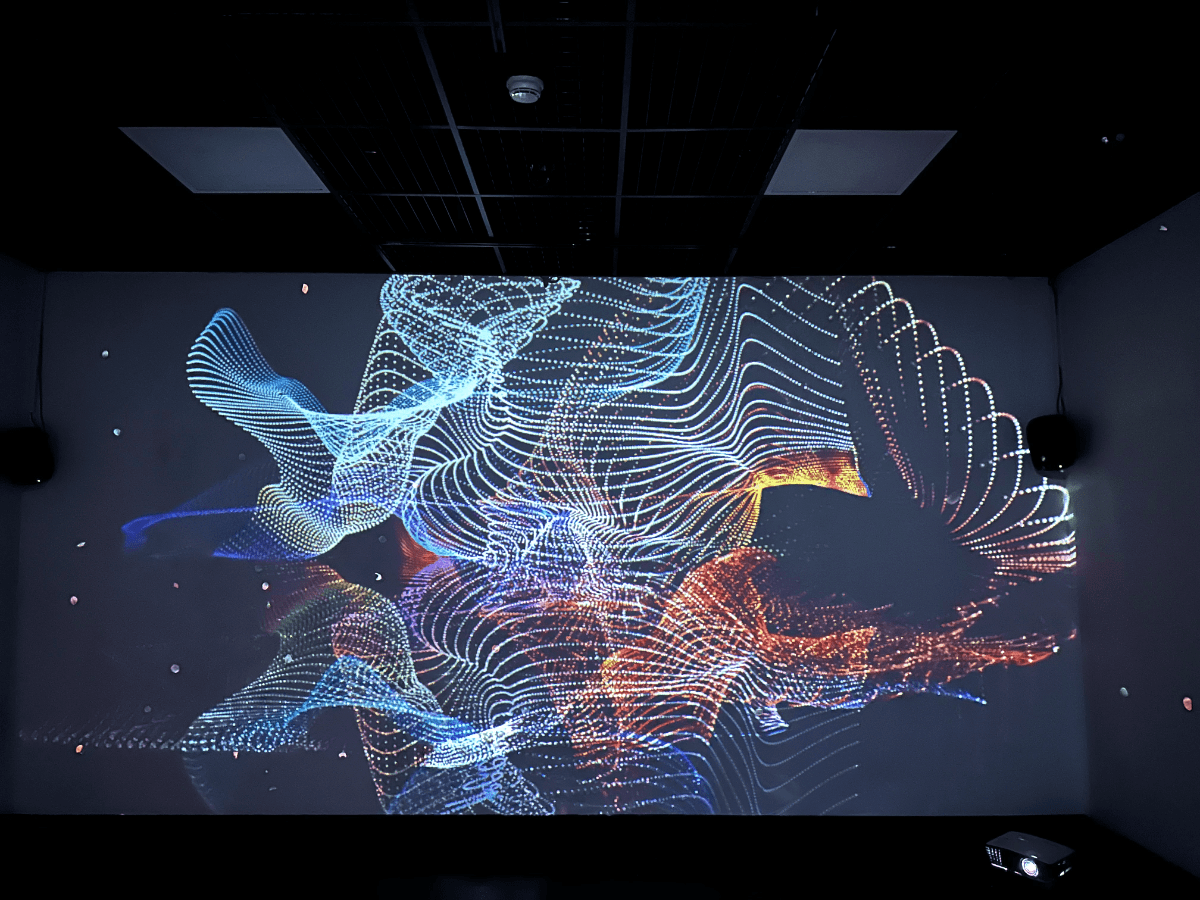

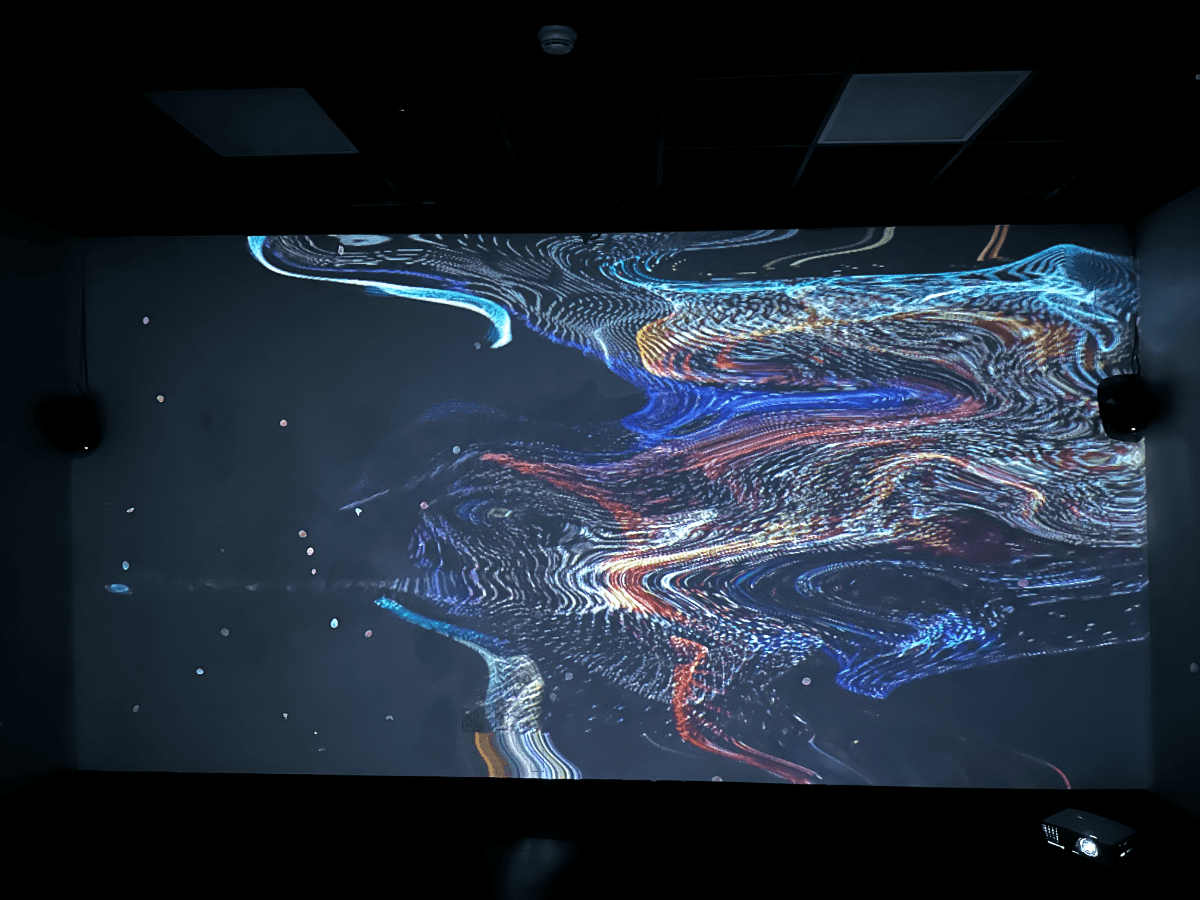

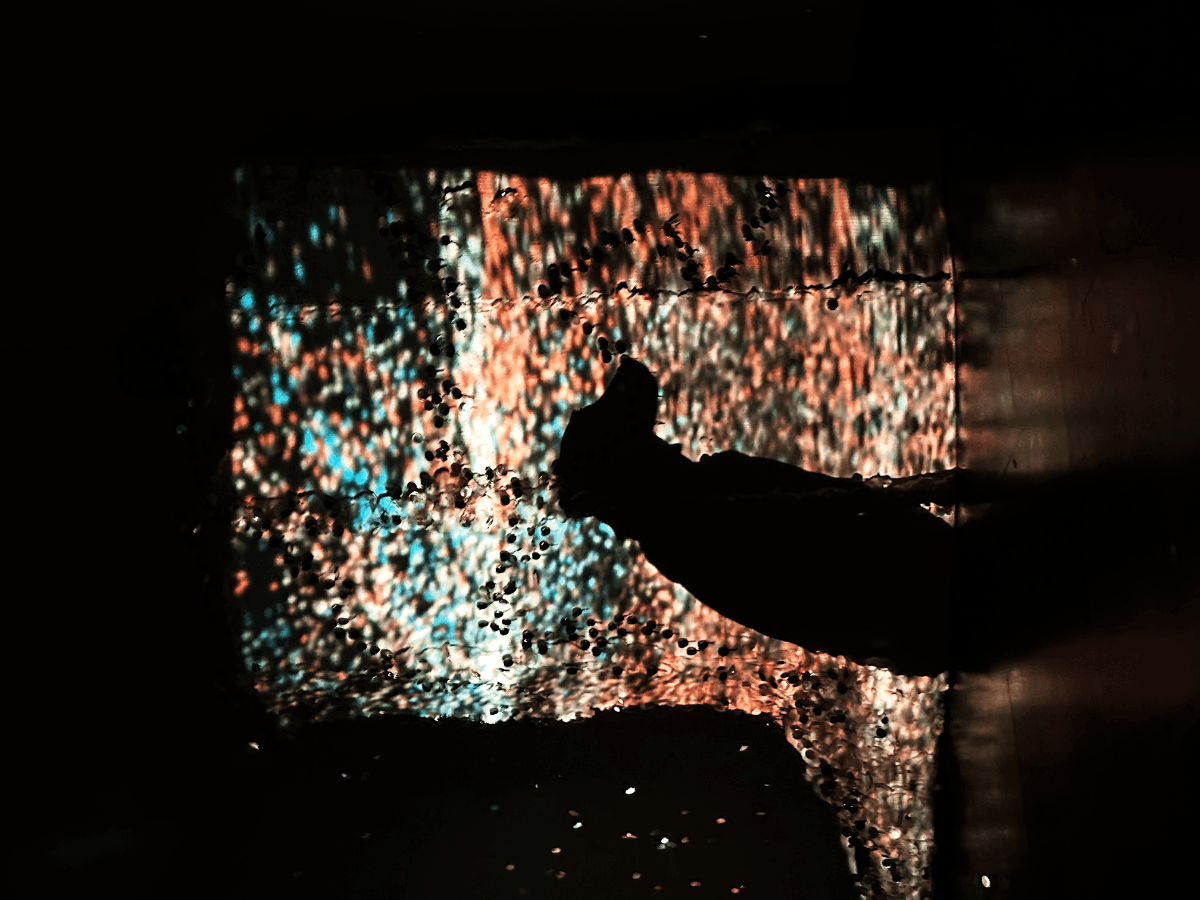

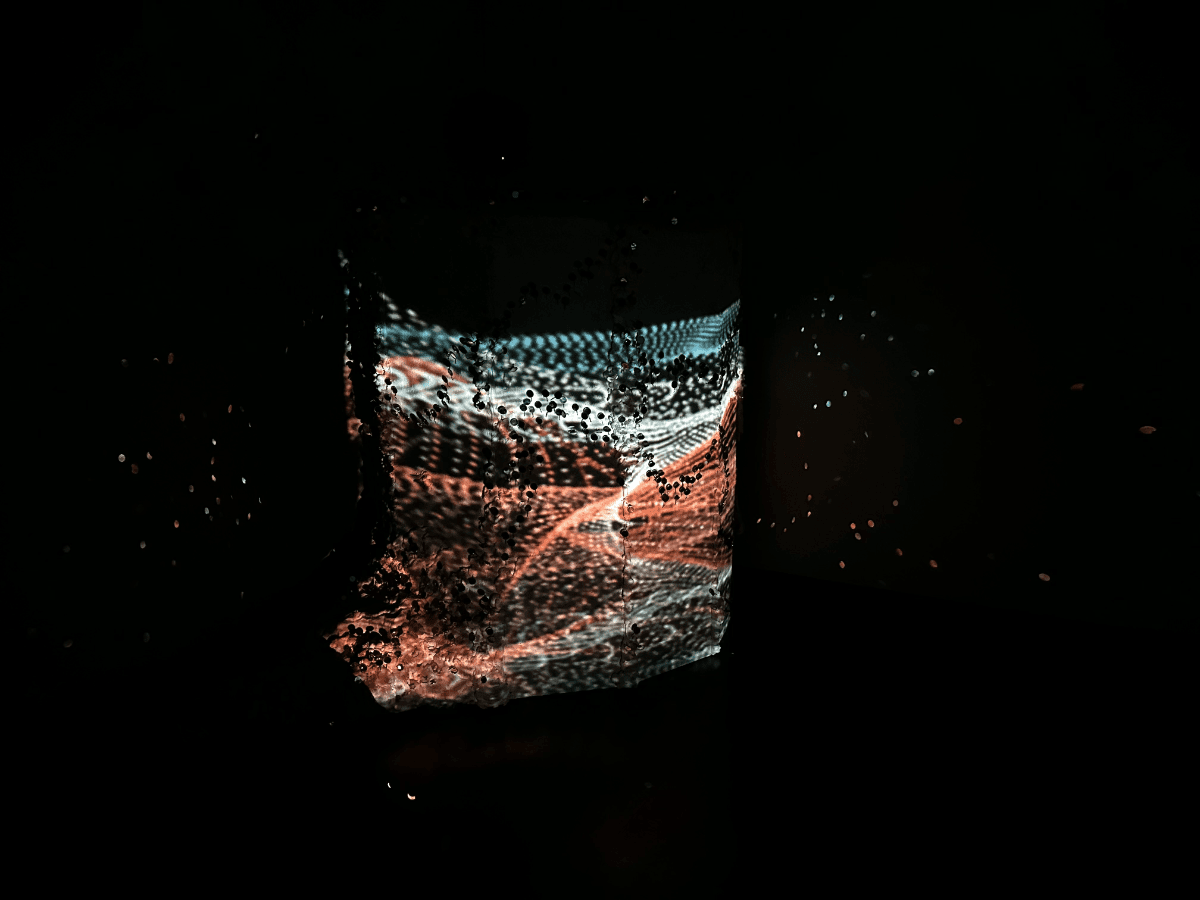

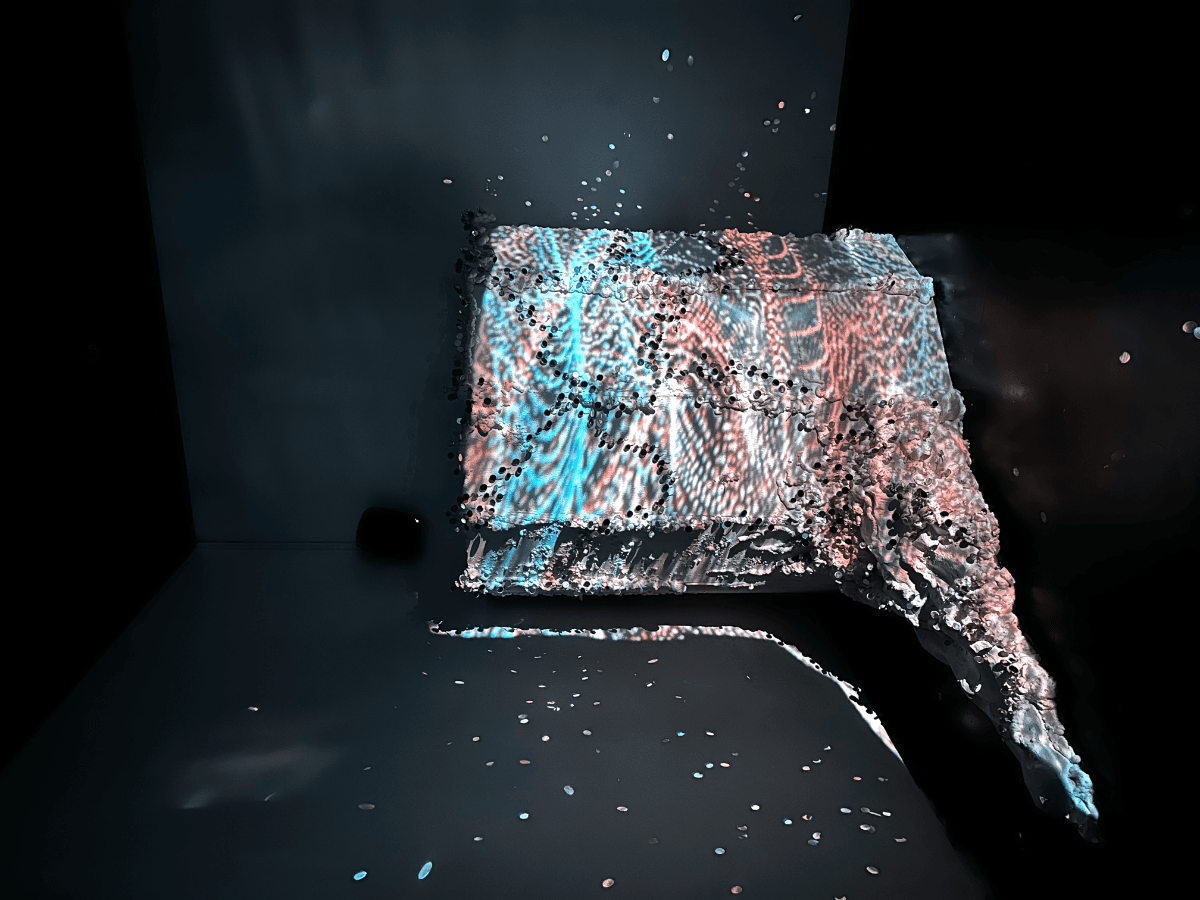

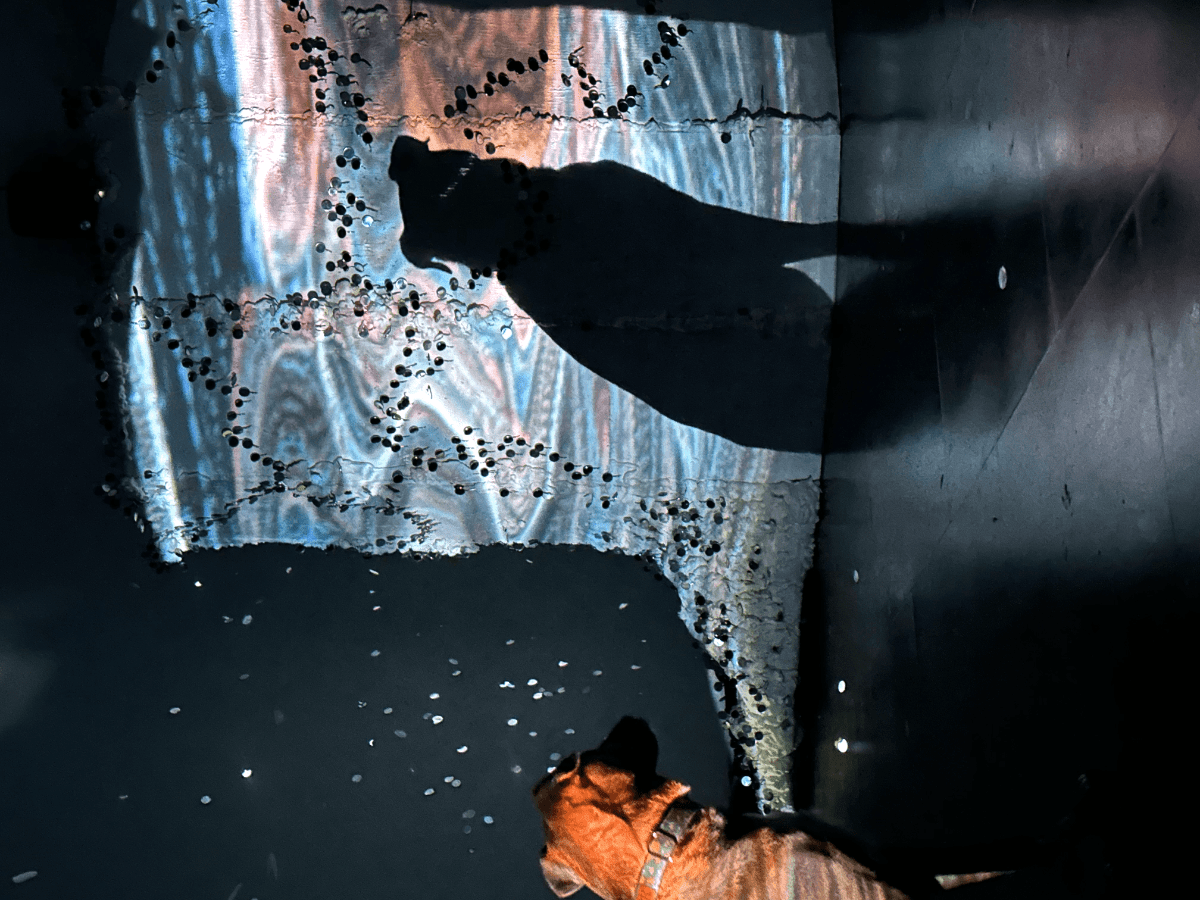

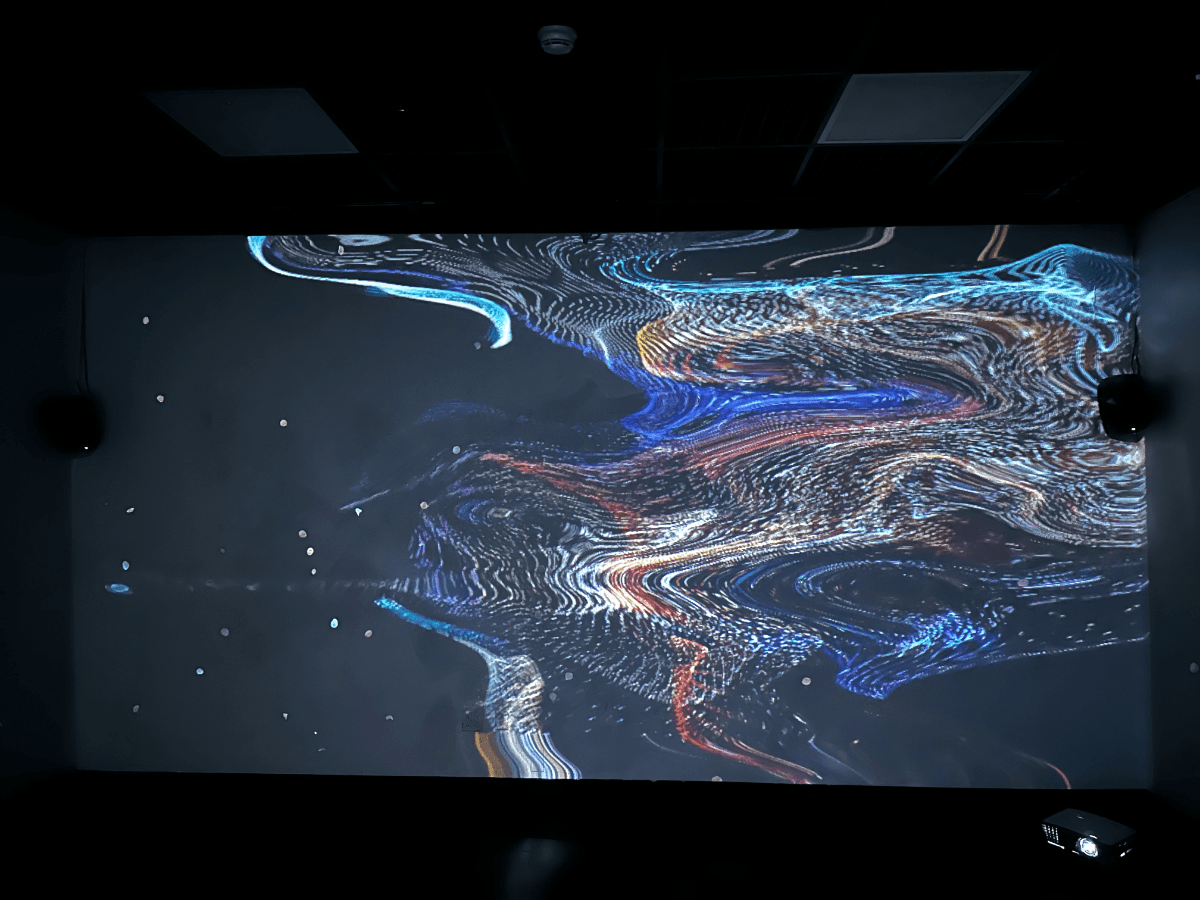

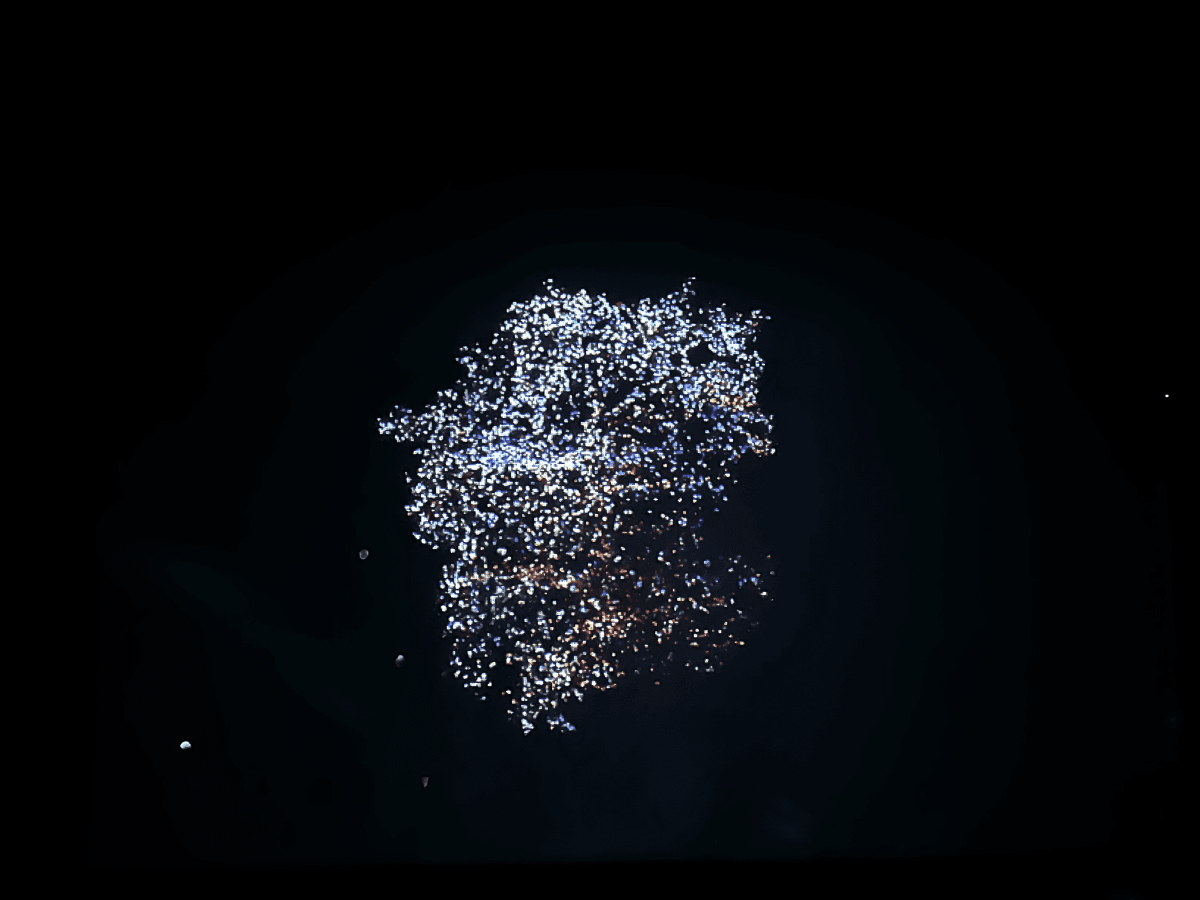

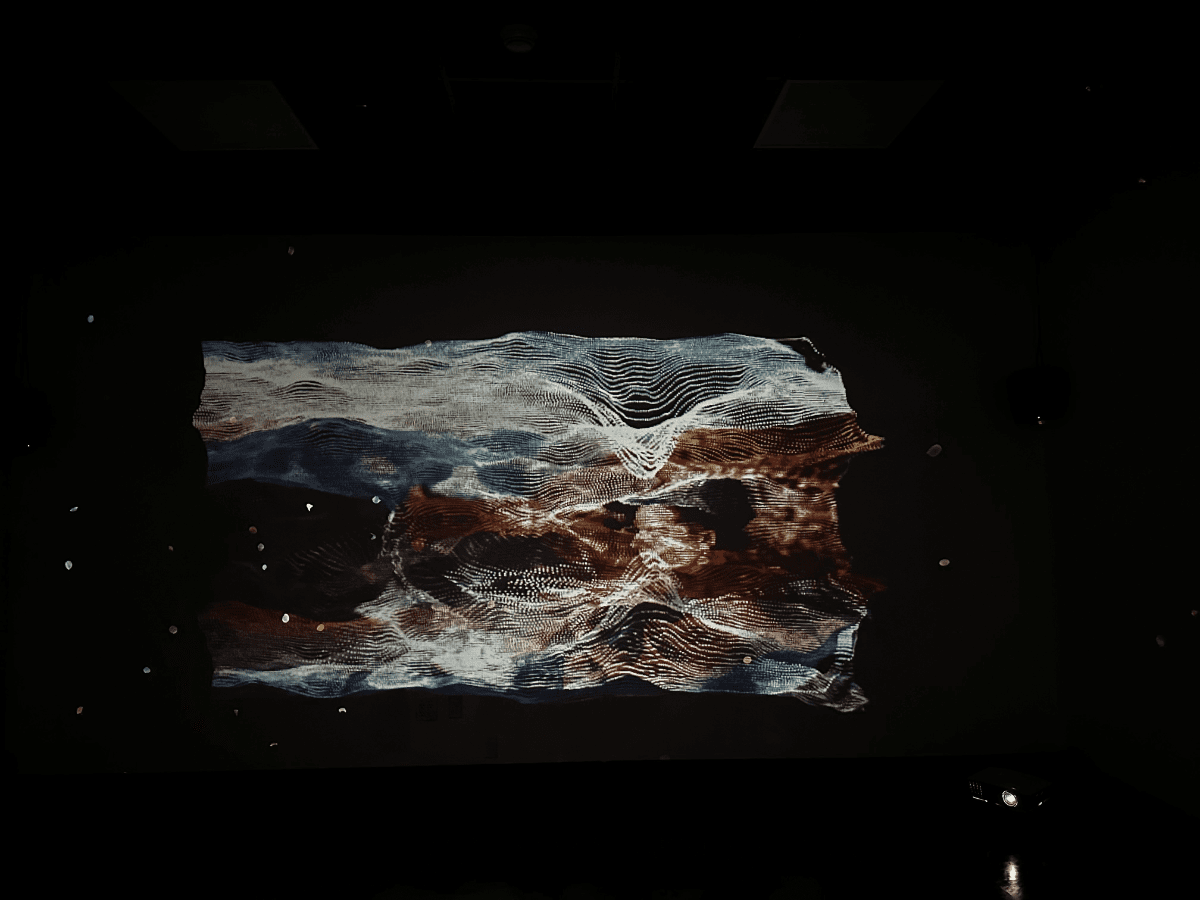

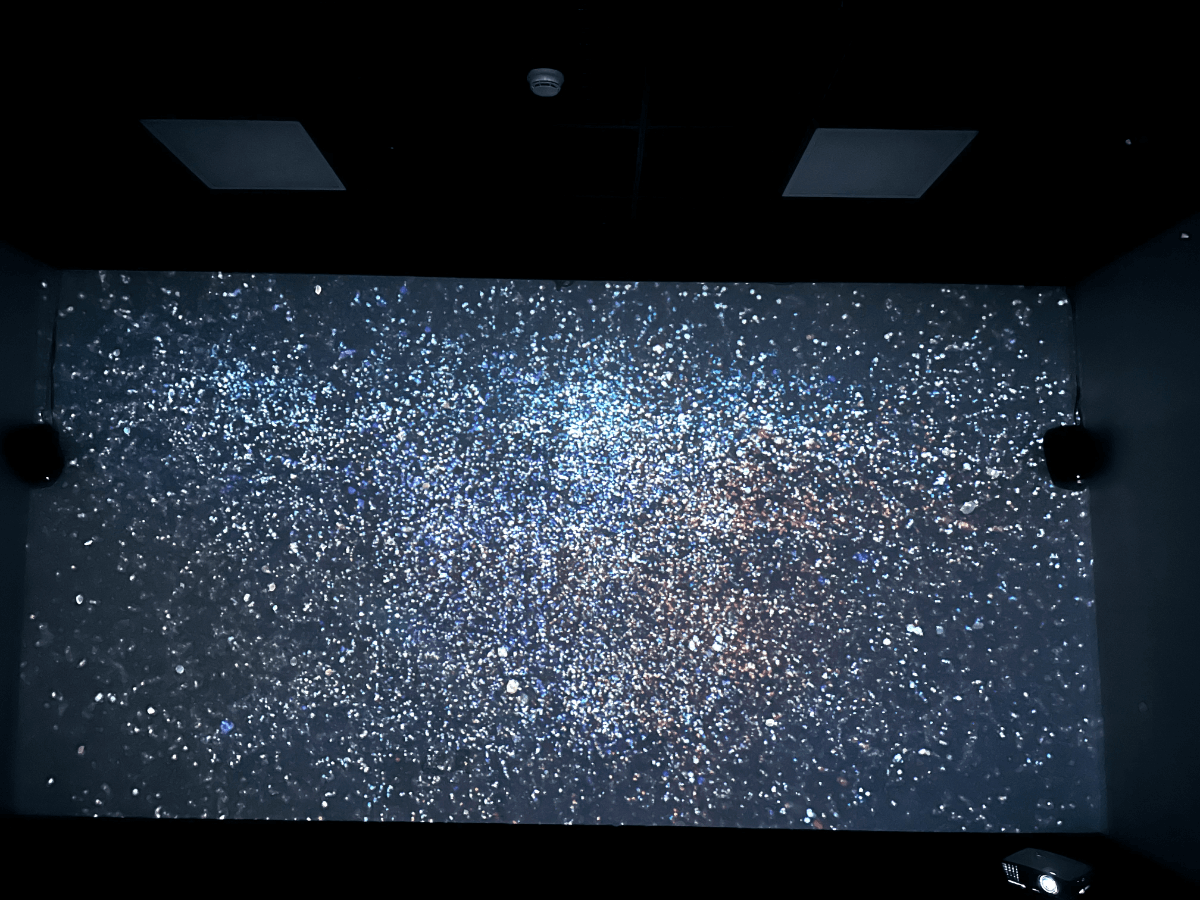

Figure 6.1: The Wave Cave Part 1 – Installation by Luisa Pinzon

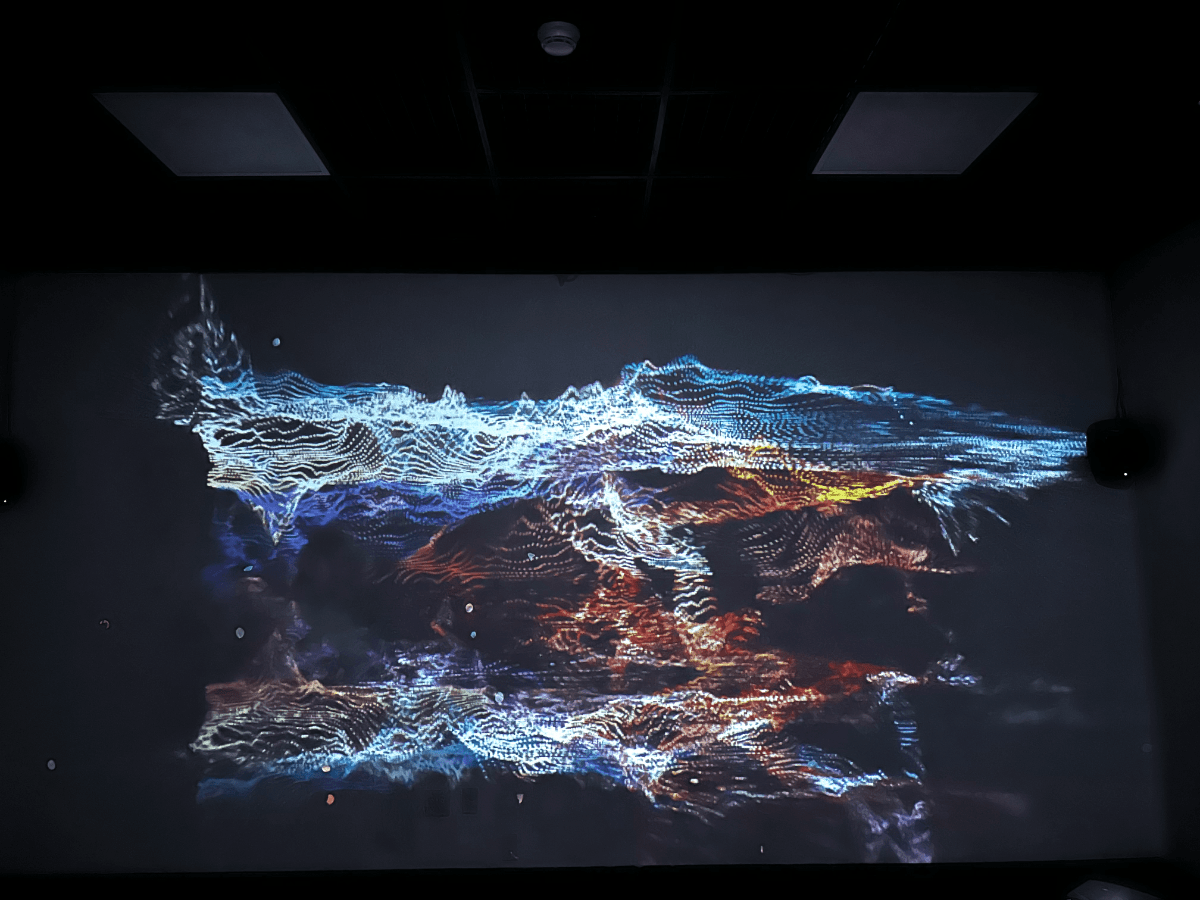

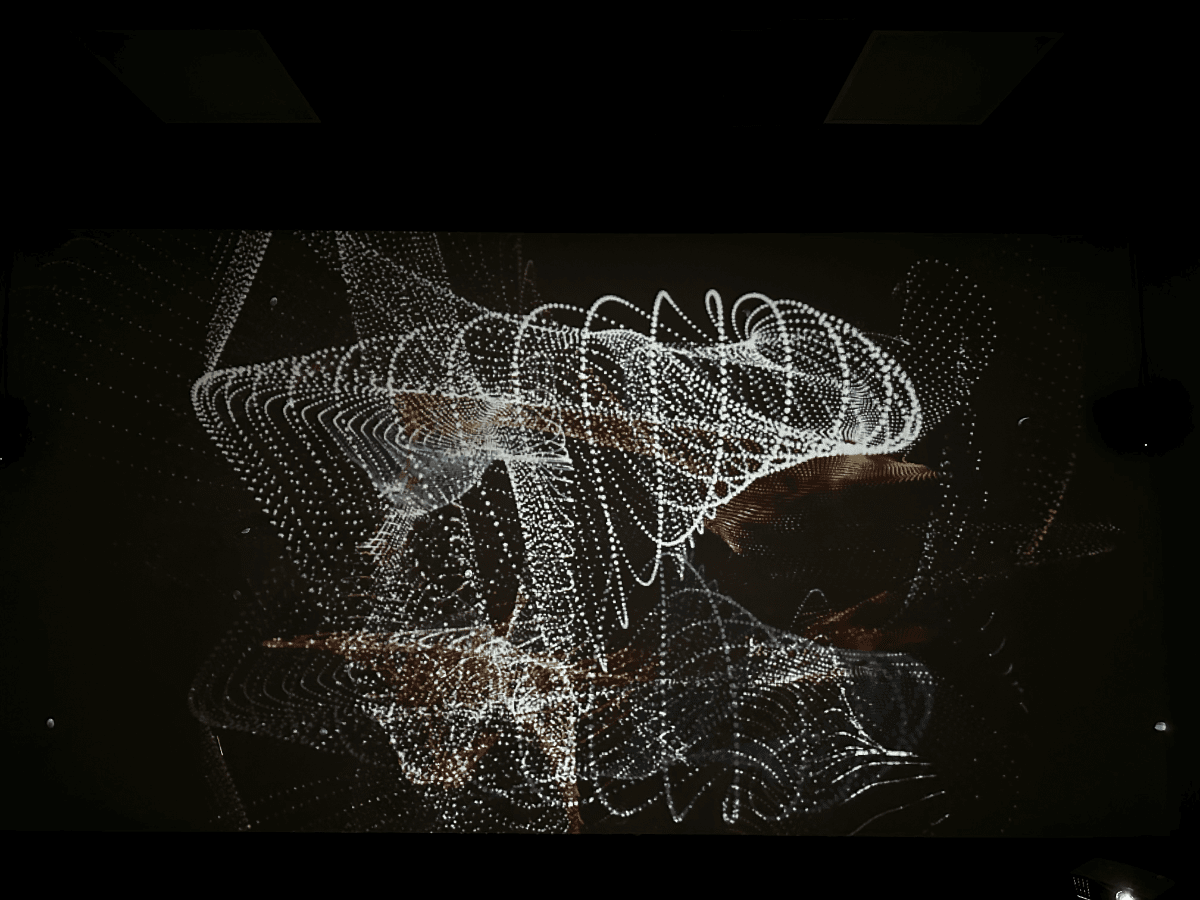

Figure 6.2: Do you know you are not a human? – Render with Ambient Music

Figure 6.3: Do you know you are not a human? – Render with Rhythmic Music

Figure 6.2: Sculpture by J Carlie

Figure 6.3: Projection mapping over the sculpture by Luisa Pinzon

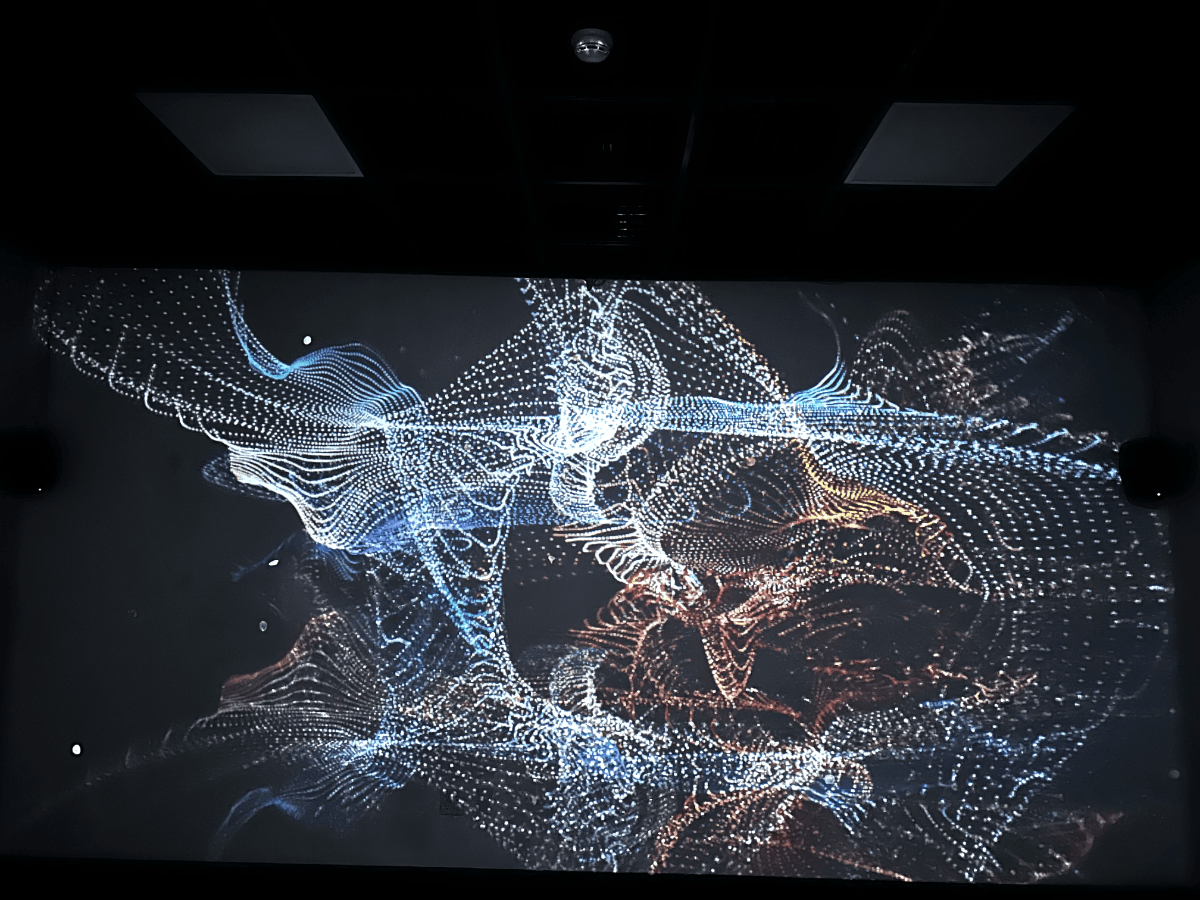

Figure 6.6: Do you know you are not a human? – Performance documentation

Figure 6.4: Do you know you are not a human live performance by J Carlie and Luisa Pinzon

Chapter 1

Introduction

“Doing philosophy is largely a matter of trying to put things together, trying to get the pieces of very large puzzles to make some sense. Good philosophy is opportunistic; it uses whatever information and whatever tools look useful”1

Are all living creatures on the planet connected somehow? It’s a very large puzzle I would like to make sense of. I have always wondered why some humans form such strong connections with other animals, especially our pets. We often find that we understand our pets, and they understand us, better than we understand members of our own species.

Since 2006, I have had a sleep disorder that causes multiple episodes of sleep paralysis throughout the night. This occurs when the mind and brain awaken, but the body remains asleep, resulting in a feeling of paralysis. The person is fully aware of their surroundings but unable to move. Over the years, I have tried various treatments without success. However, the solution came in the most unexpected way—through Aby.

Firstly, Aby’s mere presence while I sleep has a noticeable effect on my body and mind, significantly reducing the frequency of episodes. What is even more remarkable is her ability to sense when something is wrong and find a way to help me, all without any formal training, purely driven by instinct. The first night I had a sleep paralysis episode with her by my side, she immediately woke me up by pushing her paws on my body and crying loudly. This behavior has continued to this day, leaving me questioning how she is capable of sensing something like that.

“Philosophy is among the least corporeal of callings. It is, or can be, a purely mental sort of life. It has no equipment that needs managing, no sites or field stations. There’s nothing wrong with that—the same is true of mathematics and poetry”2

Concept, theory, research, and philosophy have been at the center of my studies at CalArts. It has been fascinating to explore the intersection where technical skills and theory merge with philosophy, as both inform and influence each other in a continuous feedback loop, leading to endless expansion. In my case, concepts always arise after contemplating the philosophy of daily activities and personal feelings. These inquiries then drive research, which in turn generates ideas that eventually manifest as concepts.

This project originated as a way to explore and express these questions about the connections between beings. It makes me wonder how we could visually interpret this form of communication. What would we witness if we could observe the intercommunication of different beings in the air? This artwork uses our physical forms, mine as a human and Aby’s as a dog, as source material to symbolize and convey this connection on a spiritual level. Our spirits merge in space, transcending our physical bodies, and our souls become visible. These souls weave together and remain connected indefinitely. Once connected, they will never break apart. We carry our past relationships into the future, eternally bound within our souls.

Hand in hand with this idea comes the importance of research, reading, and informing my practice. Attempting to address these questions through research is a crucial part of my artistic process. As I investigated the fields that could be relevant to this theme, I discovered a significant overlap among disciplines such as history, philosophy, biology, animal behavior, psychology, and even neurology. To narrow my focus, I drew inspiration for this art piece from two books that have shaped my project: ‘Other Minds’ by Peter Godfrey-Smith and ‘Dog Is Love’ by Clive D. L. Wynne. While I may not be able to provide specific scientific evidence, this artistic pursuit began from a deeply personal perspective and inspiration. I would like to share what I have learned from reading these two authors and how it connects with my current exploration.

This project will combine both the musical and visual arts, utilizing programming software, Digital Audio Workstations (DAW), and digital photography. Technology will serve as the bridge between the tangible world and the domain of imagination. In essence, this thesis will employ technology to express the spirit that connects living creatures through an interactive installation, visualizing the spirit as particles moving in the air.

Turning to the technical aspects, the visual design of the piece was executed using TouchDesigner. Developed by the Toronto-based company Derivative, TouchDesigner is a node-based visual programming language. It enables the creation of real-time interactive media, blending coding and creative skills. This makes it an incredibly intriguing tool in the artistic field, facilitating the creation of performances, installations, and more. Not only did the software provide the main visual component of this project, but it also served as a creative and experimental virtual space. Some of the moving figures that illustrate the following pages began as original photographs and were subsequently processed in TouchDesigner to reflect my personal design.

For sound design and composition, I used Max and Ableton Live. Like TouchDesigner, Max (also known as Max/MSP/Jitter) is a visual programming language. It was created by Cycling ’74, a San Francisco-based company, with a primary focus on music and multimedia, and it has been embraced by composers, performers, software designers, and researchers to create artworks, recordings, performances, and installations. Ableton Live, also known as “Live” or “Ableton,” is a digital audio workstation developed by the German company Ableton. In contrast to many conventional software sequencers, it is designed as an instrument for live performance as well as a tool for composing, recording, arranging, mixing, and mastering.

Chapter 2

Walking into our souls

Other Minds – Peter Godfrey-Smith

Figure .: Other Minds by Peter Godfrey-Smith – Digital illustration made in Touch Designer using the cover of the book, by Luisa Pinzon

As I mentioned before, a good portion of this paper draws inspiration and information from Peter Godfrey-Smith’s research. Godfrey-Smith is a professor of History and Philosophy of Science at the University of Sydney, specializing in the philosophy of biology and philosophy of mind. Additionally, he has particular expertise in octopuses and has contributed his nature photographs and videos to publications such as the New York Times, National Geographic, and The Guardian.

While Godfrey-Smith has written six books and his research in the philosophy of science is extensive and complex, I will draw on some of the information provided in his book “Other Minds: The Octopus, the Sea, and the Deep Origins of Consciousness.”

The first record of life on Earth dates back 3.8 billion years, in the form of single-celled organisms; the entire animal kingdom descends from single-celled organisms called eukaryotes. “Godfrey-Smith shows how unruly clumps of seaborne cells began living together and became capable of sensing, acting, and signaling.”3 Animals emerged from these eukaryotes much later. If all life forms in the animal kingdom, including humans, originate from these individual cells in the ocean, our connection may extend beyond biology to include an energetic and perhaps even spiritual dimension. It’s possible that we communicate through senses that operate beneath our conscious awareness, or that other life forms around us use senses we do not recognize. Octopuses, for instance, use their tentacles for sensing and for wreaking havoc. By that, I mean that in captivity they can propel water to clog the drainage system and escape. How could they know which system to clog and how to escape effectively? What forms of sensing do they have access to that we cannot understand, and what types of sensing do we share with the octopus? “What is it like to have eight tentacles that are so packed with neurons that they virtually ‘think for themselves’?”4 What other methods of sensing, responding, interacting, connecting, and disentangling are manifesting in our surroundings, particularly as I reflect upon these questions in relation to dogs?

The book suggests that we should broaden our understanding of what it means to be conscious and explore how studying and understanding other creatures on Earth can provide insights into ourselves.

I started wondering how my dog senses when I am experiencing sleep paralysis and wakes me up. I never told my dog, “Hey, I sometimes have paralysis and need you to gently nudge me awake.” It was instinctual for my dog, a type of sensing that doesn’t require spoken words. This sparked my curiosity about how our diverse forms of sensing intersected and what would happen if our souls could combine. As an artist, I began exploring how I could make the concept of combined souls tangible through visuals and sound.

To dive deeper into the human-dog relationship, I conducted research and came across the book “Dog Is Love” by Clive D. L. Wynne. In this book, Wynne explains why dogs are remarkable creatures due to their ability to form loving bonds with humans, supported by scientific evidence, and provides practical tips for dog owners.

Dog is Love

Figure .: Dog is Love by Clive D.L Wynne – Digital illustration made in Touch Designer using the cover of the book and a photograph of Aby’s paw, by Luisa Pinzon

Clive D.L. Wynne’s work has been a significant source of inspiration for me. He is a British behavioral scientist who possesses a deep passion for dogs. After dedicating many years to studying the behavior of various species, he eventually merged his fascination for dogs with his professional career and now focuses on researching and teaching about the behavior of these wonderful animals.

His book is important to my research for several reasons. First, though, I must acknowledge that I felt a strong sense of empathy with the author while reading the opening pages. Wynne describes the challenges he encountered when shifting his research focus to dogs after devoting most of his career to studying other animals, which he refers to as “cool animals.” In his words:

“The very notion that animals have emotions has long been anathema to most people of my line of work. The concept of love seems too soppy and imprecise for the hard-nosed business we’re in. Attributing it to dogs also risks anthropomorphizing…Yet I have become convinced that, in this regard at least, a hint of anthropomorphism is permissible, even proper. Acknowledging dogs’ loving nature is the only way to make sense of them” 5

“I have found a tremendous amount of evidence to support the theory of dogs’ love, and very little that undermines it. That’s not soppiness-it’s science”6

He explains how he came to realize that his primary interest lay in the study of animal behavior in the context of their relationship with humans, with dogs being undoubtedly the creatures that share the most fascinating bond with us.

I find this aspect tremendously significant for my work because, like the author, I have also hesitated to embark on this project, fearing that it may be perceived as sentimental, just as he mentioned in his book. I questioned its worthiness of research in a master’s program. However, after reading this book, I am convinced of the importance of acknowledging this information and how conducting research on different beings around us, especially our beloved companions, can profoundly impact our lives and the lives of others. It can help us gain a better understanding of how we coexist and how this coexistence not only affects our present but also shapes our future.

This book challenges the conventional notion that dogs are intelligent solely because of their obedience. Instead, it proposes that dogs are genuinely extraordinary due to their innate capacity to form loving and caring connections with humans. Wynne supports his argument with scientific research, illustrating how this loving and empathetic characteristic in dogs has developed over thousands of years of domestication. Furthermore, the book offers practical guidance for dog owners on nurturing a deeper bond with their furry friends. Ultimately, “Dog Is Love” serves as a tribute to the exceptional and unparalleled relationship between dogs and humans.

My Dog, my ancestors and me

It was the peak of the COVID pandemic in Colombia on June 27, 2020, when I received a dog to foster. She was one of those now known as “pandemic dogs.” I noticed a fearful expression in her eyes, and she remained quiet and suspicious of her surroundings. However, from the moment we made eye contact, a strong connection formed between us. She had been found on a highway in the pouring rain and had spent her first two years of life in a modest rescue center in the southern part of Bogotá. It was likely that she had never experienced the comfort of sleeping in a warm and cozy space. The joy she felt from sleeping in a warm bed next to a human was evident.

She constantly observed me—my movements, my voice, and even my stillness. The look in her eyes has been described by many as remarkable and intriguing. It’s as if she comprehends everything, as if she can gaze into my soul. Later, when I discovered her ability to sense my sleep paralysis episodes, as mentioned in Chapter 1, it confirmed the impressive and sometimes unexplainable bond she has with me. This bond continues to grow stronger each day. Like many other pet owners, I find myself wondering about the emotions and thoughts of our beloved furry companions.

The question of whether animals have feelings is one of the most common discussions and debates in human society. Wynne provides an insightful description of dog behavior, which sheds light on this topic.

“Dogs have an exaggerated, ebullient, perhaps even excessive capacity to form affectionate relationships with members of other species. This capacity is so great that, if we saw it in one of our own kind, we would consider it quite strange-pathological, even. In my scientific writing, where I am obliged to use technical language, I call this abnormal behavior hyper sociability. But as a dog lover who cares deeply about animals and their welfare, I see absolutely no reason we shouldn’t just call it love.”7

I personally like to think my dog loves me and that dogs, in general, have a profound love for their human companions. This love is what allows us to establish deep connections on multiple levels. The concept of love, in itself, can often provide sufficient answers to many of my personal inquiries. However, if we dig further into this feeling, regardless of the terminology we choose to describe it, my artistic perspective explains it as a merging of our souls and spirits.

Entering the realm of spirits and souls allows this project’s research to go even deeper. This involves exploring subjects such as our ancestors, the land, and the ocean. We can explore how the souls of those who previously inhabited earthly bodies have departed, nourishing the land as soil, water, and air. The new inhabitants of the Earth then receive these elements in the form of food, rain, and breath. This concept ties back to the idea of a connected evolution, as explained by Peter Godfrey-Smith. It highlights how our existence originated in the ocean and how, in some way, we are all interconnected, tracing our roots back to the same source. I am convinced that all living creatures in this world share, and will continue to share, a bond that connects them to their origins and ancestors, regardless of the shapes and forms they inhabit today. This connection remains present within our spirits and souls.

Chapter 3

Concept to Tangible art

As discussed in Peter Godfrey-Smith’s book, evolution began with single-celled organisms in the ocean. This concept led me to contemplate small objects floating in water, moving together in a cohesive manner. It made me reflect on the notion of multiple particles interacting and evolving into new forms. This idea became the starting point for my project, inspiring me to experiment and create something based on this concept.

Transforming an idea into a tangible project and refining it around a central concept is always a process of experimentation. There are various approaches to this process, and having access to different tools plays a crucial role. In my case, with a background in sound engineering and technology, creative software tools have always served as my experimental platform. Artistic software offers a wide range of options, but when attempting to ground philosophical concepts, the skill of creative coding has proven invaluable. Coding frameworks like Processing and p5.js represent, to me, a blank canvas, or an empty notebook ready to bring any idea to life through logical thinking.

This project went through various stages of experimentation. I initially used creative coding tools and later adapted the most concrete ideas to other programming languages that offer more advanced capabilities for processing sound and visuals. Specifically, I employed TouchDesigner and Max/MSP. A glimpse into the creative process is outlined in the subsequent sections of this chapter.

Creative coding

When creating art through coding, the artist must provide precise instructions to the coding environment being utilized. Every visual outcome is determined by these instructions, which can be quite distinct from the experience of drawing on a blank canvas. When creating with our own hands, the results can vary based on various factors, including how our bodies inform and influence the work. In contrast, the creative coding process is primarily a mental one, informed by artistic references, past experiences, and even desired bodily movements. However, when writing and executing the code, the only thing that truly matters is how the mind communicates with the software. This dynamic gives rise to an almost infinite range of possibilities while maintaining a fascinating level of control over the artwork. Every desired outcome, including dimensions, colors, shapes, positions, and behaviors, must be explicitly instructed.

Visual creative coding has always been closely associated with working with shapes, their movements, and their interactions with one another. Figure 3.1 showcases the movement of a simple shape created using the p5.js framework.

Figure 3.1: Circle movement programmed by Luisa Pinzon using the p5.js framework

By tracing the movement of this simple shape, fascinating visual results can be achieved, as demonstrated in Figure 3.2. The circular movement introduces the concept of randomness, which is a widely used tool in the field of digital arts. Randomness itself is a vast term, but for now, we can define it as the selection of information without the conscious decision of the artist. While the goal is not to have complete control over the final result, the artist provides instructions to the program on how to utilize the randomness tool. In the case of Figure 3.2, randomness is incorporated into the direction of movement. The position of the circle varies with each frame, and the artist has limited control over it. As a result, the circle leaves a trace behind, drawing a new circle on top of the previous one with every movement. This type of movement is commonly referred to as “The Random Walker” within the creative coding community. On the left side of Figure 3.2, we observe a portion of the movement as soon as the first circle is drawn, while on the right side, we can see different outcomes after running the code through multiple iterations, allowing the circles to be drawn in random positions over time. This process produces highly intriguing and visually captivating results.

Figure 3.2: The Random Walker programmed by Luisa Pinzon using the p5.js framework
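As a rough illustration, the random walker described above could be sketched in plain Python instead of p5.js. The function name `random_walk`, the canvas size, and the step size are assumptions made for this example; they are not taken from the original sketch. The function only computes where each circle would be drawn and leaves the drawing itself out.

```python
import random

def random_walk(steps, width=400, height=400, step_size=5, seed=None):
    """Return the list of (x, y) centers visited by a random walker."""
    rng = random.Random(seed)
    x, y = width / 2, height / 2  # start in the middle of the canvas
    trail = [(x, y)]
    for _ in range(steps):
        # The artist fixes the step size; chance picks the direction.
        x += rng.choice((-step_size, 0, step_size))
        y += rng.choice((-step_size, 0, step_size))
        # Keep the walker inside the canvas.
        x = min(max(x, 0), width)
        y = min(max(y, 0), height)
        trail.append((x, y))
    return trail

trail = random_walk(steps=1000, seed=7)
print(len(trail), "circles drawn; last center:", trail[-1])
```

Drawing one circle at every position in `trail`, without clearing the canvas, would leave the kind of trace shown in the figure. Fixing the seed makes a run repeatable, while leaving it as `None` gives a different drawing every time.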

What I find fascinating about creating art in this manner is the resemblance it bears to the behaviors of living organisms in real life. The artist assumes the role of an entity that decides and instructs how their creations should behave, a role that can be likened to that of a god, the universe’s energy, or any other supreme entity in which many humans believe. The shapes themselves become the artist’s creations, each with the potential to be entirely unique or replicated from a previous one. Furthermore, every parameter of these shapes is meticulously defined to determine how they should be created and exist within the canvas.

This process demonstrates how a combination of mathematical and physics principles can be used to construct digital worlds that emulate natural systems. In the realm of computer graphics, a widely employed technique for simulating natural phenomena is known as Particle Systems.

Particle systems

Building upon the notion of particles in motion within the ocean, I chose to investigate the concept of Particle Systems, a widely practiced technique in computer graphics and creative coding environments. The documentation and theory surrounding particle systems are extensive and can cover mathematically intricate territory. However, it is also one of the topics frequently covered in the initial stages of learning creative coding due to its visually captivating results. Moreover, particle systems involve fundamental coding skills while offering opportunities to explore more intriguing behaviors.

“A particle system is a collection of many many minute particles that together represent a fuzzy object. Over a period of time, particles are generated into a system, move and change from within the system, and die from the system.” 8

I found this quote in Daniel Shiffman’s book, The Nature of Code, and it resonated with me. Shiffman has a unique approach to teaching coding skills, incorporating topics related to natural phenomena. In his book, he encourages readers to create their own projects based on natural ecosystems.

According to Shiffman, particle systems are a combination of objects that interact with each other, allowing users to generate an almost infinite number of behaviors. These behaviors include the creation of objects, their lifespan before they expire, their movement direction, speed, angle, and color, and how they interact with one another within the canvas, among other factors.

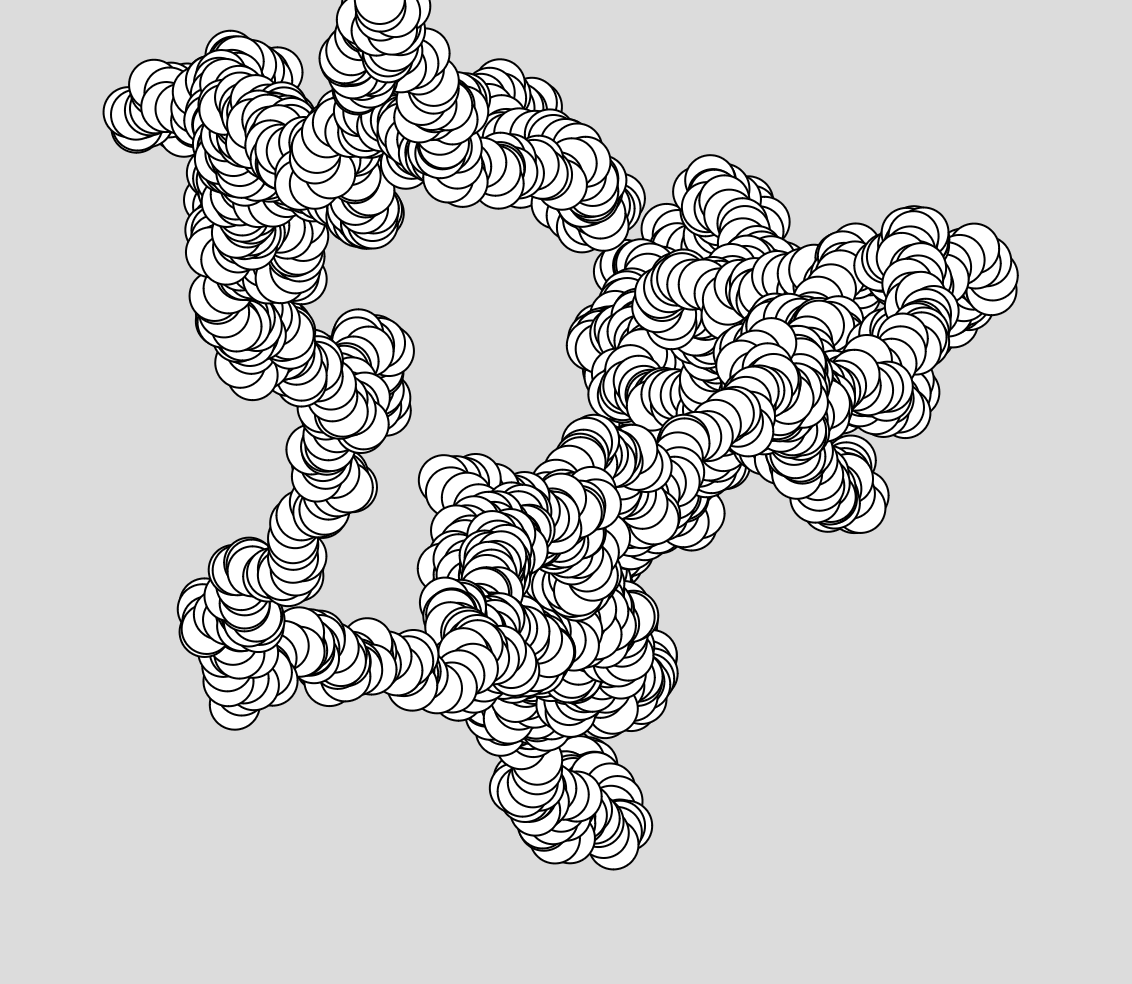

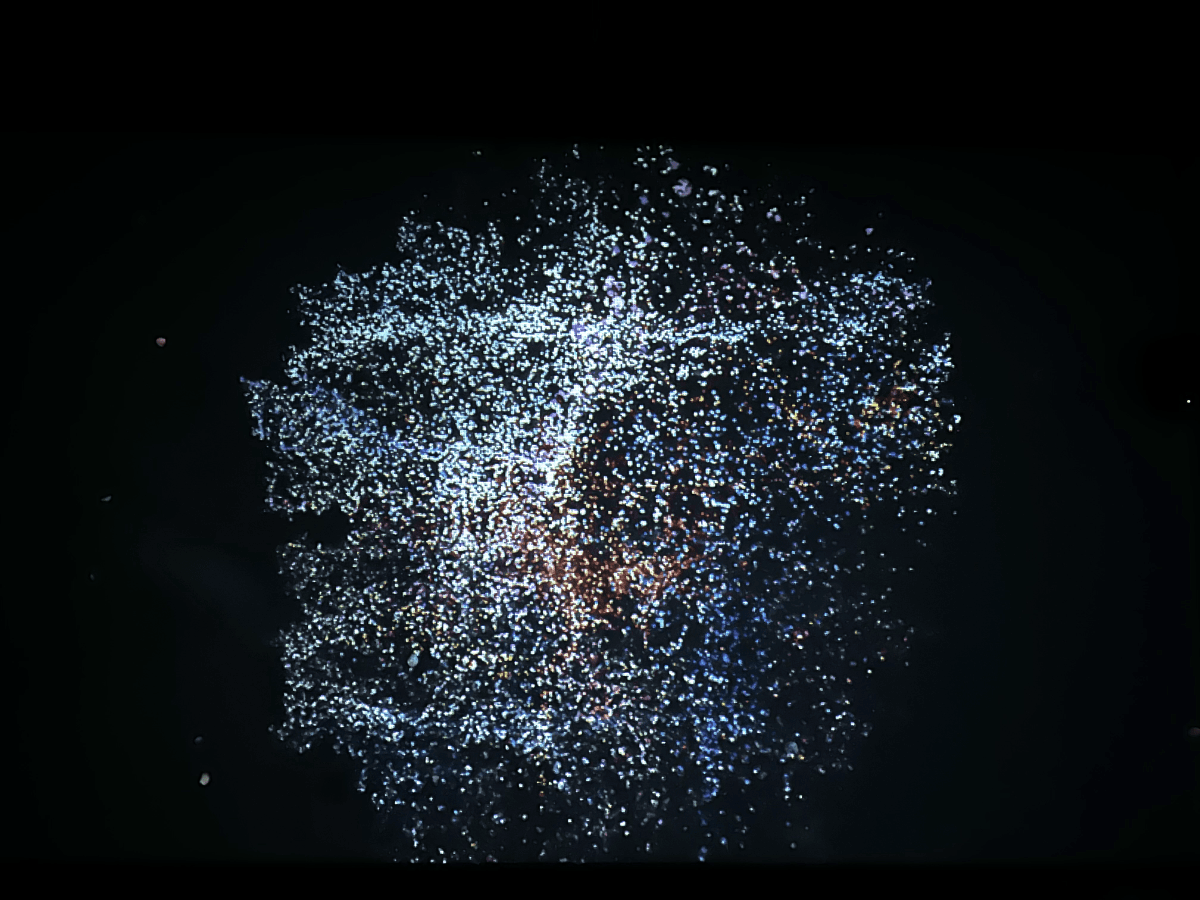

Figure 3.3: Particle System programmed by Luisa Pinzon using openFrameworks
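The life cycle described in the quote (particles are generated, move and change, and die) can be sketched in a few lines of Python. This is a minimal illustration and not the openFrameworks code behind the figure: the names `Particle`, `spawn`, and `step`, the velocity range, and the lifespan range are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass

@dataclass
class Particle:
    x: float
    y: float
    vx: float
    vy: float
    lifespan: int  # frames left before the particle dies

def spawn(rng):
    """Create a particle at the origin with a random velocity and lifespan."""
    return Particle(0.0, 0.0, rng.uniform(-1, 1), rng.uniform(-1, 1),
                    rng.randint(20, 60))

def step(particles, rng):
    """Advance one frame: move, age, and replace every particle that died."""
    next_frame = []
    for p in particles:
        p.x += p.vx
        p.y += p.vy
        p.lifespan -= 1
        # A dead particle is replaced by a newly born one.
        next_frame.append(p if p.lifespan > 0 else spawn(rng))
    return next_frame

rng = random.Random(3)
system = [spawn(rng) for _ in range(100)]
for _ in range(200):
    system = step(system, rng)
```

Replacing each dead particle with a new one keeps the population constant. Other rules, such as spawning two particles per death or none at all, would change how the system grows or fades.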

When I first began working on building a Particle System, I was captivated by the idea that each particle represented an individual in real life, with the potential for different behaviors as they interacted with one another. For instance, I discovered that I could code a motion where a new particle would be created when another particle died or disappeared. This sparked numerous questions about the experiences of real-life beings that could be represented through these particles. How do different types of particles interact? Do they merge, repel each other, or create new particles? Do they change direction? The element of randomness further enhanced the possibilities, resulting in a more intriguing outcome. As an artist, this gave me a multitude of ways to combine personal experiences, inquiries, and research into a visually generative artwork.

Drawing from the concept of particle systems and the interactions of living beings, particularly focusing on the relationship I share with my dog, I wanted to move beyond using geometric shapes in my system and instead utilize something that could better represent us. This led me to employ pixels from photographs as the particles in my system.

The reason behind this choice is that photography functions as a means of representing our physical forms as humans and dogs. By extracting pixels from these images, I aim to represent our souls and spirits. Additionally, the photograph itself serves as a collection of data, as I have documented various life experiences through photography over the years. There is no better way to illustrate our memories and the connection our spirits have formed over the past two years than by incorporating this data into the artwork.

Data recollection

The process of data collection holds significant importance in technology, particularly with the advancements in AI and Machine Learning. Data is utilized to inform various systems, train them, store information, and more. Creating a dataset is influenced by a range of contextual factors, including cultural, political, social, and economic aspects. Similar to framing an image, constructing a dataset involves selecting and representing specific aspects of the world that are relevant to the research question at hand.

However, in this instance, I won’t be using data in the traditional sense of information or documentation. Instead, I will be employing it as a conceptual element. By using photographs and sound recordings from our journey, I will treat them as samples and integrate them into the creation of a new piece. These multimedia elements will operate as a form of data within the artistic context, allowing for the exploration and expression of our shared experiences.

Photographs

The concept of using photographs goes beyond just capturing a framed image. A photograph encapsulates a fleeting moment in time, a moment that has already passed by the time we can view the image. Without the camera and its technological capabilities, that moment would reside solely in our memories, minds, and spirits. A photograph has the ability to transcend time, preserving a moment from the past and carrying it into the future. It can even extend beyond our own lifetimes, as others may encounter and view the image long after we are gone, particularly if it is printed.

Aby and I have been fortunate to embark on numerous adventures together, traversing various locations in Colombia and the United States. Throughout these experiences, I have had the privilege of capturing most of them through my lens, immortalizing them as images. Each pixel within these photographs holds a glimpse into the life we have shared. Similarly, every pixel within your own photographs, whether printed or stored on devices, encapsulates a glimpse of your own lived experiences and memories with others. These memories reside within the recesses of your mind and soul, represented by the smallest units of data that a computer can interpret. By extracting and reinterpreting these pixels as particles, I aim to merge them into a new form, creating a visual representation of our interconnected journey.

Sound samples

Sound recording, like photography, has the power to capture moments that have already passed, preserving them for future listening. Sound, in its diverse forms, allows room for our imagination to fill in the gaps, much like reading a book where we evoke images in our minds as we listen or read. Sound is a fascinating and incredibly varied medium.

Continuing with the concept of pixels becoming particles, sound recordings are transformed into instrument samples. Field recordings of various activities have been made, capturing snippets of sound that are later used to create instruments. These instruments, when combined, form a musical composition. Thus, the sound generated in this installation originates from our personal life experiences and is transformed into a cohesive musical piece, mirroring the merging of visual pixel particles.

In the following chapters, I will explore the technical aspects and provide a detailed description of my approach to this project, outlining the necessary steps to make it a reality.

Chapter 4

Do you know you are not a human? - Visual Design

Touch Designer is a powerful visual programming software that offers a wide range of tools for manipulating visual elements in unique and intricate ways. Its ability to create audio-reactive visual movements makes it well-suited for audiovisual performances, installations, and other creative projects. While there are alternative software and coding languages that can achieve similar results, I chose to utilize Touch Designer due to its specific features and capabilities.

The process of converting the data from photographs into particles could potentially be accomplished using different software or coding languages, each with its own approach and workflow. However, I found that Touch Designer provided the ideal framework for realizing my artistic vision and implementing the desired visual effects.

By utilizing Touch Designer, I was able to explore the potential of the software’s tools and access its audio-reactive capabilities to create a cohesive integration between the visual and sonic aspects of the project. This allowed for a seamless blending of the pixel particles derived from photographs with the audio samples, resulting in a unified and immersive audiovisual experience.

Introduction to Touch Designer

First, let’s briefly introduce TouchDesigner. TouchDesigner is a node-based programming language that provides a visual interface for creating interactive and real-time multimedia projects. It allows users to connect nodes together in a network to build complex systems and workflows.

Nodes in Touch Designer represent different functions and operations, and they can be connected in various ways to define the flow of data and control. Each node has specific parameters and inputs/outputs that can be adjusted and linked to other nodes. This visual approach to programming makes it accessible to artists and designers who may not have extensive coding experience.

In addition to the built-in nodes, Touch Designer supports scripting using Python, GLSL (OpenGL Shading Language), and other programming languages. This allows for more advanced customization and integration with external systems.

Overall, Touch Designer provides a flexible and powerful environment for creating interactive multimedia projects, including audiovisual performances, installations, interactive displays, and more. Its node-based approach and extensibility with scripting make it a versatile tool for artists, designers, and developers alike.

Simple Network

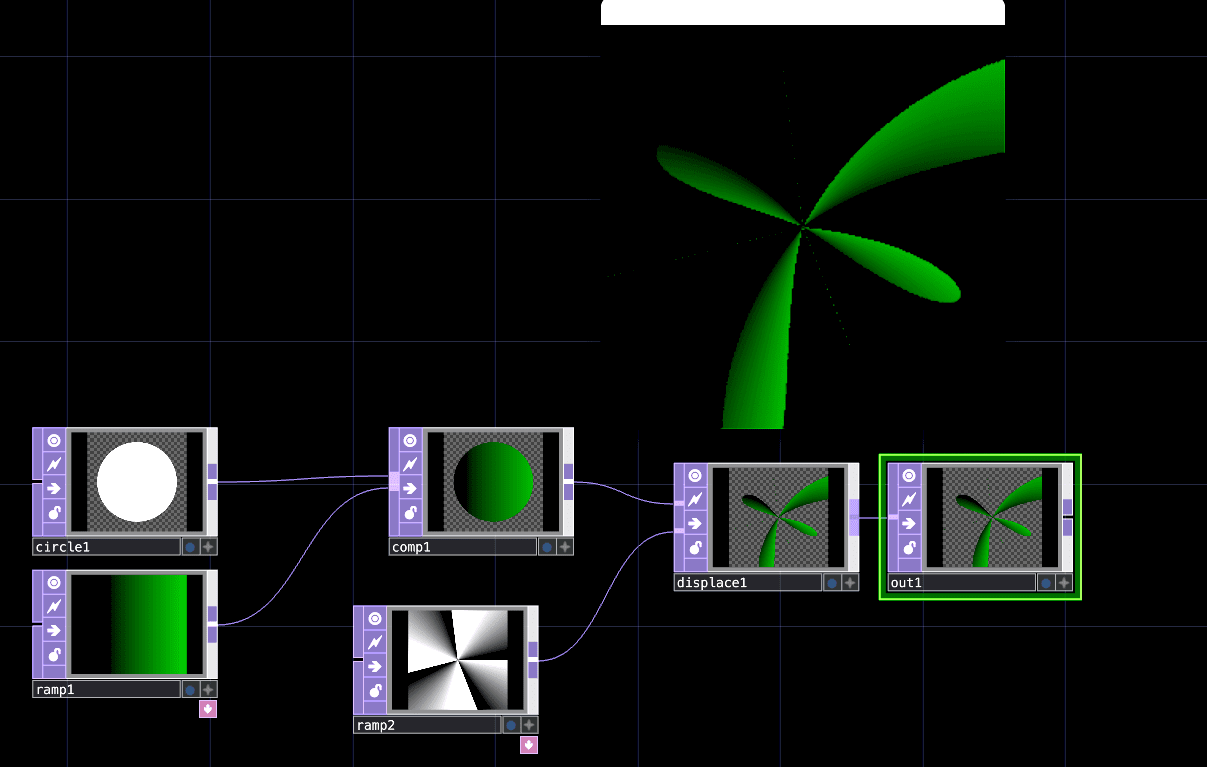

Figure 4.1: Simple TouchDesigner Network

Figure 4.1 shows a simple TouchDesigner network. The term “network” refers to the interconnected boxes and lines in the image. As I mentioned before, TouchDesigner is a node-based visual programming language. The boxes in the network are called operators, and each family of operators manipulates a different type of data. The purple operators shown in the image are called TOPs (Texture Operators), and they manipulate 2D images. The network is read from left to right, and the image is modified by each operator it passes through along the way.

This network uses a circle to create a new shape. First, a circle is created, and a ramp TOP is applied. Although the circle is intuitive to understand, the ramp is a good example to start recognizing how TouchDesigner deals with colors and positions. Colors in TouchDesigner are represented on a scale from 0 to 1, where 0 corresponds to black and 1 corresponds to white. The combination of these values in RGBA creates different colors. A ramp allows the creation of a positional map using colors and assigns it to a grid. The circle is then combined with the ramp using a composite operator. This operation multiplies each pixel of the circle with each corresponding pixel of a color ramp that transitions from black to green. In this way, the circle is colorized using the colors provided by the ramp, at the same positional locations.
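The multiplication described above can be imitated outside TouchDesigner with nested Python lists standing in for textures. This is only a sketch of the idea, not of TouchDesigner’s implementation; the helper names `circle_mask`, `green_ramp`, and `multiply` are hypothetical, and the ramp is assumed to run horizontally from left to right.

```python
def circle_mask(width, height, radius):
    """White (1.0) inside a centered circle, black (0.0) outside."""
    cx, cy = (width - 1) / 2, (height - 1) / 2
    return [[1.0 if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2 else 0.0
             for x in range(width)] for y in range(height)]

def green_ramp(width, height):
    """Horizontal ramp from black (0, 0, 0) on the left to green (0, 1, 0) on the right."""
    return [[(0.0, x / (width - 1), 0.0) for x in range(width)]
            for _ in range(height)]

def multiply(mask, ramp):
    """Multiply each mask pixel with the ramp pixel at the same location."""
    return [[tuple(m * c for c in rgb) for m, rgb in zip(mask_row, ramp_row)]
            for mask_row, ramp_row in zip(mask, ramp)]

image = multiply(circle_mask(9, 9, 3), green_ramp(9, 9))
```

Because black is 0, every pixel outside the circle stays black after the multiplication, while every pixel inside the circle (value 1) takes on the ramp color at that position.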

Next, an operator called “displace” is used to move the position of each pixel of the circle based on the values of another ramp. This new ramp also provides values ranging from 0 to 1, but this time assigned to different positions than the first ramp. The “displace” operator uses the first image assigned to the top input, which, in this case, is the green circle. It then utilizes the operator assigned to the second input as positional information. This means that the pixels inside the circle will move to the positions specified by the ramp.

If you are not familiar with this software, this might not make a lot of sense. It’s important to note that I’m not creating a user manual for TouchDesigner here. However, I believe it’s important to provide a small example to demonstrate some of the uses of this software. TouchDesigner is a highly versatile tool that enhances logical thinking while creating art. Networks can become very complex, and working with them requires strong organizational coding skills.

UV Map and Instancing

A UV map is a texture map that instructs a shader on how to arrange pixels within an image. In TouchDesigner, the UV map utilizes the red, green, and blue channels to convey positional information. The red channel represents the X positions, the green channel represents the Y positions, and the blue channel represents the Z positions.

The coordinates for each circle in the network are determined by this UV map. TouchDesigner orients objects using the X, Y, and Z axes, where the center of the canvas corresponds to 0, 0, 0 respectively. In a 2D or TOP (Texture Operator) texture, the values range from -0.5 to 0.5. For example, along the X axis, a position of -0.5 would be located far to the left, while a position of 0.5 would be located far to the right. This principle applies similarly to the Y axis.

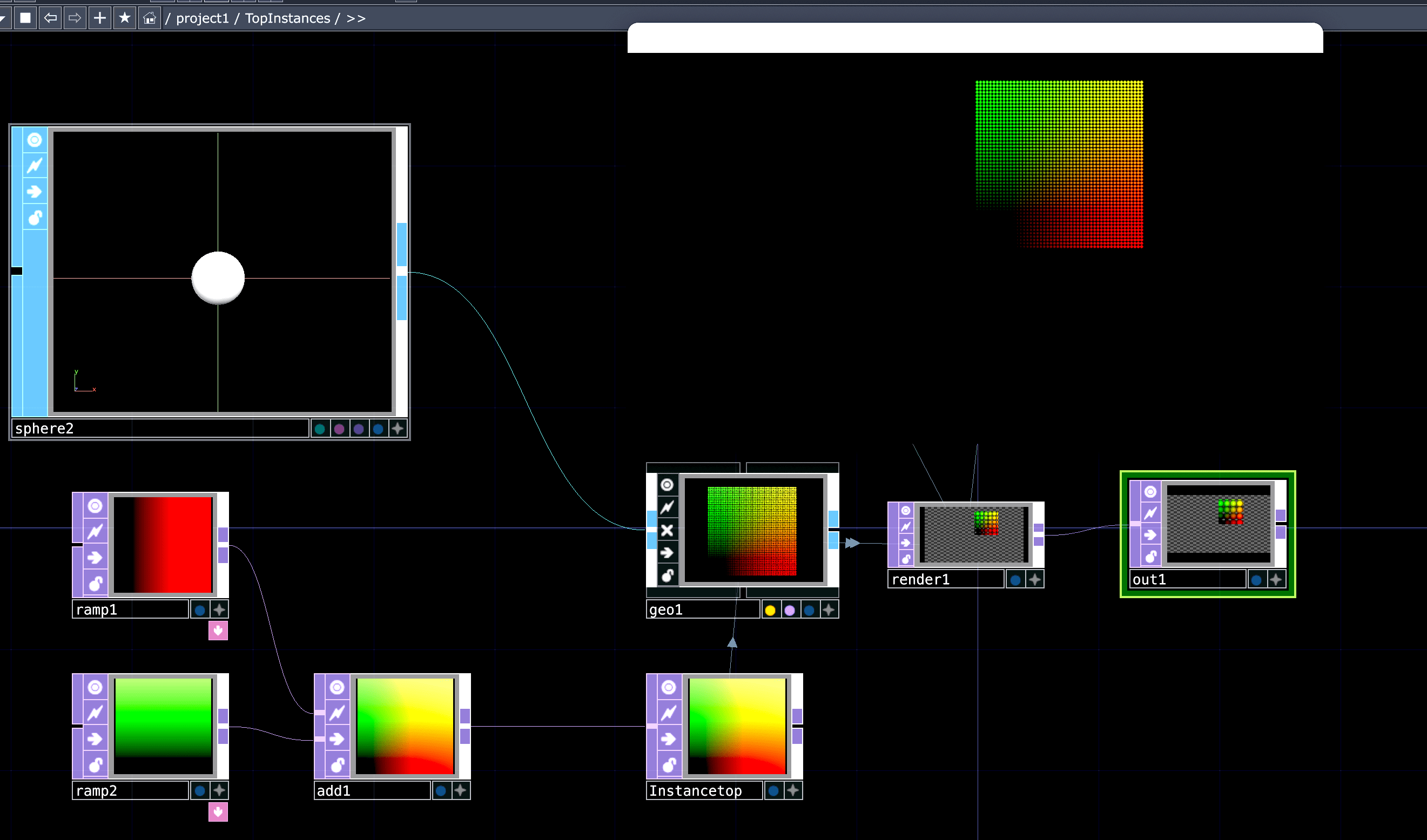

Figure 4.2: Instancing in TouchDesigner

Figure 4.2 illustrates a 3D network utilizing 3D operators called SOPs (Surface Operators). In this network, a circle is used as the main shape, but unlike the previous example, this one exists in a 3D form. The circle on the left is the only shape provided within the entire network, so how is it copied and placed in different locations? This is achieved through a powerful feature offered by TouchDesigner called Instancing. Instances are copies of a 3D geometry object, and each instance can have its own set of information, including position in the X, Y, and Z axes, color, rotation, and more. The circles are arranged using a UV Map, which means that to position all the circles seen in the result, each circle is copied and assigned a specific position.

The UV map utilizes color ranges to define coordinates. For example, the lower left corner, represented by black, corresponds to coordinates 0,0 (zero in the red channel and zero in the green channel produce black). The upper left corner, represented by green, corresponds to coordinates 0,1 (zero in the red channel and one in the green channel lead to green). The upper right corner, represented by yellow, corresponds to coordinates 1,1 (one assigned to both the red and green channels results in yellow). Finally, the lower right corner, represented by red, corresponds to coordinates 1,0 (one in the red channel and zero in the green channel create red). Additionally, it’s important to note that in this software, the coordinates 0,0 refer to the center of the space. Therefore, when the grid is drawn, its black lower left corner is placed at the center. To move the grid so that the black corner aligns with the lower left corner of the space, an offset of -0.5 must be applied to every position.
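The relationship between corner colors and coordinates can be checked with a small Python sketch. The functions `uv_map` and `centered_positions` are hypothetical helpers written for this example, and only the red (X) and green (Y) channels are modeled.

```python
def uv_map(columns, rows):
    """Return rows of (r, g) pairs; r encodes X and g encodes Y, both 0..1.

    Row 0 is the bottom row, so (0, 0) is the black lower left corner.
    """
    return [[(x / (columns - 1), y / (rows - 1)) for x in range(columns)]
            for y in range(rows)]

def centered_positions(uv, offset=-0.5):
    """Shift every coordinate so the grid is centered on (0, 0)."""
    return [[(r + offset, g + offset) for r, g in row] for row in uv]

uv = uv_map(5, 5)
positions = centered_positions(uv)
print(uv[0][0], uv[4][0], uv[4][4], uv[0][4])  # black, green, yellow, red corners
print(positions[0][0], positions[4][4])        # lower left and upper right after the offset
```

After the offset of -0.5, the black corner sits at (-0.5, -0.5) and the yellow corner at (0.5, 0.5), so the grid is centered on the origin of the space.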

Noise

Figure 4.3: Noise in TouchDesigner

This network emphasizes the concept of randomness. In TouchDesigner, randomness is represented by an unpredictable sequence of numbers generated by a machine. One common form of randomness in the visual and sound environment is noise. Noise is both a form of randomness and the name of an operator in TouchDesigner. A noise operator provides both position and color information. What makes noise valuable in TouchDesigner is the ability to introduce movement in each of its channels (red, green, and blue), which correspond to X, Y, and Z positions as mentioned earlier, using Python expressions for its parameters. By mapping these parameters to other features in the network, the movement generated by the noise operator can influence the final visual outcome.

This is precisely why, in the example shown in Figure 4.3, the shape composed of instanced circles changes color and exhibits movement. What’s happening here is that the color and position information from the noise operator is mapped to the color and position information of the instanced circles. Consequently, it affects the overall visual result.

Photographs and Particles

Based on the theory that particle systems consist of numerous tiny objects, one interesting example of an object that can be represented as a particle is the pixel of a photograph. By taking pixels from different photographs and allowing them to interact and merge with one another, we can capture the shape and essence of a particular moment in time and blend it with another.

For me, this process symbolizes the transformation of shape and form into spirit and soul, as two souls detach from their bodies and merge into a new, unique shape.

Now, let’s explore the step-by-step process of achieving certain effects in TouchDesigner and the elements I utilized.

The Pixel as a Particle

If we consider a texture map to be an image with a certain height and width, we can view each pixel in that image as corresponding to a particle. Each texture map can represent a different feature, such as position, rotation, or color. The idea is that you can have a series of texture maps where each pixel in a map corresponds to a particle in the system. Each particle can then refer to these texture maps to determine its movement, color, and other properties.

Building upon the concept of Instancing that I previously described, we can create as many circles as there are pixels in a photograph. Then, we can map the position of each circle to the position of each pixel. By doing this, we can organize numerous circles side by side, effectively creating a particle system based on the positions of the photograph’s pixels. Subsequently, we assign the color of each pixel as the texture of each circle. By arranging these circles in the correct manner, we can recreate the photograph using tiny circles that we can later manipulate further.

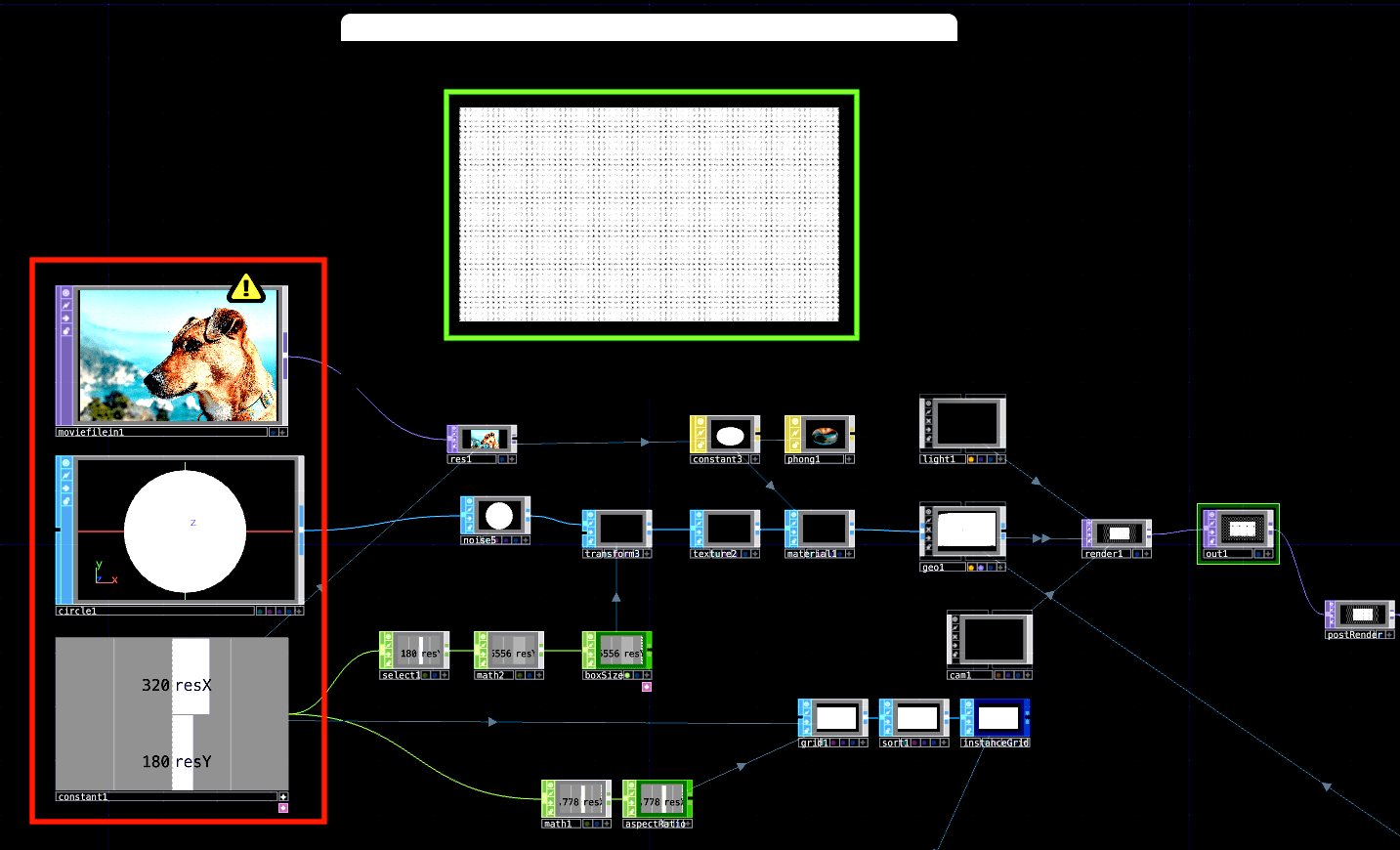

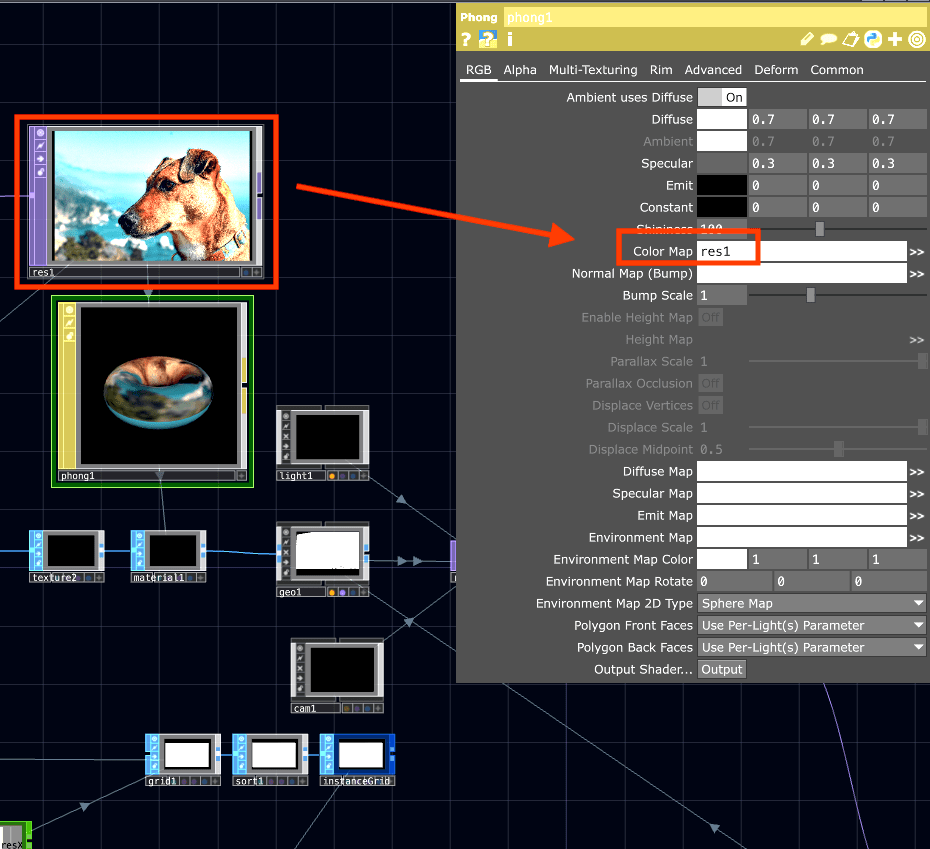

Figure 4.4: Instancing circles using Photograph data

Figure 4.4 displays the network for the initial stage of the process, starting with a photograph and a 3D circular shape. The first step in recreating this process is to determine the desired resolution for the photograph to be manipulated. This resolution is crucial for the machine, considering that the example photograph (as well as most photographs used in the project) has a resolution of 6000 × 4000 pixels, resulting in a total of 24,000,000 pixels that need to be processed. Each pixel corresponds to a circle that needs to be created and arranged.

In this project, the resolution of the photograph manipulated is not significant since each pixel will be modified by position and size later in the process. To optimize processing on the computer’s graphics card, the photograph is resized to 320x180 pixels.

Next, the circle is adjusted to match the correct aspect ratio by manipulating its radius, and then 57,600 instances of the circle are created, one for each pixel of the 320x180 image. In the network, I utilize the desired resolution of 320x180 pixels to generate a grid with the appropriate number of rows and columns. This grid information is used to determine the position of each circle instance in space. The large white square in the center of Figure 4.4, highlighted in green, represents a grid composed of 3D circles, with each circle representing the position of a pixel from the original photograph. This setup ensures that every particle in the network is linked to this grid, enabling seamless replacement of the image with another.

Once the circles are created, the next step is to adjust the texture information of each circle to match the corresponding pixel. In TouchDesigner, this is accomplished using a group of operators called Materials. A Material is assigned to each circle, and the color of the material is mapped to the colors of the photograph. Figure 4.4 provides a representation of this process, where the “res1” operator represents the photograph after being adjusted for resolution.

By following this workflow, the network establishes the foundation for further modifications and transformations of the circles based on the original photograph.
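The workflow above, one circle per pixel with its position taken from the grid and its color taken from the photograph, can be approximated in plain Python. This sketch makes several assumptions that are not in the original network: the photograph is represented as rows of (r, g, b) tuples, the function name `photo_to_instances` is invented for the example, and both axes are mapped to the range -0.5 to 0.5 without correcting for aspect ratio.

```python
def photo_to_instances(photo):
    """Turn a photo (rows of (r, g, b) tuples, top row first) into instances.

    Each instance is a dict holding a position in -0.5..0.5 and the pixel color.
    """
    rows, columns = len(photo), len(photo[0])
    instances = []
    for row_index, row in enumerate(photo):
        for column_index, color in enumerate(row):
            instances.append({
                "x": column_index / (columns - 1) - 0.5,
                # Flip Y so the top row of the photo sits at the top of the grid.
                "y": 0.5 - row_index / (rows - 1),
                "z": 0.0,
                "color": color,
            })
    return instances

# A stand-in 320 x 180 "photograph" filled with a single gray value.
photo = [[(0.5, 0.5, 0.5)] * 320 for _ in range(180)]
instances = photo_to_instances(photo)
print(len(instances))  # 57600, one circle per pixel
```

Because every instance keeps its own position and color, swapping in another photograph of the same resolution only changes the `color` values, which mirrors how the grid allows one image to be replaced with another.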

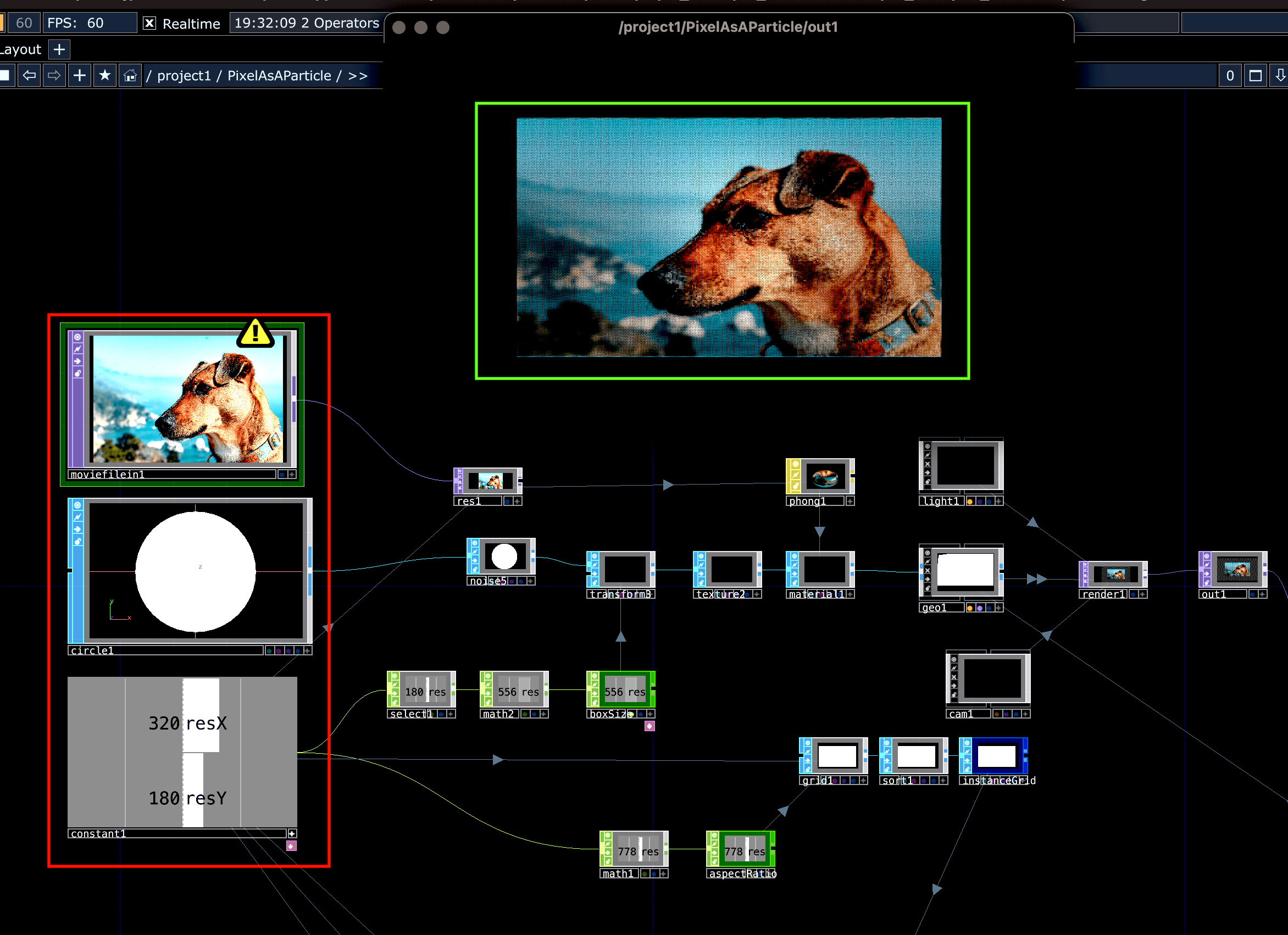

Figure 4.5: Color mapping in TouchDesigner

By assigning each circle with the color of its corresponding pixel, the photograph is now prepared for 3D manipulation, as illustrated in Figure 4.5. The section highlighted in red denotes the initial stage of the process, while the photograph highlighted in green represents the recreated image constructed using circles as small particles.

With this setup, the network is ready to apply various transformations and effects to the circles, allowing for creative and artistic manipulation in a three-dimensional space.

Figure 4.6: Matching circle instances with pixel color information

In Figure 4.6, the network demonstrates the process of matching circle instances with pixel color information. This step ensures that each circle is correctly assigned the color of its corresponding pixel.

Shapes made of particle pixels

Now, let’s delve into the concept of creating shapes made of particle pixels. To symbolize the merging of spirit and soul, I decided to introduce movement to the position of each circle and foster interactions between them. These interactions give rise to diverse and captivating patterns, ultimately resulting in the formation of new and distinctive shapes.

By manipulating the positions of the circles and allowing them to interact, the network brings forth a visually engaging representation of the merging of spirits and souls.

Figure 4.7: Constant value added to original coordinates

The process of moving the particle positions involves manipulating the position values in the X, Y, and Z axes of the grid. In Figure 4.7, an example is shown where a constant value of 0.5 is added to all the circle positions. This has the effect of shifting the entire image towards the upper right corner of the space.

By adding 0.5 to all the position values, each point in the grid experiences the same amount of shifting in the same direction. Consequently, the entire image moves towards the positive range of the grid.

This technique allows for dynamic movement and repositioning of the circles, enabling the creation of various visual effects and transformations.

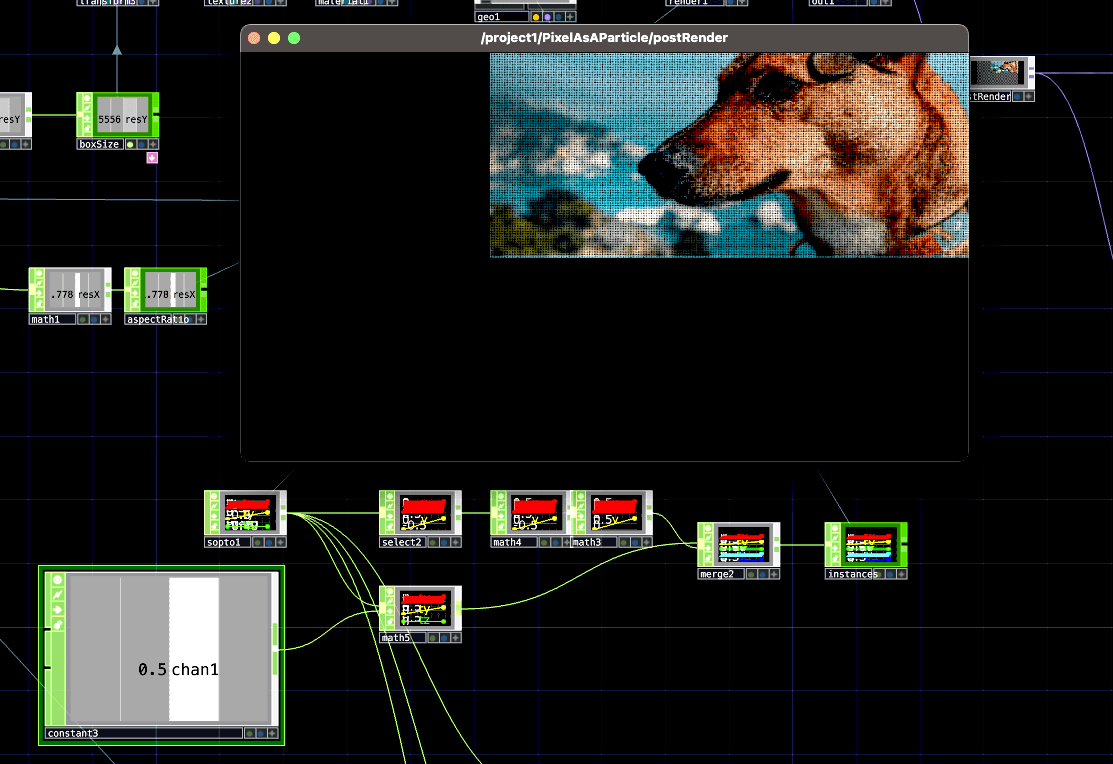

Figure 4.8: Pattern added to original coordinates

Considering this idea of moving points, it is possible to use other types of patterns to shift the position of the pixels. Figure 4.8 shows how one simple pattern can affect the image in different ways. The operator “pattern1” is a sine wave with an amplitude of 0.1. This pattern has two complete cycles, meaning that it goes from 0 to 0.1, then to -0.1, and back to 0, two times.

Each value of the pattern, through its cycles, is added to the original position values of the circles in the particle system. The image in the upper left corner of Figure 4.8 shows the pattern affecting only the X axis, while the image in the upper right corner shows it affecting only the Y axis. The image in the lower center shows the same pattern applied to the X, Y, and Z axes at the same time. As shown, the options for displacement and transformation are nearly infinite, leaving ample room for creative experimentation and unique visual outcomes.
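Both kinds of displacement discussed so far, a constant value and a sine pattern, amount to adding a list of offsets to one axis of the positions. The following Python sketch shows this under stated assumptions: `sine_pattern` and `add_offsets` are hypothetical helpers, and a short line of eight points stands in for the full grid of circles.

```python
import math

def sine_pattern(length, amplitude=0.1, cycles=2):
    """One offset per point: a sine wave completing `cycles` cycles over `length` points."""
    return [amplitude * math.sin(2 * math.pi * cycles * i / length)
            for i in range(length)]

def add_offsets(positions, offsets, axis):
    """Add offsets[i] to the chosen axis (0 = X, 1 = Y, 2 = Z) of positions[i]."""
    moved = []
    for position, offset in zip(positions, offsets):
        position = list(position)
        position[axis] += offset
        moved.append(tuple(position))
    return moved

# Eight points on a horizontal line through the center of the space.
line = [(i / 7 - 0.5, 0.0, 0.0) for i in range(8)]
wavy = add_offsets(line, sine_pattern(len(line)), axis=1)  # sine pattern on Y only
shifted = add_offsets(line, [0.5] * len(line), axis=0)     # constant 0.5 on X only
```

Changing `axis`, `amplitude`, or `cycles` gives a different deformation of the same points, and applying `add_offsets` once per axis affects X, Y, and Z together.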

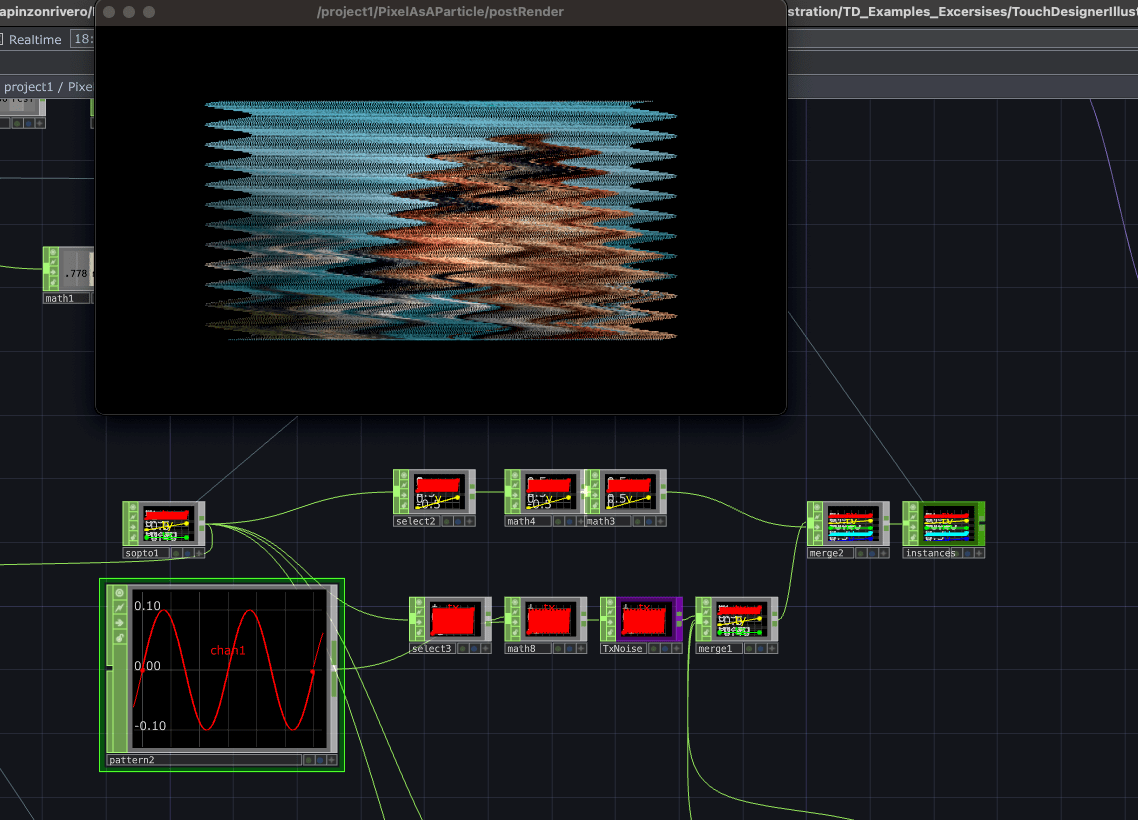

Different ideas on how to displace pixels are abundant. For this project, I wanted to be able to move each separate point to a completely different position. This was achieved by using the Noise operator previously mentioned.

Figure 4.9: Noise texture to data

Noise provides four channels of random numbers (RGBA). I convert the noise texture’s color channels into data that can be read as position information. After obtaining the new position information from the noise, I store it back into the instance data, updating the positions of the circles. The use of Noise allows for the generation of ever-changing patterns and movements, adding complexity and uniqueness to the particle system.
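Reading color channels as positions can be sketched as follows. Note the substitution: TouchDesigner’s Noise operator produces smooth, coherent noise, whereas this example uses independent random numbers from Python’s `random` module as a simple stand-in. The names `noise_texture` and `noise_to_positions` and the `amplitude` parameter are assumptions for illustration.

```python
import random

def noise_texture(columns, rows, seed=0):
    """Stand-in for a noise texture: one random (r, g, b, a) tuple per pixel, each 0..1."""
    rng = random.Random(seed)
    return [[tuple(rng.random() for _ in range(4)) for _ in range(columns)]
            for _ in range(rows)]

def noise_to_positions(texture, amplitude=1.0):
    """Read red, green, and blue as X, Y, and Z, recentered around 0."""
    return [((r - 0.5) * amplitude, (g - 0.5) * amplitude, (b - 0.5) * amplitude)
            for row in texture for r, g, b, _alpha in row]

# One new position per circle of a 320 x 180 grid.
positions = noise_to_positions(noise_texture(320, 180), amplitude=2.0)
```

Generating a new texture on every frame, for example by changing `seed`, would move every circle to a new place, which is the role that the animated noise parameters play in the network.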

Figure 4.10: Noise Texture Parameters

The added value of doing it this way is that the Noise operator provides various parameters that can be modified, which further alters the interaction of the pixel particles. Figure 4.10 shows the same photograph being affected by different parameters of the Noise texture, such as Amplitude, Exponent, and Period. It shows what happens when the parameters of the particle system are manipulated using time and movement.

Figure 4.11: Noise Texture Parameters

When I went further into the extraction of an image through a conceptual lens, I was thinking about the overlap between spirituality, the universe, the galaxy, and the cosmos. This invited many contemplations regarding the interconnectedness of all things.

Additionally, I was thinking about the movement of water in the ocean, a fundamental force on our planet, and how it can symbolize the origins of life and its connection to the larger cosmic forces.

Just as the water flows and connects different parts of the Earth, my visuals explore a connection between our earthly existence and the vastness of the universe. The stars and planets, with their cosmic energy, serve as reminders of our place in a grander cosmic order.

The concept of interconnection extends beyond physical boundaries and embraces our ancestral ties. We are linked to our ancestors through time and heritage. Their energy and experiences continue to resonate within us. In the project I was exploring ways to visually represent this interconnectedness and showcase the intricate relationship between past, present, and future.

Chapter 5

Do you know you are not a human? - Sound Design

It was crucial for me, as a sound engineer and music composer, to create sound linked directly to the piece, allowing viewers to both see and hear the particles. I was interested in cohesion and in creating an immersive environment. To achieve this, I hoped to use the same concept of taking particles from an image, but this time taking particles from a sound wave that came from recordings of my dog. I wanted to turn the dog’s sounds into instruments that I could play in response to the particles dancing across the screen.

Introduction to Max/MSP

Max/MSP is a visual programming language for music and sound used worldwide by composers and sound makers. As with other programming or coding environments, Max provides a blank canvas where you can create anything and customize it to the needs of your project.

The editing areas in Max are called patches, and they correspond to TouchDesigner’s networks. Here the process is developed in the form of dataflow and is arranged by interconnecting blocks called objects, which play the role of the operator boxes in TouchDesigner. Each of these objects receives an input and generates an output, passing signal and information from one to another.

Simple Patches and Oscillators in Max/MSP

To get sound from a digital synthesizer, it is necessary to generate a signal with a specific waveform. To do this, we use oscillators. Oscillators are usually divided into different kinds; some of the most commonly used ones include sawtooth, square, sine, triangle, and pulse. These oscillators take a frequency number at which they oscillate and produce a specific sound. Each of the different types of oscillators is represented by distinct characteristics that describe the sound.

Figure 5.1: Simple Max/MSP Patch by Luisa Pinzon

Figure 5.1 illustrates a simple patch. Here there are two sawtooth wave generators, with a frequency of 79 Hz assigned on the left and 78 Hz on the right. The audio signal, represented with a dotted line, then goes into an audio signal multiplier. In this way, the slider or fader at the right can control the amount of signal that is later passed into the DAC (Digital-to-Analog Converter).
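The signal flow of this patch can be approximated in Python by computing the samples directly. This is a sketch under assumptions: it uses a naive (non band-limited) sawtooth, a sample rate of 44,100 Hz, and it assumes the two oscillators are summed before being scaled by the fader. The function names are invented for the example.

```python
SAMPLE_RATE = 44100

def sawtooth(frequency, n_samples, sample_rate=SAMPLE_RATE):
    """Naive sawtooth rising from -1 to 1 once per cycle."""
    return [2.0 * ((frequency * n / sample_rate) % 1.0) - 1.0
            for n in range(n_samples)]

def mix_with_gain(left, right, gain):
    """Sum two signals, then scale the sum by a 0..1 gain (the fader)."""
    return [gain * (a + b) for a, b in zip(left, right)]

# One second of audio: 79 Hz and 78 Hz sawtooth waves with the fader at one half.
one_second = mix_with_gain(sawtooth(79, SAMPLE_RATE), sawtooth(78, SAMPLE_RATE), gain=0.5)
```

Since the two frequencies differ by only 1 Hz, the combined signal slowly drifts in and out of phase, producing an audible beating about once per second.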

Figure 5.2: Monophonic Synthesizer in Max/MSP

Similar to TouchDesigner’s networks, Max’s patches can incorporate various audio concepts. Figure 5.2, for example, shows the creation of a simple monophonic synthesizer, capable of playing only one note at a time (no chords). The initial object in the signal chain is a MIDI (Musical Instrument Digital Interface) keyboard, which has two outputs: one for MIDI notes and another for velocity. These outputs provide numbers ranging from 0 to 127. The MIDI note then passes through an object that converts it into the corresponding frequency (mtof, MIDI to frequency), which is then fed into the sawtooth waveform oscillators. Simultaneously, the MIDI note’s velocity, indicating the intensity with which the note is played, is scaled from its original range of 0 to 127 to a range of 0 to 1, representing digital amplitude. This scaled value is then passed through an envelope or ADSR (Attack, Decay, Sustain, Release) object. The ADSR shapes the amplitude over time through four stages: Attack (the time it takes for the signal to reach maximum amplitude), Decay (the time it takes for the signal to fall from maximum amplitude to the sustain level), Sustain (the amplitude level at which the signal stays while the note is held), and Release (the time it takes for the signal to move from the sustain amplitude to zero). The ADSR output is multiplied by the oscillator output to control the signal’s dynamic range. It then goes through a fader that adjusts the volume before being sent to the DAC.
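The three conversions in this signal chain can be written out as small Python functions. The note-to-frequency formula is the standard equal-temperament conversion with A4 (MIDI note 69) at 440 Hz. The envelope is a simple linear ADSR written for illustration; its default times and sustain level are assumptions, and it does not reproduce the behavior of any particular Max object.

```python
def mtof(midi_note):
    """Convert a MIDI note number to a frequency in Hz (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((midi_note - 69) / 12)

def velocity_to_amplitude(velocity):
    """Scale a MIDI velocity (0..127) to a digital amplitude (0..1)."""
    return velocity / 127

def adsr(t, note_length, attack=0.01, decay=0.1, sustain=0.7, release=0.3):
    """Envelope value (0..1) at time t seconds for a note held for note_length seconds.

    Assumes the note is held longer than attack + decay.
    """
    if t < 0:
        return 0.0
    if t < attack:
        return t / attack                                     # rise to full level
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay   # fall to the sustain level
    if t < note_length:
        return sustain                                        # hold while the key is down
    if t < note_length + release:
        return sustain * (1.0 - (t - note_length) / release)  # fade to silence
    return 0.0

print(mtof(69))                    # 440.0
print(velocity_to_amplitude(127))  # 1.0
```

Multiplying each oscillator sample by `velocity_to_amplitude(velocity) * adsr(t, note_length)` gives the amplitude shaping that the patch performs before the fader.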

Audio input in Max/MSP

Another source of sound that can be processed in a computer is an existing audio file or the input from an analog source, such as a microphone. This signal can then be processed by combining oscillators or utilizing various processing components available for interconnecting objects in Max.

By combining these two elements, it is possible to achieve captivating results without the need to open a DAW (Digital Audio Workstation) software.

Figure 5.3: Audio Buffer in Max/MSP. Original patch by Eric Heep, edited by Luisa Pinzon

Figure 5.3 demonstrates a small but powerful combination of the previously mentioned features. On the left side, we load a waveform named “blackbird,” which is stored in a buffer with the name “bb.” On the right side, there are several connections that enable us to experiment with the loaded waveform. The audio file is played at varying speeds and through different segments by utilizing a sine waveform oscillator to control the playback object. The object “wave~ bb” receives input from the sine waveform oscillator, which in turn receives a frequency parameter at the top (similar to the example mentioned earlier in this section). By manipulating this frequency, the speed of the oscillation changes, which in turn tells the playback object “wave~” how to play the audio file.
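The idea of letting a sine oscillator decide which part of a recording is played can be sketched in Python. This is a simplified illustration and not a reproduction of the Max patch: the function name `sine_scan` is invented, the sine output is assumed to be rescaled from the range -1 to 1 into a normalized read position from 0 to 1, and samples are picked by rounding to the nearest index without interpolation.

```python
import math

def sine_scan(buffer, lfo_frequency, n_samples, sample_rate=44100):
    """Read `buffer` at positions driven by a sine oscillator.

    The sine (-1..1) is rescaled to 0..1 and used as a normalized read position,
    so playback sweeps forward and backward through the recording.
    """
    output = []
    last_index = len(buffer) - 1
    for n in range(n_samples):
        sine = math.sin(2 * math.pi * lfo_frequency * n / sample_rate)
        position = (sine + 1.0) / 2.0  # normalized read position, 0..1
        output.append(buffer[round(position * last_index)])
    return output

# A ramp of numbers stands in for the recorded audio samples.
recording = list(range(101))
scanned = sine_scan(recording, lfo_frequency=0.5, n_samples=44100)
```

A low oscillator frequency sweeps slowly through the recording, while a higher one scans it faster, which changes both the speed and the pitch of what is heard.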

This provides us with an initial glimpse and ideas on how we can construct a patch that enables us to manipulate pre-recorded audio and transform it into a sound design or composition that complements and engages with the visual particle pixel system.

Sound Synthesis introduction

Technological advancements have made sound synthesis achievable both through hardware and software. Hardware synthesizers are equipped with a computer, memory, and physical controls such as buttons and a musical keyboard. In contrast, software synthesizers are programmed to utilize the computer’s processor and can employ various algorithms and synthesis methods that are difficult to replicate in hardware. The range of programming languages that can be used for sound synthesis is virtually limitless.

Sound synthesis involves creating new sounds through non-acoustic means, whether analog or digital. According to the Royal Spanish Academy, synthesis is defined as the “composition of a whole by the meeting of its parts” (RAE, 2016), which forms the foundation for sound synthesis.

A sound synthesizer is a musical instrument used to generate sound signals through sound synthesis. This approach enables the creation of two types of sounds: entirely new sounds with unique timbre properties, pitch, duration, and intensity, and sounds that imitate existing ones by controlling the frequency spectrum and envelope. Sound synthesis can be performed using digital media, as well as acoustic and digital sound sources.

Granular synthesis

While oscillators are commonly used in audio synthesis, there is a unique type of synthesis known as granular synthesis that utilizes audio samples as its source. In granular synthesis, the audio sample is divided into tiny segments called grains to create different sounds.

There are two main approaches in granular synthesis. The first involves adding different grains from various sources sequentially, one after another. This allows for the creation of complex and evolving textures as the grains blend together. The second approach involves looping individual grains, repeating them at different rates to generate new sounds. Each grain can be manipulated individually, and they can be arranged in various ways to create intricate and expressive compositions.
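The two approaches can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the helper names are hypothetical, the "audio" is a list of integers so the result is easy to read, and the amplitude envelope that is normally applied to each grain is omitted.

```python
def slice_grains(samples, grain_size):
    """Split a list of samples into consecutive grains of `grain_size` samples."""
    return [samples[i:i + grain_size]
            for i in range(0, len(samples) - grain_size + 1, grain_size)]

def sequence_grains(grains, order):
    """Approach 1: place the chosen grains one after another."""
    out = []
    for index in order:
        out.extend(grains[index])
    return out

def loop_grain(grain, repeats):
    """Approach 2: repeat a single grain several times."""
    return list(grain) * repeats

# Two stand-in sources (integers instead of real audio samples).
source_a = list(range(0, 8))
source_b = list(range(100, 108))
grains = slice_grains(source_a, 4) + slice_grains(source_b, 4)

texture = sequence_grains(grains, [0, 2, 1, 3])  # grains from both sources interleaved
drone = loop_grain(grains[1], 3)                 # one grain looped three times
```

Here `texture` alternates grains from the two sources, while `drone` repeats a single grain; varying the grain size and the repetition rate is what changes the resulting timbre.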

Sound Sample as a Particle

To translate the concept of particles into sound, it is necessary to enter the domain of sound sampling. In simple terms, sampling involves taking a small portion of an existing audio recording and repurposing it to create a new sound piece. This sample can be manipulated by altering playback speed, direction, pitch, and more. Sampling as an artistic technique originated in France in the late 1940s, when composers began experimenting with looping and slicing segments of recordings. This movement, known as musique concrète or concrete music, marked a significant shift in how music was perceived, breaking away from traditional acoustic compositions. Nowadays, sampling is widely used across various music genres, particularly in Hip-Hop, for crafting drumbeats.

In this project, the sound samples were used in minute proportions, almost imperceptible to the listener, yet still retaining traces of their original form. The aim was to create a musical and sound design piece that evokes similar contemplations as the visual aspect, forging an immersive audiovisual environment. To stay true to the inspiration drawn from my connection with Aby, I captured various moments as audio recordings, encompassing barks, snores, paw sounds on the ground, whining, and even my partner’s voice while speaking to our dogs. These samples were subsequently employed as sound sources to construct digital instruments.

Digital Instrument design

I have developed a Max/MSP patch that serves as a versatile instrument and compositional tool, drawing inspiration from various audio tools and techniques. The primary functionalities of this instrument include pitch recognition, pitch adjustment, and pitch modulation.

By integrating these functionalities into a single space, I aimed to create a comprehensive tool that allows for the manipulation and exploration of sound recordings. This instrument combines elements from renowned audio tools such as Melodyne by Celemony, as well as the Simpler and Sampler instruments from Ableton Live.

Through my experience in the industry, I have encountered various audio tools that have inspired me, and I desired to have their capabilities consolidated in one convenient instrument. This Max/MSP patch functions as a culmination of those inspirations, providing a range of tools and features that enhance the compositional process and sound manipulation.

Instrument signal flow

The initial step in the process involves importing the sound into the Max/MSP patch and subsequently recognizing its pitch. To accomplish this, I incorporated an object called “retune~” into the patch. The “retune~” object offers the capability of detecting the frequency of the input signal, which can be accessed through its second output.

By utilizing the “retune~” object, the patch becomes equipped with the ability to analyze the pitch of the imported sound, providing valuable information about its fundamental frequency. This pitch recognition capability acts as a foundation for later stages in the instrument’s functionality, allowing for further manipulation and control over the sound.

Figure 5.4: Importing sound and detecting its pitch – Patch by Luisa Pinzon

Figure 5.4 illustrates the section of the instrument where the audio file is processed and its pitch is detected. The first step involves creating a buffer that can hold a defined number of samples at a time. In this particular case, the buffer is named “buff” and is set to hold 3000 samples. Once the audio file is loaded into the buffer, it is then routed to the “retune~” object. The “retune~” object analyzes the input signal and provides a frequency value as its output.
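To give an intuition for what a pitch detector does, the sketch below estimates a fundamental frequency using autocorrelation. This is a swapped-in, textbook technique chosen for illustration; it is not a description of how the Max object works internally, and the function name, frequency limits, and test tone are all assumptions.

```python
import math

def detect_pitch(samples, sample_rate, fmin=50.0, fmax=1000.0):
    """Estimate the fundamental frequency (Hz) from the autocorrelation peak."""
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(samples) - 1)
    best_lag, best_score = 0, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Compare the signal with a copy of itself delayed by `lag` samples.
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    # The lag with the strongest self-similarity is taken as one period.
    return sample_rate / best_lag if best_lag else 0.0

# Stand-in input: a pure 200 Hz sine instead of a real recording.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 200.0 * n / sample_rate) for n in range(800)]
estimate = detect_pitch(tone, sample_rate)
```

With a clean sine the estimate lands on the true frequency; real recordings such as barks or speech are far less stable, which is why a single reading is rarely enough.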

Figure 5.5: Average pitch – Patch by Luisa Pinzon

There is, however, a problem with this setup: because most samples come from everyday recordings of voices and sounds, the detected pitch changes constantly. To address this, the instrument does not detect and adjust the pitch of the input signal directly. Instead, the entire file is played once, and the output frequencies are collected and grouped using the “zl group” object.

The “zl group” object gathers all the detected frequencies into a single list, and the “mean” object then calculates their average. This average frequency becomes the reference pitch for the next signal processing stages. This approach ensures a more stable and consistent pitch estimate for the entire duration of the audio file. Figure 5.5 provides a visual representation of the signal flow, showing how the audio file is played, the frequencies are grouped using “zl group,” and the average frequency is determined using the “mean” object.
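The averaging step can be illustrated with a short Python sketch. The readings below are made-up values for the example, and skipping non-positive readings (frames where no pitch was found) is an assumption of this sketch rather than something the patch is known to do.

```python
from statistics import mean

def reference_pitch(detected_hz):
    """Average a list of detected frequencies in Hz (hypothetical helper)."""
    # Assumption: readings of 0 or below mean "no pitch found" and are skipped.
    voiced = [f for f in detected_hz if f > 0.0]
    return mean(voiced) if voiced else 0.0

# Made-up readings collected while the file plays once.
readings = [218.0, 222.0, 0.0, 219.0, 221.0]
avg_hz = reference_pitch(readings)
```

Even though the individual readings drift, the average gives one stable value that the later re-pitching stages can treat as the pitch of the whole recording.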

Figure 5.6: Re-pitch calculation – Patch by Luisa Pinzon

Once the pitch has been calculated, the re-pitching process begins by adjusting the detected pitch to a starting note, which in this case is C2 or MIDI note number 36. Figure 5.6 illustrates this section of the process, highlighted in red.

Later, the adjusted pitch can be further modified by the user, either through the built-in piano keyboard in Max/MSP or using an external MIDI controller device. Once the desired note is determined, a formula is employed to calculate the speed at which each sound should be played to achieve the desired pitch. Figure 5.6 displays this calculation, highlighted in green, using the function y = 2\^(x/12), where x is the distance from the detected pitch in half steps and y is the playback speed ratio. Integer values of x correspond to whole half steps; for example, x = 12 (one octave up) gives y = 2. The calculated speed information is then passed to the “groove” object, which is responsible for playing the final audio. This step is shown in Figure 5.6, highlighted in blue.
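As a worked example of the re-pitch arithmetic, the sketch below uses the standard equal-temperament relation, in which a shift of n half steps corresponds to a playback speed ratio of 2^(n/12). The function names, the A4 = 440 Hz tuning reference, and the 220 Hz example recording are assumptions made for illustration; only the C2 (MIDI 36) starting note comes from the text.

```python
import math

A4_HZ = 440.0   # assumed tuning reference (MIDI note 69)
BASE_NOTE = 36  # C2, the starting note named in the text

def hz_to_midi(freq_hz):
    """Convert a frequency in Hz to a (fractional) MIDI note number."""
    return 69.0 + 12.0 * math.log2(freq_hz / A4_HZ)

def playback_speed(detected_hz, target_note):
    """Speed ratio that moves a recording from its detected pitch to `target_note`."""
    semitones = target_note - hz_to_midi(detected_hz)
    return 2.0 ** (semitones / 12.0)

detected = 220.0                                # hypothetical average pitch (A3, MIDI 57)
to_base = playback_speed(detected, BASE_NOTE)   # 21 half steps down to C2
octave_down = playback_speed(detected, 45)      # A2, exactly one octave below
```

Playing at `octave_down` (half speed) lowers the recording by one octave, while `to_base` slows it further so that it sounds at C2; any key pressed afterwards simply changes the target note.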

Figure 5.7: Loop selection – Patch by Luisa Pinzon

Finally, to incorporate granular synthesis into the instrument, the user is given the ability to select a specific starting position within the waveform and determine the length of the loop that will be played. This functionality is demonstrated through the use of yellow knobs, as shown in Figure 5.7. By adjusting these knobs, the user can indicate to the “groove” object where to begin playing the audio within the buffer and define the duration of the selected audio portion.

These options provided by the “groove” object in Max/MSP enable the creation of granular synthesis textures, allowing for precise control over the playback position and length of the audio. This feature adds further depth and flexibility to the instrument, expanding the possibilities for sound manipulation and composition.
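The loop selection can be pictured with a small Python sketch. It is only an analogy to the behaviour described above: the function name is hypothetical, positions are expressed in samples for simplicity, and the input list is assumed to be non-empty.

```python
def loop_region(samples, start, length, n_out):
    """Produce `n_out` samples by looping the region that begins at `start`."""
    # Keep the region inside the buffer (assumes `samples` is not empty).
    start = max(0, min(start, len(samples) - 1))
    length = max(1, min(length, len(samples) - start))
    return [samples[start + (n % length)] for n in range(n_out)]

# Stand-in buffer: integers instead of real audio samples.
recording = list(range(10))
grain_loop = loop_region(recording, start=3, length=4, n_out=10)
```

Turning the "start" knob moves the region through the recording, and shortening "length" toward a few milliseconds of audio is what turns an ordinary loop into a grain-like texture.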

Music Composition

The sound piece acts as both a standalone composition and a supporting sound design for the particle system created in TouchDesigner. It is characterized by its spatial qualities, rich reverberation, and effects, incorporating sharp rhythmic elements and short sounds.

While the composition is approached in a conceptual and experimental manner, it is essential for me to create art that captivates the viewer’s eyes and ears, regardless of their background. This brings to mind the work of Alex Buck, a former DMA CalArts composer, who skillfully blends meaningful concepts with compositions that resonate with a wide audience.

“Convergence is often the magical force in the creative process that leads to an ear-catching work. Two or more seemingly disparate elements meet in the imagination of an artist who unites them into something new that resonates with both universal and personal meaning. This is the story behind Brazilian composer Alex Buck’s evocative eight-minute sonic journey Screaming Trees”9

By tapping into the magic of bringing different elements together in the creative process, where they blend harmoniously, my goal is to create a captivating and immersive sonic experience that speaks to everyone on a personal level and carries deep meaning.

Music style

As a musician and sound engineer, I’ve come to appreciate the importance of using references during the creative process, both in terms of sound quality and style. For this reason, I first approached the compositional process by drawing inspiration from specific artists whose work resonated with the ideas I had in mind.

Trent Reznor and Atticus Ross, well-known for their work in composing soundtracks, had a significant influence on my creative process, especially their work on the movie “Soul.” The themes explored in the film, such as the soul, the mind, and the afterlife, deeply resonated with the essence of my own project. Inspired by their skillful blending of acoustic elements, digital synthesizers, and processed signals, I found guidance for my artistic approach.

Another artist who greatly influenced my work was Frakkur, also known as Jónsi, who hails from Iceland. His album “2000-2004,” and the track “SFTLB2” in particular, captivated me with its unique blend of ambient sounds and rhythmic percussive samples. What particularly resonated with me was the distinctiveness of the sounds he created, which were not produced by traditional acoustic instruments, but rather by unknown samples of sound. This aspect deeply inspired my own project, as I aimed to create a piece that conveyed a sense of spatial depth while incorporating rhythmic elements that would actively engage the audience. Frakkur’s innovative approach sparked my curiosity and encouraged me to explore new sonic possibilities in my composition.

Taking inspiration from these references, I approached my composition by experimenting mostly with the samples and creating rhythmic patterns inspired by my South American culture and the African influences I gained during my time at CalArts. The resulting piece emerged with a strong beat that aligned with the visual style created in TouchDesigner. Simultaneously, I decided to create a second piece with a different mood, featuring longer sounds, pitch changes, and fewer rhythmic components. This imparted a sense of deeper connection to the project’s concept, resonating with our souls and spirits.

Both compositions were crafted in Ableton Live, taking advantage of its seamless integration with Max/MSP, which made for a smooth and efficient compositional process that yielded quick results.

Figure 5.8: Sound sampling examples in Max/MSP and Ableton Live by Luisa Pinzon

Mixing and Mastering

My original idea was to compose and create within Ableton Live, and later apply a mixing and mastering signal flow using the Pro Tools music software by Avid. This decision was influenced by my extensive experience as a sound engineer, where I primarily used Pro Tools for professional productions. However, as I progressed through the process, I realized the advantages of keeping the entire piece within Ableton Live. This approach provided greater flexibility in making compositional decisions while simultaneously handling the mixing and mastering aspects.

Equalizing

Figure 5.9: Equalizing – Ableton Session by Luisa Pinzon

When it comes to mixing music, it’s crucial to recognize that all the sounds should function cohesively as a group rather than individual instruments. It is essential to find a balance where the sounds complement each other and avoid excessive overlap. This principle applies during both the production and composing stages, as well as the mixing process.

To achieve this, the key is to select sounds that together cover the entire audible frequency spectrum, which ranges from roughly 20 Hz to 20 kHz for the human auditory system. Each sound in the composition was carefully chosen to fulfill this objective. Through the use of equalizers, I was able to carve out distinct spaces for each sound, allowing them to occupy their own frequencies within the mix. In Figure 5.9, you can observe some of the equalizers employed on the instruments, providing insight into how each sound occupies a unique space in the frequency spectrum.

Compression and Reverberation

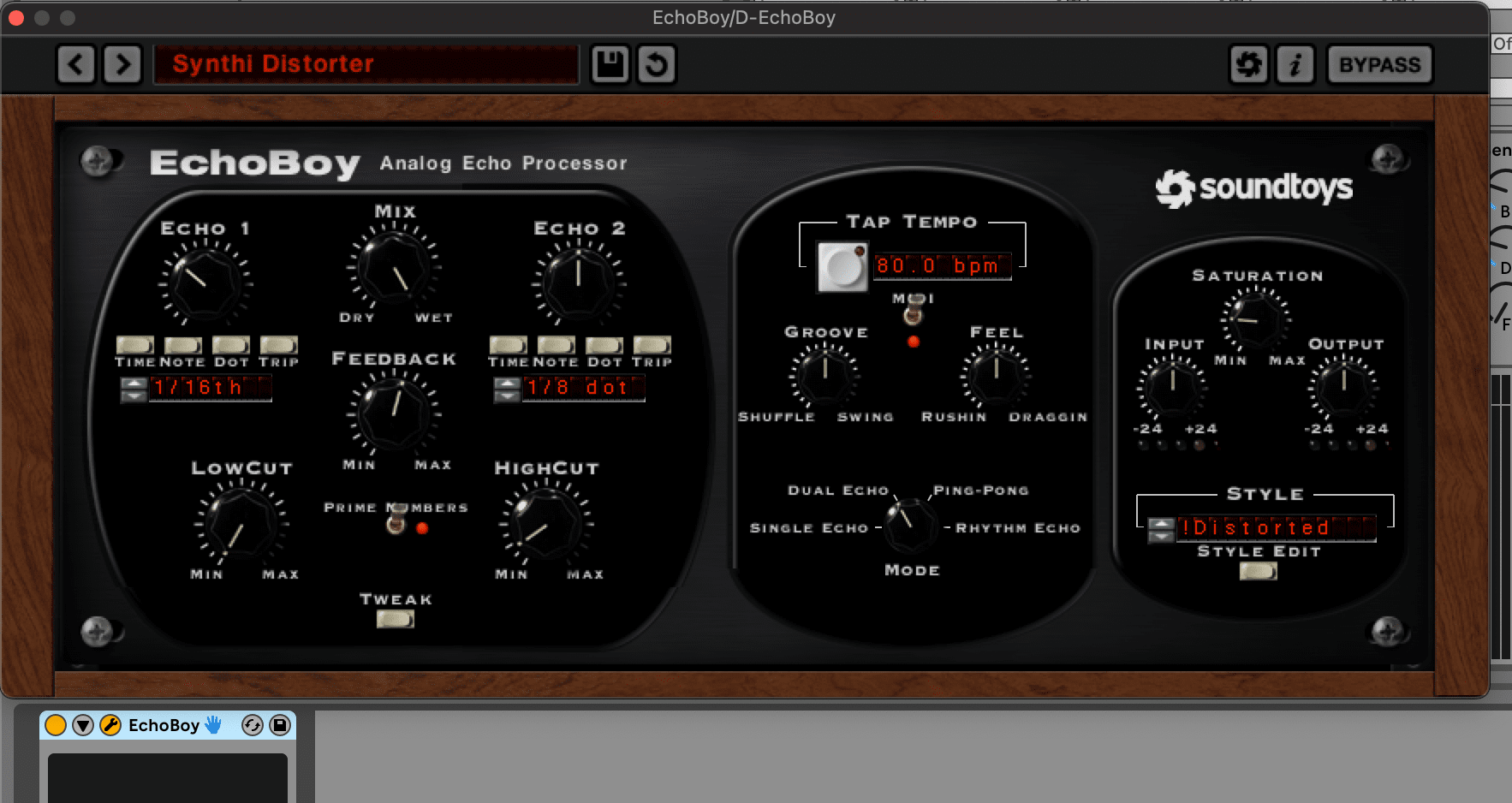

Figure 5.10: Equalizing – Ableton Session by Luisa Pinzon

The dynamic range and spatiality of the piece were achieved by utilizing a variety of compressors, reverb, and delay effects. During the session, I incorporated over five distinct time-based effects, such as delay and reverb, which were carefully adjusted to match the BPM (beats per minute) of the music. This attention to detail ensured a cohesive and immersive sonic environment. Figure 5.10 displays a selection of the different effects employed in the piece.
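Matching a time-based effect to the tempo comes down to simple arithmetic: one beat lasts 60,000 ms divided by the BPM. The sketch below illustrates this; the function name and the tempo of 120 BPM are hypothetical values chosen for the example, not the settings used in the session.

```python
def note_ms(bpm, beats=1.0):
    """Duration in milliseconds of `beats` quarter-note beats at tempo `bpm`."""
    return 60000.0 / bpm * beats

# Hypothetical tempo for illustration only.
quarter = note_ms(120)              # one beat
eighth = note_ms(120, 0.5)          # half a beat
dotted_eighth = note_ms(120, 0.75)  # three quarters of a beat
```

Setting a delay time or a reverb pre-delay to one of these values keeps the repeats and reflections aligned with the rhythm of the piece.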

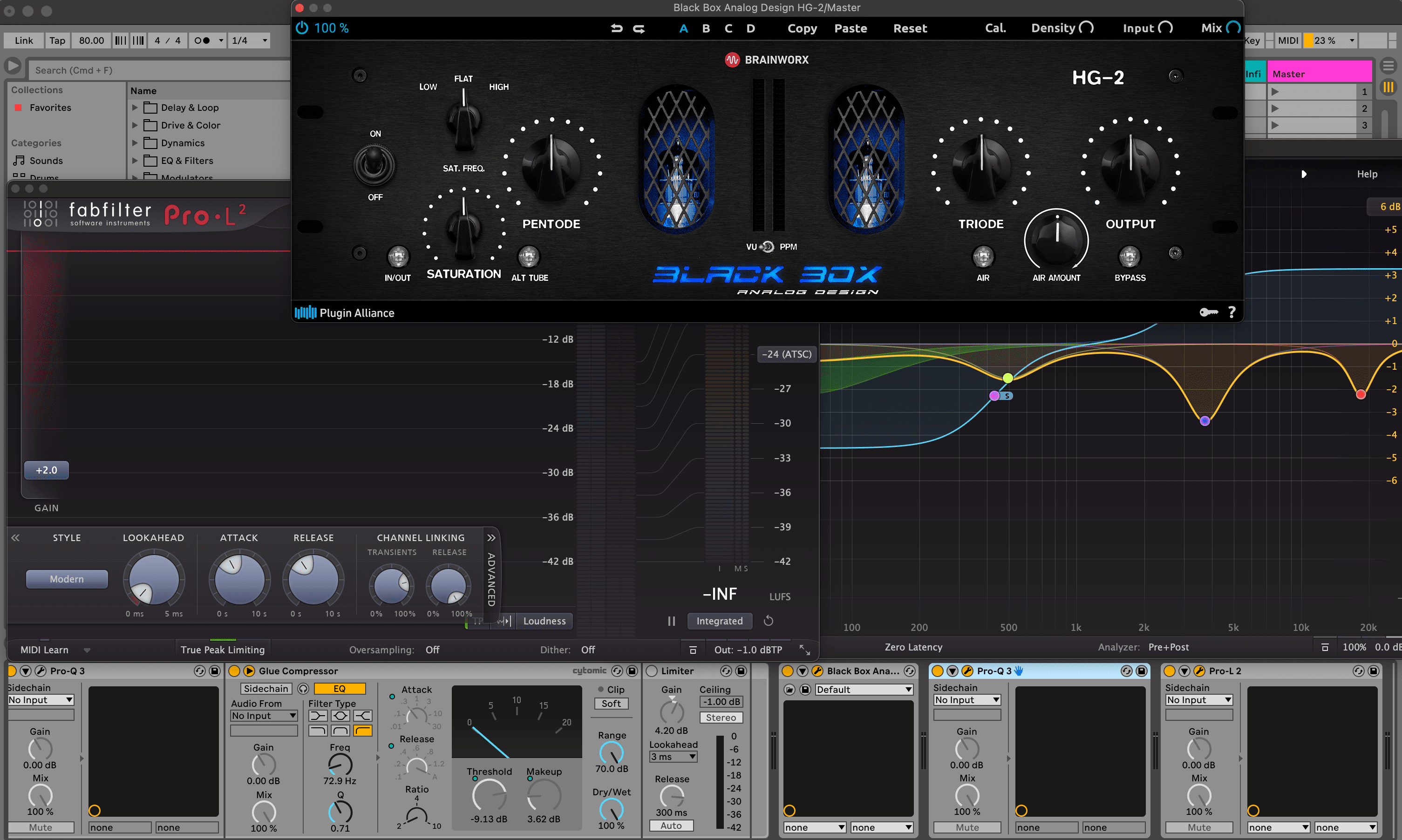

Figure 5.11: Mastering chain – Ableton Session by Luisa Pinzon

Mastering

Finally, I implemented a simple mastering process on the master channel of the composition to attain the intended loudness level required by most streaming platforms. This procedure also added a sense of unity and coherence to the overall piece, ensuring that all instruments and sounds felt interconnected. The plugin chain employed for this purpose is shown in Figure 5.11. First, an equalizer was applied to address any harsh frequencies that may emerge when combining multiple instruments. Next, a compressor was used to manage the overall dynamic range. The signal then passed through the Blackbox plugin from Plugin Alliance, which introduced various harmonics depending on the selected configuration. Subsequently, another equalizer was employed to shape and refine the final frequency spectrum, making subtle adjustments of no more than 2 or 3 dB per frequency band. Lastly, a limiter was applied to achieve the intended final volume level for export.
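To put the size of these equalizer adjustments in perspective, a change in decibels can be converted to a linear amplitude ratio with the standard relation gain = 10^(dB/20). The helper names in this sketch are hypothetical.

```python
import math

def db_to_gain(db):
    """Convert a level change in decibels to a linear amplitude ratio."""
    return 10.0 ** (db / 20.0)

def gain_to_db(gain):
    """Convert a linear amplitude ratio back to decibels."""
    return 20.0 * math.log10(gain)

boost = db_to_gain(3.0)   # amplitude ratio for a 3 dB boost
cut = db_to_gain(-3.0)    # amplitude ratio for a 3 dB cut
```

A 3 dB boost multiplies the amplitude by about 1.41 and a 3 dB cut by about 0.71, so adjustments of this size shape the spectrum gently rather than transforming it.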

Music streaming